You probably have some idea of what a cycle is. In layperson’s terms, a cycle is a set of repeating processes. From a technical viewpoint, software development can likewise be broken into a set of processes, which is why we speak of a software development life cycle. The Software Development Life Cycle (SDLC) refers to the steps involved in developing software applications. Each stage has a specific role and breaks the overall development process into manageable tasks. These tasks are measured, assigned, and completed against a defined set of development requirements.

Understanding the Software Development Life Cycle (SDLC)

SDLC is a set of standard business practices used in software application development. The process typically breaks down into six to eight steps, which commonly include requirements, planning, design, documentation, building, testing, deployment, and maintenance. However, the exact stages often depend on the project managers running the software project: some omit certain steps, while others follow the entire process to the letter. Either way, it is important to understand that these steps are the essential building blocks for developing any software product.

You might ask why an application cannot simply be built without following these processes. The software development life cycle matters because it provides a way to measure and manage the development process. When teams adhere to its stages, the final product tends to be better and more reliable. SDLC also promotes efficiency at each stage of development. With growing demand for software, SDLC eases the burden of development by helping teams reduce costs, deliver results faster, and meet each of the customer’s needs.

Why SDLC Is Important for Businesses

A structured Software Development Life Cycle (SDLC) is not merely a technical framework; it is a business governance mechanism that protects investment, reduces uncertainty, and improves delivery predictability. Organizations that adopt a defined SDLC consistently demonstrate better cost control, higher product quality, and stronger regulatory alignment compared to those relying on ad hoc development practices. For enterprises operating in regulated or high-risk industries, SDLC is not optional. It is foundational to operational stability and long-term scalability.

-

Risk Reduction Through Structured Governance

Software projects fail most often due to unclear requirements, uncontrolled scope expansion, poor stakeholder alignment, or late-stage defect discovery. A disciplined SDLC reduces these risks by introducing structured checkpoints at every phase. Planning validates feasibility before resources are committed. Requirements definition ensures that business expectations are documented and traceable. Design reviews identify architectural weaknesses before coding begins. Testing frameworks prevent defective releases from reaching production environments.

Industry research from sources such as the Standish Group CHAOS reports consistently shows that projects with defined processes and governance structures have higher success rates than those without formal methodologies. When SDLC is properly implemented, risk is distributed across stages rather than concentrated at deployment. This staged risk management approach significantly lowers the probability of catastrophic failure.

For example, a healthcare information system handling electronic medical records cannot afford ambiguous specifications. A missing validation rule or untracked data modification could result in compliance violations or patient safety risks. A structured SDLC ensures that requirement traceability matrices, validation testing, and audit logging mechanisms are defined early and verified systematically before go-live.

-

Budget Predictability and Cost Control

One of the most overlooked advantages of SDLC is financial predictability. Unstructured development often leads to rework, delayed timelines, and unplanned scope expansion. The earlier a defect or misunderstanding is identified, the less expensive it is to correct. According to widely cited IBM Systems Sciences Institute findings, fixing a defect in production can cost 15 to 50 times more than correcting it during the design phase. While cost multipliers vary by project type, the principle remains consistent: the later a defect is discovered, the more it costs to fix.

SDLC introduces milestone-based planning and effort estimation. During the planning phase, organizations evaluate labor costs, infrastructure needs, licensing requirements, and contingency buffers. This enables more accurate budgeting and phased investment allocation. Stakeholders gain financial transparency, which is particularly important for enterprise procurement processes and board-level approvals.
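As a rough illustration of the arithmetic behind milestone-based budgeting, the Python sketch below sums hypothetical cost lines, adds a contingency buffer expressed as a fraction of the base cost, and splits the total across milestones by weight. The cost categories, the 15% contingency rate, and the milestone weights are assumptions chosen for the example, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class CostLine:
    """One line item in a planning-phase estimate (hypothetical structure)."""
    name: str
    amount: float  # in the project's currency


def estimate_budget(items: list[CostLine], contingency_rate: float) -> dict[str, float]:
    """Sum the base costs and add a contingency buffer as a fraction of the base."""
    if not 0 <= contingency_rate < 1:
        raise ValueError("contingency_rate should be a fraction such as 0.15")
    base = sum(item.amount for item in items)
    contingency = base * contingency_rate
    return {"base": base, "contingency": contingency, "total": base + contingency}


def allocate_by_milestone(total: float, weights: dict[str, float]) -> dict[str, float]:
    """Split a total budget across milestones in proportion to their weights."""
    weight_sum = sum(weights.values())
    return {name: total * weight / weight_sum for name, weight in weights.items()}


# Hypothetical figures for illustration only.
items = [
    CostLine("labor", 120_000),
    CostLine("infrastructure", 18_000),
    CostLine("licensing", 12_000),
]
budget = estimate_budget(items, contingency_rate=0.15)
phases = allocate_by_milestone(budget["total"], {"design": 1, "build": 3, "test": 1})
print(budget)
print(phases)
```

Keeping the contingency as an explicit line, rather than inflating each estimate, makes it visible to stakeholders reviewing the budget.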

Consider a fintech platform integrating payment gateways and fraud detection systems. Without structured requirement validation and integration testing phases, regulatory or technical gaps may surface post-launch, forcing expensive emergency fixes. A defined SDLC reduces such financial volatility by identifying integration risks before production deployment.

-

Requirement Clarity and Traceability

Requirement ambiguity is one of the leading causes of software rework. SDLC enforces requirement documentation through structured artifacts such as Software Requirement Specifications (SRS) and business requirement documents. This ensures that functional and non-functional requirements are clearly defined, approved, and traceable throughout development.

Requirement traceability matrices allow teams to map each requirement to its corresponding design component, development task, and test case. This traceability ensures that no business objective is lost during implementation. It also simplifies impact analysis when changes occur.
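A traceability matrix is essentially a mapping from each requirement to its design, task, and test artifacts. The minimal Python sketch below, which assumes a simple in-memory structure and hypothetical IDs such as `REQ-1` and `TC-31`, shows how such a matrix can flag requirements with missing links and support impact analysis by listing the tests tied to a changed requirement.

```python
from dataclasses import dataclass, field


@dataclass
class Requirement:
    """One row of a traceability matrix (hypothetical IDs throughout)."""
    req_id: str
    description: str
    design_refs: list[str] = field(default_factory=list)
    tasks: list[str] = field(default_factory=list)
    tests: list[str] = field(default_factory=list)


def coverage_gaps(matrix: list[Requirement]) -> dict[str, list[str]]:
    """Report, per requirement, which kinds of traceability links are missing."""
    gaps: dict[str, list[str]] = {}
    for req in matrix:
        links = (("design", req.design_refs), ("task", req.tasks), ("test", req.tests))
        missing = [label for label, refs in links if not refs]
        if missing:
            gaps[req.req_id] = missing
    return gaps


def impacted_tests(matrix: list[Requirement], changed_req: str) -> list[str]:
    """Impact analysis: the test cases to re-run when a requirement changes."""
    for req in matrix:
        if req.req_id == changed_req:
            return list(req.tests)
    raise KeyError(changed_req)


matrix = [
    Requirement("REQ-1", "User can export a report as CSV", ["DES-4"], ["TASK-12"], ["TC-31", "TC-32"]),
    Requirement("REQ-2", "Role-based access to dashboards", ["DES-7"], ["TASK-15"]),
]
print(coverage_gaps(matrix))           # REQ-2 has no linked test case yet
print(impacted_tests(matrix, "REQ-1"))
```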

For instance, a SaaS startup building a subscription-based analytics platform may initially define basic dashboard capabilities. During iterative releases, customers request additional reporting filters or role-based access controls. With structured SDLC governance, new requirements are formally evaluated, prioritized, and integrated into controlled release cycles rather than disrupting core architecture. This maintains system stability while enabling growth.

-

Regulatory Compliance and Audit Readiness

Industries such as healthcare, finance, insurance, and government operate under strict regulatory frameworks. Compliance standards such as HIPAA, GDPR, PCI-DSS, and SOC 2 require documented controls, security validation, and audit trails. SDLC provides the framework to embed compliance considerations into every development phase.

In healthcare systems, for example, audit logging is not merely a feature; it is a regulatory necessity. Systems must track who accessed patient records, when modifications occurred, and how data was transmitted. A secure SDLC ensures that these compliance requirements are defined during the requirements phase, architected during design, implemented with secure coding practices, and verified through validation testing.
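One common way to make an audit trail tamper-evident is hash chaining, where each entry stores a hash of the previous one. The Python sketch below is an illustrative, in-memory example of that technique, not a compliance-certified design; the field names (`user`, `action`, `record_id`, `channel`) are assumptions mapped to the who, what, when, and how described above. A production system would also need durable, access-controlled storage.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    """Append-only, hash-chained audit trail (illustrative, in-memory only)."""

    def __init__(self) -> None:
        self._entries: list[dict] = []

    def record(self, user: str, action: str, record_id: str, channel: str) -> dict:
        prev_hash = self._entries[-1]["hash"] if self._entries else "0" * 64
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),  # when
            "user": user,            # who accessed the record
            "action": action,        # e.g. "read" or "update"
            "record_id": record_id,  # which patient record
            "channel": channel,      # how the data was transmitted
            "prev_hash": prev_hash,  # links this entry to the one before it
        }
        # Hash the entry contents (including prev_hash) so any later edit breaks the chain.
        entry["hash"] = hashlib.sha256(json.dumps(entry, sort_keys=True).encode()).hexdigest()
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; return False if any entry was altered or reordered."""
        prev_hash = "0" * 64
        for entry in self._entries:
            body = {key: value for key, value in entry.items() if key != "hash"}
            if body["prev_hash"] != prev_hash:
                return False
            expected = hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()
            if entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.record("dr.smith", "read", "patient-1042", "https")
log.record("nurse.lee", "update", "patient-1042", "https")
print(log.verify())                        # True
log._entries[0]["user"] = "someone.else"   # simulate tampering with a stored entry
print(log.verify())                        # False
```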

Similarly, fintech applications require transaction traceability, encryption validation, identity verification mechanisms, and data retention controls. Compliance validation cannot be treated as an afterthought. SDLC enforces early inclusion of security and compliance reviews, reducing the risk of regulatory penalties or reputational damage.

-

Quality Assurance Governance

Quality assurance is not limited to functional testing. In enterprise environments, it includes performance validation, security testing, integration testing, usability verification, and regression analysis. SDLC formalizes QA as a parallel process rather than a final-stage activity.

Modern SDLC frameworks incorporate automated testing pipelines, continuous integration practices, and structured release validation procedures. This ensures consistent quality across environments and releases. Without lifecycle governance, testing often becomes reactive, leading to unstable releases and frequent hotfixes.

For example, a SaaS company releasing weekly updates must ensure that new features do not break existing workflows. A structured SDLC integrates automated regression testing and release checkpoints, enabling rapid iteration without sacrificing reliability. This is particularly critical in subscription-based models where uptime and trust directly impact revenue retention.

-

Stakeholder Alignment and Transparency

Software projects involve multiple stakeholders: executive sponsors, product owners, compliance teams, developers, QA engineers, and end users. Misalignment among these groups often leads to delays and dissatisfaction. SDLC introduces structured review stages and sign-off processes, ensuring shared understanding at every milestone.

During planning, business objectives are clarified. During requirement definition, stakeholders validate expected outcomes. During testing, user acceptance testing confirms that delivered functionality meets business needs. This structured collaboration reduces conflict and accelerates approval cycles.

In enterprise SaaS organizations, release governance is critical. Rapid iteration is necessary for competitiveness, yet releases must be documented and controlled. A disciplined SDLC balances agility with oversight by defining sprint cycles, release gates, and deployment validation procedures.

-

Strategic Impact on Long-Term Scalability

Beyond operational control, SDLC directly influences long-term scalability and maintainability. Systems built without architectural foresight often accumulate technical debt, leading to performance bottlenecks and expensive refactoring. Structured design reviews and documentation standards mitigate this risk.

Organizations planning digital transformation initiatives, cloud migrations, or AI integrations benefit significantly from lifecycle governance. A clearly documented architecture and requirement traceability framework makes future expansion more predictable and less disruptive.

For modern enterprises, SDLC is not a theoretical framework but a strategic business instrument. It reduces risk, improves budget control, clarifies requirements, embeds compliance, strengthens quality governance, and aligns stakeholders around measurable objectives. Whether building a healthcare information system requiring audit validation, a fintech application demanding compliance verification, or a SaaS platform balancing rapid iteration with release governance, a structured SDLC is fundamental to delivering reliable, scalable, and commercially viable software solutions.

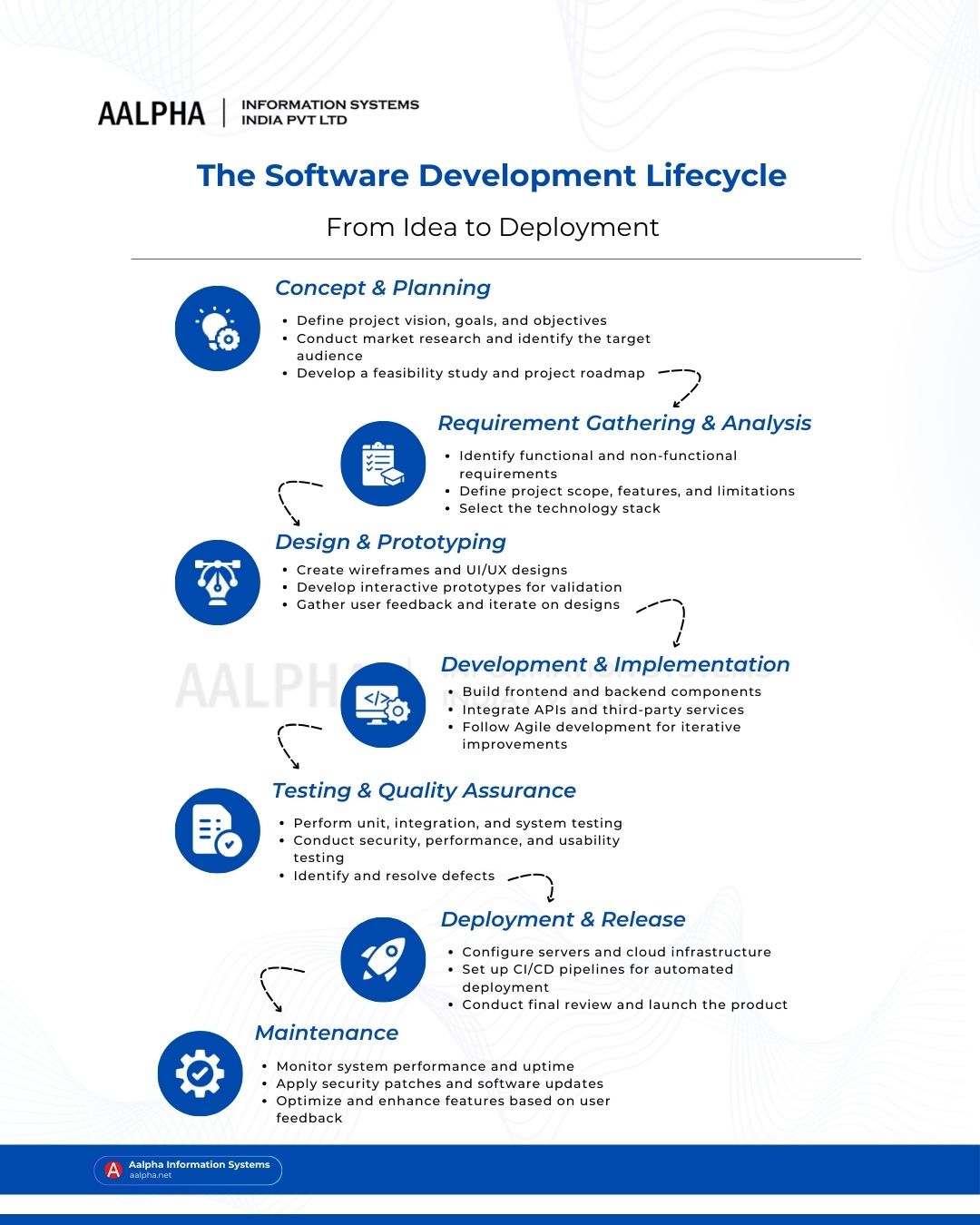

Software development life cycle phases

With the software development life cycle, software developers outline each task that, taken together, results in a finished software application. Following this approach reduces waste and improves efficiency across the entire development process. As the project progresses, both the client and the development team should monitor it to ensure it stays on the right path.

Each step of software development may be broken down further into smaller, manageable units. For instance, the planning stage can be split into roughly three subunits: market research, cost-benefit analysis, and technology research. While some steps divide further, others run together to make the development process a success. For instance, the development phase can run alongside the testing phase, with developers testing concurrently with development to catch errors at the development stage itself. With that in mind, the following are the 7 phases of the software development life cycle:

Step 1 : Planning

Planning is a crucial phase in which project managers and other key team members come together to evaluate and understand the project’s terms. This stage calls for a careful examination of material costs, estimation of labor costs, definition of the leadership structure, and creation of a plan with manageable goals, among other tasks. Stakeholders, who are the ones who benefit from the application, also get involved at this stage. It is also essential to gather feedback from potential customers, relevant experts, and developers. While planning, it is crucial to understand the project scope and the underlying reason for developing the software. This stage defines the project’s boundaries: the project should not stretch beyond its specified limits unless the scope is formally revised.

Step 2 : Requirements definition

It is important to establish the project requirements before moving to the development stage, because you cannot build software without understanding what it must do. At the requirements definition stage, you establish what the software or application is intended to do and then verify the conditions it must satisfy. For instance, if you want to develop a social media application, users must be able to reach out to others without friction, so you should define features and requirements that let them connect easily. This stage also involves identifying the resources the project will need.

Step 3 : Design and Prototyping

After defining the requirements, you design the application or prepare a prototype to better understand the project’s needs. In this phase, programmers and software developers create a working model of the proposed software. Key aspects at this stage include the architecture, which specifies essentials such as the programming language and development platforms; the user interface, which defines how customers interact with the software; and communication, security, and other technical concerns.

Prototyping may also feature in the design stage. It involves creating a prototype (a simplified early version of the intended software), typically within an iterative development model. With a prototype, users grasp the basic idea behind the intended application and give feedback on what needs improvement or optimization. The prototyping approach tends to lower development costs, because it is easier to change a prototype to fit user needs than to rewrite code to incorporate a change later in development.

Step 4 : Software development

Once the program’s design or prototype is understood, the next step is writing the actual program. One or a few developers may handle a simple project, while a complex project calls for many developers and for breaking the work into manageable pieces. Version control tools are crucial in this phase because they help developers track and manage changes to the source code. They also help keep different developers’ work compatible so that the project meets its target goals. This phase covers coding as well as fixing errors and glitches. Documentation is also important: developers rely on instructions and comments that explain how the software works, its limitations, and so on. Documentation can be formal or informal, depending on the development team.

Step 5 : Testing

Testing is a crucial phase for establishing whether the software runs as required. Much of the testing is usually automated. Testing also shows whether the software works correctly in different environments. Because some issues appear only in specific settings, developers should use simulated production environments for complex deployments. In this phase, developers verify that each software unit works properly and that the program’s parts work together without hangs or failures. Performance testing checks that the software stays responsive and stable under expected load.
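To illustrate the difference between checking a single unit and checking that parts work together, here is a minimal sketch using Python’s built-in `unittest` module. The functions `apply_discount` and `checkout_total` are hypothetical components invented for the example.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical unit under test: reduce a price by a percentage."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


def checkout_total(prices: list[float], percent: float) -> float:
    """Hypothetical second component that depends on apply_discount."""
    return round(sum(apply_discount(price, percent) for price in prices), 2)


class UnitTests(unittest.TestCase):
    """Each test exercises one unit in isolation."""

    def test_discount_applied(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_invalid_percent_rejected(self):
        with self.assertRaises(ValueError):
            apply_discount(100.0, 120)


class IntegrationTests(unittest.TestCase):
    """Checks that the two components produce the right result together."""

    def test_components_work_together(self):
        self.assertEqual(checkout_total([100.0, 50.0], 10), 135.0)
```

Saved as a file such as `test_checkout.py` (a hypothetical name), the tests run with `python -m unittest test_checkout`, which is also how a CI server would typically invoke them on each commit.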

Step 6 : Deployment

At this stage, the development team makes the software available to its intended users. Deployment can be carried out in many ways, one of which is automating the entire process. Automation matters here because it simplifies otherwise complex tasks.

Step 7 : Operations and Maintenance

Once the software is in use, the team needs to ensure it continues to serve users’ needs, which calls for ongoing maintenance. During use, customers may discover bugs that escaped testing and bug fixing. The development team should provide regular upgrades and continued maintenance.

Types of Software Development Life Cycle (SDLC) Models

The Software Development Life Cycle is not a single rigid methodology. It is a structured framework within which different development models operate. Each SDLC model defines how phases such as planning, design, development, testing, and deployment are organized and executed. The choice of model directly affects project risk, flexibility, documentation intensity, compliance alignment, and delivery speed.

Below are the most widely adopted SDLC models in modern software engineering.

1. Waterfall Model

The Waterfall Model is the earliest and most traditional SDLC model. It follows a strictly linear and sequential approach where each phase must be completed before the next one begins. There is no overlap between stages, and backward movement is limited.

In a Waterfall structure, the flow typically moves in this order: Requirements → Design → Development → Testing → Deployment → Maintenance.

The strength of this model lies in its predictability. Because requirements are defined in detail at the beginning, scope changes are minimized. Documentation is comprehensive, and approval checkpoints are clearly defined. This makes Waterfall particularly suitable for projects where requirements are stable and unlikely to change.

Best suited for:

- Government contracts

- Defense systems

- Enterprise compliance-driven applications

- Large infrastructure projects with fixed scope

For example, a government procurement system with predefined compliance specifications benefits from Waterfall because regulatory requirements must be locked before development begins. However, the model performs poorly in environments where user requirements evolve rapidly.

2. V-Model (Verification and Validation Model)

The V-Model extends the Waterfall approach by introducing parallel verification and validation activities. Each development phase has a corresponding testing phase. For example, system design aligns with system testing, and module design aligns with unit testing.
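The phase pairing can be expressed as a simple lookup table. In the Python sketch below, the system design/system testing and module design/unit testing pairs come from the description above; the requirements/acceptance testing and architecture/integration testing pairs follow the conventional V-Model layout, and exact phase names vary between organizations.

```python
# Left side of the "V" (development) mapped to the right-side phase that verifies it.
V_MODEL = {
    "requirements analysis": "acceptance testing",
    "system design": "system testing",
    "architecture design": "integration testing",
    "module design": "unit testing",
}


def verifying_phase(dev_phase: str) -> str:
    """Return the testing phase that verifies a given development phase."""
    return V_MODEL[dev_phase]


# Walk down the left side, through coding at the bottom, then back up the right side.
left_side = list(V_MODEL)
right_side = [V_MODEL[phase] for phase in reversed(left_side)]
print(" -> ".join(left_side + ["coding"] + right_side))
```

Because each pair is fixed up front, test plans for the right-hand phases can be drafted as soon as the matching left-hand phase is complete.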

The “V” shape visually represents how development activities on the left side correspond to testing activities on the right side.

This model emphasizes early test planning. Testing is not postponed until after coding; it is designed simultaneously with requirements and architecture.

Best suited for:

- Healthcare software systems

- Financial systems

- Aviation and embedded systems

- Safety-critical applications

In regulated industries such as healthcare, validation evidence is mandatory. A hospital management system, for instance, must demonstrate traceability between requirements and test cases. The V-Model supports this requirement through structured validation checkpoints.

3. Agile Model

The Agile Model is an iterative and incremental approach that prioritizes adaptability and continuous feedback. Instead of delivering the entire system at once, development occurs in short cycles known as sprints, typically lasting two to four weeks.

Each sprint includes planning, development, testing, and review phases. Feedback is incorporated immediately into subsequent iterations.

Agile emphasizes collaboration, customer involvement, and responsiveness to change. Documentation exists but is lighter compared to Waterfall.

Best suited for:

- SaaS startups

- Consumer applications

- eCommerce platforms

- AI-powered products

For example, a SaaS analytics platform may release core reporting features in Sprint 1, advanced filtering in Sprint 2, and performance improvements in Sprint 3. Continuous user feedback shapes product evolution without waiting for full project completion.

4. Iterative Model

The Iterative Model focuses on building a basic version of the system first and then refining it through repeated cycles. Each iteration improves functionality based on feedback and evaluation.

Unlike Agile, which emphasizes structured sprint ceremonies, the Iterative model is more flexible and does not necessarily follow strict sprint timelines.

The product evolves progressively. Each cycle includes requirement refinement, design adjustments, development, and testing.

Best suited for:

- Complex systems with unclear initial requirements

- R&D-heavy projects

- AI or machine learning systems

In AI development, for instance, models are trained, evaluated, adjusted, and retrained multiple times. The iterative approach supports experimentation and continuous refinement.

5. Spiral Model

The Spiral Model is a risk-driven development model combining iterative development with systematic risk analysis. Each cycle, or “spiral,” includes four main activities: planning, risk assessment, development, and evaluation.

Risk analysis is central to this model. Before progressing to the next spiral, potential technical, financial, or operational risks are identified and mitigated.
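Risk analysis is often done with a risk register that scores each risk by estimated probability and impact. The Python sketch below uses the common probability × impact heuristic; the 1 to 5 impact scale, the threshold, and the example risks are assumptions, and the Spiral Model itself does not prescribe a particular scoring formula.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    probability: float  # estimated likelihood, 0.0 to 1.0
    impact: int         # estimated severity, 1 (minor) to 5 (critical)

    @property
    def exposure(self) -> float:
        # Common scoring heuristic: exposure = probability x impact.
        return self.probability * self.impact


def risks_to_mitigate(register: list[Risk], threshold: float) -> list[Risk]:
    """Risks at or above the threshold, highest exposure first, to resolve before the next spiral."""
    flagged = [risk for risk in register if risk.exposure >= threshold]
    return sorted(flagged, key=lambda risk: risk.exposure, reverse=True)


# Hypothetical register for illustration only.
register = [
    Risk("Sensor hardware integration fails", probability=0.4, impact=5),
    Risk("Third-party library license change", probability=0.1, impact=2),
    Risk("Encryption module misses throughput target", probability=0.5, impact=3),
]
for risk in risks_to_mitigate(register, threshold=1.0):
    print(f"{risk.name}: exposure {risk.exposure:.1f}")
```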

Best suited for:

- Large enterprise systems

- Defense and aerospace software

- High-budget, high-risk R&D initiatives

For example, a defense-grade surveillance system involving hardware integration and cybersecurity risks benefits from Spiral because it prioritizes risk identification before scaling development.

6. DevOps Model

The DevOps Model extends SDLC into continuous delivery and operations. It emphasizes automation, collaboration between development and operations teams, and CI/CD pipelines.

The DevOps lifecycle is often represented as an infinity loop symbolizing continuous integration, testing, deployment, monitoring, and feedback.

Automation plays a critical role. Code changes trigger automated builds, automated testing, and automated deployments. Monitoring tools feed production insights back into development.

Best suited for:

- Cloud-native applications

- SaaS platforms with frequent releases

- Microservices architectures

- Enterprise systems requiring high uptime

A SaaS company deploying weekly updates relies on DevOps practices to maintain release stability while accelerating feature delivery.

7. Big Bang Model

The Big Bang Model involves minimal planning and limited documentation. Developers start coding based on a general understanding of requirements. There is little formal process or defined lifecycle governance.

This model carries significant risk. It may succeed in small experimental projects but often fails in large-scale or regulated environments.

Best suited for:

- Small proof-of-concept projects

- Academic research prototypes

- Internal experimental tools

Because of its unpredictability, the Big Bang approach is rarely recommended for enterprise software systems.

Choosing the Right SDLC Model

There is no universally “best” SDLC model. The correct choice depends on regulatory constraints, project complexity, stakeholder involvement, risk tolerance, and release frequency.

- A healthcare system requiring audit traceability benefits from V-Model.

- A fintech platform under regulatory oversight may combine Waterfall governance with DevSecOps automation.

- A SaaS startup aiming for rapid iteration typically adopts Agile with DevOps integration.

- A high-risk defense initiative may require Spiral for structured risk management.

Selecting the right SDLC model is a strategic decision that directly influences cost, compliance, scalability, and long-term maintainability.

Modern SDLC Practices: How the Software Development Lifecycle Has Evolved

The traditional Software Development Life Cycle was once characterized by long planning cycles, isolated development teams, delayed testing, and infrequent releases. That model is no longer sufficient for SaaS platforms, AI-driven systems, cloud-native applications, or enterprise digital transformation initiatives. Modern SDLC has evolved into a continuous, automated, security-aware, and data-driven lifecycle that integrates development, operations, security, and monitoring into a unified workflow.

Organizations that still treat SDLC as a linear sequence of documentation and delayed testing struggle to compete in environments where releases occur weekly or even daily. The modern SDLC integrates automation, infrastructure abstraction, real-time monitoring, and AI-assisted engineering to deliver reliable software at scale.

Below are the core practices defining contemporary SDLC frameworks.

-

CI/CD Pipelines: Continuous Integration and Continuous Delivery

Continuous Integration and Continuous Delivery have transformed how software moves from development to production. Instead of manually packaging and deploying releases, CI/CD pipelines automate build validation, testing, artifact creation, and deployment.

In a CI workflow, developers frequently merge code into a shared repository. Each commit triggers automated builds and test suites. This reduces integration conflicts and identifies defects early. Continuous Delivery extends this by automatically preparing code for deployment, ensuring that production-ready artifacts are always available.
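The commit-triggered flow can be sketched as an ordered list of stages that stops at the first failure. The Python below is a minimal stand-in for a CI server, assuming hypothetical stage commands (placeholder `python -c` calls that only print a message); real pipelines declare their stages in their CI system’s own configuration format.

```python
import subprocess
import sys

# Hypothetical stages; each command is a placeholder that just prints a message.
STAGES = [
    ("build", [sys.executable, "-c", "print('compiling')"]),
    ("unit-tests", [sys.executable, "-c", "print('running tests')"]),
    ("package-artifact", [sys.executable, "-c", "print('packaging')"]),
]


def run_pipeline(stages: list[tuple[str, list[str]]]) -> bool:
    """Run stages in order and stop at the first failure, as a CI server does on each commit."""
    for name, command in stages:
        result = subprocess.run(command, capture_output=True, text=True)
        if result.returncode != 0:
            print(f"stage '{name}' failed (exit code {result.returncode})")
            return False
        print(f"stage '{name}' passed")
    return True


pipeline_ok = run_pipeline(STAGES)
```

Failing fast keeps feedback quick: a broken build never reaches the slower test or packaging stages.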

For SaaS platforms, CI/CD enables rapid iteration without compromising stability. A subscription-based analytics platform, for example, may deploy weekly feature enhancements. Automated pipelines validate changes, run regression tests, and deploy to staging environments before promotion to production. This reduces release risk while accelerating time to market.

Enterprise systems increasingly rely on pipeline governance with approval gates, automated compliance checks, and rollback mechanisms to ensure stability across complex environments.
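Approval gates and rollback can be reduced to a small decision function. In the sketch below, the `Release` fields, the manual-approval rule, and the `healthy_after_deploy` callback are hypothetical; real pipelines implement these checks with their CI/CD platform’s own gate and deployment features.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Release:
    """Hypothetical release candidate produced by a pipeline."""
    version: str
    tests_passed: bool
    compliance_checks_passed: bool
    approved_by: Optional[str] = None  # set when a release manager signs off


def gate(release: Release) -> list[str]:
    """Return the reasons a release is blocked; an empty list means it may be promoted."""
    reasons = []
    if not release.tests_passed:
        reasons.append("automated tests failed")
    if not release.compliance_checks_passed:
        reasons.append("compliance checks failed")
    if release.approved_by is None:
        reasons.append("missing manual approval")
    return reasons


def promote(release: Release, current: str, healthy_after_deploy: Callable[[str], bool]) -> str:
    """Return the version that should be live after attempting to promote `release`."""
    blocked = gate(release)
    if blocked:
        print(f"{release.version} blocked: {', '.join(blocked)}")
        return current
    if not healthy_after_deploy(release.version):
        # Post-deploy health check failed: roll back to the previous version.
        print(f"{release.version} failed health checks; rolling back to {current}")
        return current
    return release.version


candidate = Release("2.4.0", tests_passed=True, compliance_checks_passed=True, approved_by="release-manager")
print(promote(candidate, current="2.3.1", healthy_after_deploy=lambda version: True))  # 2.4.0
```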

-

Infrastructure as Code (IaC)

Infrastructure is no longer provisioned manually. Infrastructure as Code allows organizations to define servers, networks, containers, and cloud resources using declarative configuration files.

Using tools such as Terraform, AWS CloudFormation, or similar platforms, teams can version-control infrastructure configurations just like application code. This ensures environment consistency across development, staging, and production.

In modern SDLC, infrastructure provisioning becomes part of the lifecycle. Environments are automatically created, tested, and destroyed as needed. This eliminates configuration drift and reduces deployment inconsistencies.
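Declarative IaC tools work by comparing the desired state in configuration files with the actual state of the environment and computing the changes needed to converge; Terraform exposes this as its `plan` step. The Python sketch below illustrates only that comparison idea with hypothetical resource definitions; it does not use any real provider API.

```python
def plan(desired: dict[str, dict], actual: dict[str, dict]) -> dict[str, list[str]]:
    """Compare declared resources with what exists and list the changes needed to converge."""
    to_create = sorted(desired.keys() - actual.keys())
    to_delete = sorted(actual.keys() - desired.keys())
    to_update = sorted(
        name for name in desired.keys() & actual.keys() if desired[name] != actual[name]
    )
    return {"create": to_create, "update": to_update, "delete": to_delete}


# Hypothetical resources for illustration only.
desired = {
    "web-server": {"type": "vm", "size": "medium", "region": "eu-west"},
    "app-db": {"type": "database", "size": "large", "region": "eu-west"},
}
actual = {
    "web-server": {"type": "vm", "size": "small", "region": "eu-west"},    # drifted: resized by hand
    "old-cache": {"type": "cache", "size": "small", "region": "eu-west"},  # no longer declared
}
print(plan(desired, actual))
```

Running the same comparison against every environment is what detects configuration drift: any manual change shows up as an "update" until the declared state is reapplied.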

For example, an enterprise SaaS provider deploying microservices across multiple regions benefits from Infrastructure as Code by ensuring consistent resource configuration globally. It also improves disaster recovery readiness because infrastructure definitions are reproducible.

-

DevSecOps: Security Integrated into the Lifecycle

Traditional SDLC often treated security as a final checkpoint before deployment. Modern SDLC integrates security at every stage through DevSecOps practices.

Security testing now includes:

- Static Application Security Testing during development

- Dynamic security testing in staging environments

- Automated vulnerability scanning in CI pipelines

- Dependency scanning for third-party libraries

- Container security validation
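Dependency scanning boils down to comparing pinned versions against known advisories. The Python sketch below assumes a hypothetical, hard-coded advisory table and simple dotted numeric versions (no pre-release tags); real scanners pull advisories from vulnerability databases and handle full version semantics.

```python
def parse_version(version: str) -> tuple[int, ...]:
    """Turn '2.31.0' into (2, 31, 0) so versions compare numerically, not as strings."""
    return tuple(int(part) for part in version.split("."))


# Hypothetical advisory data: package -> (first fixed version, advisory id).
ADVISORIES = {
    "examplelib": ("2.4.1", "ADV-0001"),
    "fastparse": ("1.0.3", "ADV-0002"),
}


def scan(dependencies: dict[str, str]) -> list[str]:
    """Return findings for pinned dependencies older than the first fixed version."""
    findings = []
    for name, pinned in sorted(dependencies.items()):
        if name in ADVISORIES:
            fixed, advisory = ADVISORIES[name]
            if parse_version(pinned) < parse_version(fixed):
                findings.append(f"{name} {pinned} is vulnerable ({advisory}); upgrade to >= {fixed}")
    return findings


findings = scan({"examplelib": "2.3.9", "fastparse": "1.0.3", "otherlib": "0.9.0"})
for line in findings:
    print(line)
```

A CI step would typically fail the build whenever `scan` returns any findings, so vulnerable libraries never reach production.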

In fintech systems handling payment transactions, security validation must occur continuously. Automated scanning identifies vulnerable libraries before they reach production. Compliance requirements such as PCI-DSS or SOC 2 increasingly demand documented security validation within the development lifecycle.

DevSecOps reduces the likelihood of costly post-deployment breaches and strengthens regulatory compliance posture.

-

Automated Testing and Quality Engineering

Testing has evolved from manual end-stage validation to continuous automated verification. Modern SDLC includes multiple automated testing layers:

- Unit testing for individual components

- Integration testing for service communication

- Regression testing for release stability

- Performance testing for scalability

- Security testing for vulnerability detection

Automation frameworks allow thousands of test cases to execute in minutes. This supports frequent releases without sacrificing reliability.

In enterprise systems, automated testing is essential for maintaining stability across large codebases. For SaaS applications with active user bases, automated regression testing ensures that new feature deployments do not disrupt existing workflows.
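One simple form of automated regression testing compares the current output of an existing workflow with baseline outputs recorded from the last known-good release (sometimes called golden-master testing). In the Python sketch below, `render_invoice` and the baseline data are hypothetical; any case whose output changes is reported as a potential regression.

```python
def render_invoice(items: list[tuple[str, float]]) -> str:
    """Hypothetical existing workflow whose output must not change between releases."""
    total = sum(price for _, price in items)
    lines = [f"{name}: {price:.2f}" for name, price in items]
    return "\n".join(lines + [f"TOTAL: {total:.2f}"])


# Baseline outputs captured from the last known-good release (hypothetical data).
BASELINE = {
    "two-items": "widget: 9.99\ngadget: 5.01\nTOTAL: 15.00",
    "empty": "TOTAL: 0.00",
}
CASES = {
    "two-items": [("widget", 9.99), ("gadget", 5.01)],
    "empty": [],
}


def regressions() -> list[str]:
    """Names of cases whose current output differs from the recorded baseline."""
    return [name for name, items in CASES.items() if render_invoice(items) != BASELINE[name]]


print(regressions() or "no regressions")
```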

-

Continuous Monitoring and Observability

Deployment is no longer the end of the lifecycle. Modern SDLC includes real-time monitoring and observability as continuous feedback mechanisms.

Monitoring tools track:

- Application performance

- Error rates

- Latency metrics

- Infrastructure utilization

- Security incidents

Observability platforms aggregate logs, traces, and metrics to identify system bottlenecks or anomalies. This operational data feeds directly back into development planning.

For example, a cloud-based CRM platform may detect increased response latency under peak load. Monitoring insights inform architectural improvements in subsequent development cycles. This data-driven feedback loop defines the modern lifecycle.
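Latency and error-rate signals often feed simple threshold alerts. The Python sketch below computes a nearest-rank 95th-percentile latency and a 5xx error rate from a batch of request samples; the 300 ms and 1% limits are assumed values for illustration, and real observability platforms compute these metrics over sliding time windows.

```python
import math


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def check_health(latencies_ms: list[float], statuses: list[int],
                 p95_limit_ms: float = 300.0, error_rate_limit: float = 0.01) -> list[str]:
    """Return alert messages when p95 latency or the 5xx error rate exceeds its limit."""
    alerts = []
    p95 = percentile(latencies_ms, 95)
    if p95 > p95_limit_ms:
        alerts.append(f"p95 latency {p95:.0f} ms exceeds {p95_limit_ms:.0f} ms")
    error_rate = sum(1 for status in statuses if status >= 500) / len(statuses)
    if error_rate > error_rate_limit:
        alerts.append(f"error rate {error_rate:.1%} exceeds {error_rate_limit:.1%}")
    return alerts


# Hypothetical peak-load sample: 10% of requests are slow and 2% return HTTP 503.
latencies = [120.0] * 90 + [450.0] * 10
statuses = [200] * 98 + [503] * 2
for alert in check_health(latencies, statuses):
    print(alert)
```

Percentiles are used rather than averages because a small share of slow requests, which is exactly what users notice under peak load, barely moves the mean.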

-

Shift-Left Testing

Shift-left testing moves quality assurance earlier in the lifecycle. Instead of waiting until after development is complete, testing activities begin during requirements and design phases.

This includes:

- Requirement validation workshops

- Design reviews with QA involvement

- Early prototype testing

- Automated unit testing integrated into development workflows

By detecting issues early, organizations significantly reduce defect resolution costs. In regulated industries such as healthcare, early validation ensures compliance alignment before extensive development investment occurs.

Shift-left principles reinforce the concept that quality is engineered, not inspected.

-

AI-Assisted Development

Artificial Intelligence is increasingly embedded in modern SDLC workflows. AI-powered tools assist developers in code generation, error detection, vulnerability identification, and documentation.

AI-assisted development improves productivity while reducing repetitive coding tasks. However, governance remains critical. Enterprises must validate AI-generated code for security and performance standards.

In AI-driven systems themselves, the lifecycle extends to include model training, evaluation, monitoring, and retraining processes. This introduces concepts such as MLOps, where machine learning pipelines operate alongside application deployment pipelines.

Organizations building AI products must manage not only code releases but also model lifecycle management, bias monitoring, and dataset validation.

-

Cloud-Native Development Lifecycle

Modern SDLC increasingly operates in cloud-native environments. Applications are built using containerized architectures, microservices, and managed cloud services.

Cloud-native lifecycle characteristics include:

- Container orchestration

- Horizontal scalability

- Service mesh communication

- API-driven integration

- Distributed system monitoring

Cloud-native systems require enhanced observability and automation because components operate independently. Release strategies such as blue-green deployment and canary releases allow controlled rollouts with minimal downtime.
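A canary release routes a small, stable share of users to the new version and widens that share step by step. The Python sketch below assigns users to the canary cohort by hashing their (hypothetical) user IDs into 100 buckets, so a given user keeps seeing the same version and anyone in the cohort at 10% stays in it at 50%. Blue-green deployment, by contrast, switches all traffic between two complete environments at once.

```python
import hashlib


def in_canary(user_id: str, canary_percent: int) -> bool:
    """Deterministically place a user in one of 100 buckets; low buckets get the canary."""
    bucket = int(hashlib.sha256(user_id.encode()).hexdigest(), 16) % 100
    return bucket < canary_percent


def route(user_id: str, canary_percent: int) -> str:
    """Pick the (hypothetical) backend version that serves this user."""
    return "v2-canary" if in_canary(user_id, canary_percent) else "v1-stable"


# Widen the rollout in steps; a real system would pause between steps and check monitoring.
users = [f"user-{i}" for i in range(10_000)]
for percent in (1, 10, 50, 100):
    share = sum(route(user, percent) == "v2-canary" for user in users) / len(users)
    print(f"{percent:>3}% target -> {share:.1%} of users on canary")
```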

For SaaS platforms serving global users, cloud-native SDLC ensures scalability, resilience, and rapid recovery from failures.
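The sketch below illustrates the two mechanics behind a canary release: deterministically routing a small share of users to the new version, and deciding whether to promote or roll back by comparing error rates. All names and thresholds (`min_requests`, `max_ratio`, `abs_tolerance`) are hypothetical. In practice this logic usually lives in a service mesh, load balancer, or deployment controller rather than in application code.

```python
import hashlib
from dataclasses import dataclass


def routes_to_canary(user_id: str, canary_percent: int) -> bool:
    """Hash the user ID into a stable bucket 0-99 so each user consistently sees one version."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100
    return bucket < canary_percent


@dataclass
class ReleaseStats:
    requests: int
    errors: int

    @property
    def error_rate(self) -> float:
        return self.errors / self.requests if self.requests else 0.0


def canary_decision(
    baseline: ReleaseStats,
    canary: ReleaseStats,
    min_requests: int = 500,
    max_ratio: float = 1.5,
    abs_tolerance: float = 0.001,
) -> str:
    """Return 'wait', 'promote', or 'rollback' for the current canary step."""
    if canary.requests < min_requests:
        return "wait"  # not enough canary traffic to judge yet
    # Allow some relative and absolute slack so a near-zero baseline
    # does not turn a single error into an automatic rollback.
    allowed = baseline.error_rate * max_ratio + abs_tolerance
    return "rollback" if canary.error_rate > allowed else "promote"
```

In a real rollout, "promote" would typically raise `canary_percent` in steps (for example 5, then 25, then 100) rather than switching all traffic at once, repeating the comparison at each step.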

How SDLC Has Evolved Across Industries

- SaaS Systems

SaaS organizations prioritize rapid iteration, continuous delivery, and uptime reliability. Agile methodologies combined with DevOps automation dominate. Monitoring and automated testing keep frequent releases stable.

- AI and Machine Learning Platforms

AI systems extend SDLC into model lifecycle management. Data validation, training pipelines, inference monitoring, and bias auditing become part of the development process. Iterative experimentation and controlled retraining cycles are essential.

- Enterprise Systems

Enterprises combine structured governance with automation. Compliance obligations demand documentation and traceability, while CI/CD keeps delivery speed competitive. Hybrid SDLC models blending Agile, DevOps, and compliance oversight are common.

Strategic Impact of Modern SDLC

Modern SDLC is no longer a documentation-heavy sequence of stages. It is an integrated ecosystem combining development, security, operations, automation, and monitoring. Organizations that adopt modern lifecycle practices achieve:

- Faster release cycles

- Improved product reliability

- Enhanced compliance readiness

- Lower long-term maintenance costs

- Greater scalability and operational resilience

For technology partners delivering enterprise-grade solutions, demonstrating mastery of modern SDLC practices is essential. It signals technical maturity, governance discipline, and the ability to build scalable, secure, and future-ready software systems aligned with contemporary engineering standards.

SDLC Best Practices for Building Reliable, Scalable Software

A well-defined Software Development Life Cycle provides structure, but structure alone does not guarantee success. Execution quality determines outcomes. Organizations that consistently deliver secure, scalable, and high-performing software follow disciplined SDLC best practices across every phase. These practices strengthen governance, reduce rework, and improve long-term maintainability.

Below are the core best practices that define mature software engineering environments.

-

Early Stakeholder Involvement

Successful SDLC execution begins with early and continuous stakeholder engagement. Stakeholders include business sponsors, product owners, end users, compliance officers, architects, and operations teams. Their involvement during planning and requirement definition ensures that business objectives, regulatory constraints, and operational realities are clearly understood before development begins.

When stakeholders are engaged late in the lifecycle, requirement misalignment often surfaces during testing or post-deployment. This results in rework, delayed timelines, and budget overruns. Early involvement enables validation workshops, use-case mapping sessions, and risk identification exercises before code is written.

For example, in a healthcare software system, compliance teams must confirm data retention and audit logging requirements during the requirement phase, not after deployment. In SaaS platforms, early user feedback during prototyping prevents feature misalignment and increases adoption rates.

Stakeholder alignment reduces ambiguity and ensures shared ownership of outcomes.

-

Clear and Structured Documentation

Documentation is a governance tool, not merely an administrative formality. Clear documentation enhances traceability, onboarding efficiency, regulatory readiness, and long-term maintainability.

Mature SDLC environments maintain structured artifacts such as:

- Business Requirement Documents

- Software Requirement Specifications

- High-Level and Low-Level Design Documents

- API specifications

- Test plans and test cases

- Deployment procedures

- Maintenance logs

Well-maintained documentation allows teams to perform impact analysis when changes occur. It also ensures continuity when team members transition. In regulated industries such as fintech or healthcare, documentation is essential for compliance audits.

Clear documentation reduces an organization's dependence on individual team members and turns software projects into controlled, repeatable processes.
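Impact analysis becomes mechanical once requirements are linked to the artifacts that implement and verify them. The following sketch models a minimal requirements traceability matrix. The class names and the identifier style (such as `REQ-001`, `HLD-3.2`, `TC-101`) are illustrative assumptions, not a prescribed format.

```python
from dataclasses import dataclass, field


@dataclass
class TraceEntry:
    requirement_id: str
    design_refs: list[str] = field(default_factory=list)
    test_case_ids: list[str] = field(default_factory=list)


class TraceabilityMatrix:
    """Links each requirement to the design sections and test cases that cover it."""

    def __init__(self) -> None:
        self._entries: dict[str, TraceEntry] = {}

    def add(self, entry: TraceEntry) -> None:
        self._entries[entry.requirement_id] = entry

    def impact_of_change(self, requirement_id: str) -> dict[str, list[str]]:
        """Design sections and tests to revisit when a requirement changes."""
        entry = self._entries[requirement_id]
        return {"design": sorted(entry.design_refs), "tests": sorted(entry.test_case_ids)}

    def requirements_touching(self, design_ref: str) -> list[str]:
        """Requirements affected when a design section changes."""
        return sorted(r for r, e in self._entries.items() if design_ref in e.design_refs)

    def untested_requirements(self) -> list[str]:
        """Requirements with no linked test case: the gaps an auditor would flag."""
        return sorted(r for r, e in self._entries.items() if not e.test_case_ids)
```

For example, after registering `REQ-001` (design `HLD-3.2`, tests `TC-101` and `TC-102`) and `REQ-002` (design `HLD-3.2` only), a change to `HLD-3.2` points to both requirements, and `REQ-002` is reported as untested.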

-

Continuous Testing and Quality Engineering

Testing must be continuous and integrated into every development stage. Mature SDLC practices embed automated testing into development workflows to prevent defect accumulation.

Continuous testing includes:

- Unit testing during coding

- Integration testing for service interactions

- Regression testing before each release

- Performance testing under simulated load

- Security validation checks

By identifying issues early, organizations reduce defect resolution costs and avoid production instability. In cloud-native SaaS systems, automated regression testing ensures that frequent releases do not disrupt user workflows.

Quality engineering is proactive. It focuses on preventing defects rather than detecting them late in the lifecycle.
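A minimal sketch of how automated tests can act as a release gate: stages run in order, cheapest first, and the first failure blocks promotion so defects do not accumulate. The stage names and the `run_quality_gate` helper are hypothetical; CI systems usually provide this behavior natively through pipeline stages and required checks.

```python
from typing import Callable

# A stage is a name plus a check that returns True when it passes.
Stage = tuple[str, Callable[[], bool]]


def run_quality_gate(stages: list[Stage]) -> tuple[bool, list[str]]:
    """Run stages in order and stop at the first failure (fail-fast).

    Ordering cheap checks (unit tests) before expensive ones (performance runs)
    gives developers the fastest possible feedback.
    """
    log: list[str] = []
    for name, check in stages:
        passed = check()
        log.append(f"{name}: {'pass' if passed else 'FAIL'}")
        if not passed:
            return False, log
    return True, log


if __name__ == "__main__":
    # Hypothetical placeholders; real checks would invoke the project's test suites.
    pipeline: list[Stage] = [
        ("unit", lambda: True),
        ("integration", lambda: True),
        ("regression", lambda: True),
        ("performance", lambda: True),
        ("security-scan", lambda: True),
    ]
    ok, report = run_quality_gate(pipeline)
    print("release allowed" if ok else "release blocked", report)
```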

-

Version Control Discipline

Version control is foundational to collaborative development. A disciplined version control strategy ensures traceability, accountability, and controlled release management.

Best practices include:

- Using distributed version control systems

- Implementing structured branching strategies

- Enforcing peer code reviews

- Maintaining descriptive commit histories

- Protecting production branches with approval workflows

Version control discipline supports auditability and rollback capability. In enterprise environments, it allows teams to track who made changes, when they were introduced, and why they were implemented.

Without strong version control governance, collaboration becomes chaotic and risk increases significantly.
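Descriptive commit histories are easier to maintain when the convention is checked automatically. The validator below assumes a team has adopted a header format modeled on the Conventional Commits specification; the list of allowed types and the 72-character header limit are assumed team choices, not requirements of any tool.

```python
import re

# Assumed team convention, modeled on the Conventional Commits header format:
#   <type>(<optional scope>)<optional !>: <description>
ALLOWED_TYPES = ("feat", "fix", "docs", "refactor", "test", "chore", "perf", "ci", "build")
HEADER_PATTERN = re.compile(
    r"^(?P<type>" + "|".join(ALLOWED_TYPES) + r")"
    r"(?:\((?P<scope>[a-z0-9-]+)\))?"
    r"(?P<breaking>!)?"
    r": (?P<description>\S.*)$"
)
MAX_HEADER_LENGTH = 72


def validate_commit_message(message: str) -> list[str]:
    """Return a list of problems; an empty list means the message is acceptable."""
    problems = []
    lines = message.splitlines()
    header = lines[0] if lines else ""
    if not HEADER_PATTERN.match(header):
        problems.append("header must look like 'type(scope): description'")
    if len(header) > MAX_HEADER_LENGTH:
        problems.append(f"header longer than {MAX_HEADER_LENGTH} characters")
    if len(lines) > 1 and lines[1].strip():
        problems.append("leave a blank line between header and body")
    return problems
```

A check like this is typically wired into a Git `commit-msg` hook (which receives the path of the commit message file as its first argument) or into CI, so non-conforming messages are caught before they reach a protected branch.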

-

Formal Risk Assessment

Risk management must be systematic rather than reactive. Each SDLC phase should include structured risk evaluation covering technical, operational, financial, and compliance risks.

Effective risk assessment includes:

- Feasibility studies during planning

- Architectural risk analysis during design

- Security risk evaluation during development

- Operational risk reviews before deployment

For example, integrating a third-party payment processor introduces dependency risks. Evaluating uptime guarantees, API stability, and data protection controls during planning mitigates potential disruption.

In large enterprise or defense projects, risk registers and mitigation strategies are maintained throughout the lifecycle. This structured approach improves predictability and reduces unexpected failures.
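A risk register can be as simple as a structured list scored by likelihood and impact. The sketch below uses a 3-point scale, a score of likelihood multiplied by impact, and a review threshold of 6; all three are assumptions, since many organizations use 5-point scales or weighted scoring. The payment-processor entry mirrors the example above, and the other entries are hypothetical.

```python
from dataclasses import dataclass
from enum import IntEnum


class Level(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class Risk:
    risk_id: str
    description: str
    phase: str  # SDLC phase where the risk was identified
    likelihood: Level
    impact: Level
    mitigation: str
    owner: str

    @property
    def score(self) -> int:
        """Simple likelihood x impact score (1-9 on this assumed scale)."""
        return int(self.likelihood) * int(self.impact)


def prioritized(register: list[Risk], review_threshold: int = 6) -> list[Risk]:
    """Risks at or above the threshold, highest score first, for the next review."""
    return sorted(
        (r for r in register if r.score >= review_threshold),
        key=lambda r: r.score,
        reverse=True,
    )


register = [
    Risk("R-01", "Payment processor API outage", "planning",
         Level.MEDIUM, Level.HIGH, "Fallback processor; review uptime SLA", "Platform lead"),
    Risk("R-02", "UI library deprecation", "development",
         Level.LOW, Level.LOW, "Track upstream releases", "Frontend lead"),
    Risk("R-03", "Unencrypted personal data in logs", "development",
         Level.HIGH, Level.HIGH, "Add log redaction filter", "Security lead"),
]
```

Re-scoring entries at each phase gate keeps the register a living document rather than a one-time planning artifact.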

-

Security-First Design

Security cannot be appended at the end of development. It must be embedded into system architecture and coding practices from the outset.

Security-first SDLC includes:

- Threat modeling during design

- Secure coding standards

- Encryption planning for data at rest and in transit

- Identity and access control architecture

- Automated vulnerability scanning

- Compliance alignment validation

For fintech platforms handling sensitive financial transactions, secure design ensures encryption protocols and identity verification systems are built into the architecture. For healthcare systems managing patient records, access logging and audit traceability must be engineered during design, not retrofitted later.

Security-first thinking reduces breach risks and strengthens regulatory compliance posture.
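Audit traceability is easier to guarantee when every access decision passes through a single code path that also writes the log. The sketch below pairs a role-based permission check with an append-only audit log in which each entry stores the SHA-256 hash of the previous entry, so later edits to any entry become detectable. The roles, permission strings, and class names are hypothetical; a production system would also persist the log to tamper-resistant storage and restrict who can read it.

```python
import hashlib
import json
from datetime import datetime, timezone

# Hypothetical role-to-permission mapping decided during design.
ROLE_PERMISSIONS = {
    "physician": {"record:read", "record:write"},
    "billing": {"invoice:read"},
}
GENESIS_HASH = "0" * 64


def _entry_hash(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log; each entry records the hash of the entry before it."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, actor: str, action: str, resource: str, allowed: bool) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "resource": resource,
            "allowed": allowed,
            "prev_hash": prev_hash,
        }
        body["hash"] = _entry_hash(body)  # hash covers every field above
        self.entries.append(body)

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or entry["hash"] != _entry_hash(body):
                return False
            prev_hash = entry["hash"]
        return True


def access(log: AuditLog, actor: str, role: str, action: str, resource: str) -> bool:
    """Check permission and log the attempt, whether it is allowed or denied."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    log.append(actor, action, resource, allowed)
    return allowed
```

Logging denied attempts as well as successful ones is a deliberate choice here: denials are often the most useful records during a security investigation or compliance audit.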

-

Performance Benchmarking and Scalability Planning

Performance must be measured and validated against defined benchmarks. Modern applications operate in distributed cloud environments where performance bottlenecks can significantly impact user experience and revenue.

Performance benchmarking includes:

- Load testing under expected peak conditions

- Stress testing beyond anticipated limits

- Latency measurement across services

- Database query optimization

- Infrastructure capacity planning

For example, an eCommerce platform expecting seasonal traffic spikes must validate scalability before major sales events. Without benchmarking, sudden traffic surges can lead to downtime and lost revenue.

Scalability planning ensures systems remain stable as user bases grow. Incorporating benchmarking into SDLC transforms performance into a measurable engineering objective rather than a reactive fix.
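Turning performance into a measurable objective usually means agreeing on percentile latency budgets and checking load-test results against them. The sketch below computes percentiles with the nearest-rank method and reports any budget violations. The values in `LATENCY_BUDGET_MS` are hypothetical placeholders for targets a team would set as non-functional requirements.

```python
import math

# Hypothetical latency budget (percentile -> maximum milliseconds).
LATENCY_BUDGET_MS = {50: 120.0, 95: 300.0, 99: 800.0}


def percentile(samples_ms: list[float], p: int) -> float:
    """Nearest-rank percentile: the smallest sample with at least p% of values at or below it."""
    if not samples_ms:
        raise ValueError("no samples")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(samples_ms)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[rank - 1]


def check_latency_budget(
    samples_ms: list[float], budget: dict[int, float] = LATENCY_BUDGET_MS
) -> dict[int, float]:
    """Return {percentile: observed_ms} for every percentile that exceeds its budget."""
    violations = {}
    for p, limit in budget.items():
        observed = percentile(samples_ms, p)
        if observed > limit:
            violations[p] = observed
    return violations
```

Tail percentiles matter because averages hide them: a run where 90% of requests take 100 ms and 10% take 900 ms has a median of 100 ms but violates both the p95 and p99 budgets above.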

Integrating Best Practices Across the Lifecycle

These best practices are not isolated activities. They reinforce each other across the lifecycle:

- Early stakeholder involvement improves requirement clarity.

- Clear documentation enhances traceability and compliance readiness.

- Continuous testing strengthens release reliability.

- Version control discipline ensures accountability.

- Risk assessment improves predictability.

- Security-first design protects data integrity.

- Performance benchmarking ensures scalability.

Organizations that institutionalize these practices create a repeatable delivery framework capable of supporting enterprise digital transformation, SaaS product scaling, and AI-driven innovation.

A mature SDLC is not defined by phases alone. It is defined by disciplined execution, measurable governance, and continuous improvement grounded in engineering rigor.

Conclusion

Digital transformation is not simply about adopting new technologies. It is about building reliable, scalable, secure software systems that support evolving business models, regulatory environments, and customer expectations. A structured Software Development Life Cycle is the foundation that enables this transformation to succeed.

Organizations undergoing cloud migration, building AI-powered platforms, modernizing legacy systems, or launching SaaS products cannot rely on unstructured development practices. Without lifecycle governance, digital initiatives often suffer from scope drift, security gaps, unstable releases, and escalating maintenance costs. A disciplined SDLC ensures that transformation efforts are predictable, compliant, and strategically aligned with business objectives.

Structured SDLC matters because it:

- Reduces operational and financial risk

- Aligns technology initiatives with measurable business goals

- Embeds security and compliance into system design

- Enables scalable architecture for future growth

- Supports continuous innovation without sacrificing stability

In enterprise environments, digital systems frequently integrate multiple services, cloud infrastructures, APIs, data pipelines, and security layers. Coordinating these components requires more than coding expertise. It requires governance discipline, architectural foresight, and process maturity. A well-executed SDLC transforms complexity into a controlled, measurable development framework.

The Role of an Experienced Engineering Partner

While many organizations understand the importance of SDLC, effective implementation demands experience. Lifecycle governance must balance structure with adaptability. It must combine documentation rigor with agile responsiveness. It must integrate security, performance validation, CI/CD automation, and stakeholder oversight into a unified delivery model.

Enterprises need engineering partners who understand how to tailor SDLC frameworks based on industry requirements, compliance obligations, and business scale. Healthcare systems require audit traceability and validation alignment. Fintech platforms demand secure transaction processing and regulatory documentation. SaaS businesses require rapid iteration supported by DevOps automation and release governance.

An experienced software development company brings:

- Proven lifecycle governance frameworks

- Mature documentation and traceability standards

- Integrated DevSecOps practices

- Structured risk assessment methodologies

- Scalable cloud-native architecture expertise

- Continuous testing and monitoring integration

These capabilities are not incidental; they are the result of disciplined process design and real-world implementation across industries.

Aalpha’s Structured SDLC Approach

As an experienced software development company, Aalpha Information Systems applies structured SDLC governance across every engagement. Projects are initiated with detailed requirement analysis and feasibility validation. Architecture design incorporates security-first principles and scalability planning. Development is supported by version control discipline, CI/CD automation, and continuous testing practices. Deployment and post-release monitoring ensure operational stability and long-term maintainability.

This structured approach allows Aalpha to support enterprise digital transformation initiatives with predictability and technical depth. Whether building complex enterprise systems, modern SaaS platforms, or AI-enabled applications, lifecycle governance ensures that each solution is secure, scalable, compliant, and aligned with measurable business outcomes.

In a technology landscape defined by rapid change, disciplined SDLC execution remains the anchor that enables innovation without compromising reliability. Organizations seeking to modernize their digital infrastructure benefit from partnering with engineering teams that combine technical expertise with structured lifecycle governance.

To discuss how a structured SDLC framework can support your next software initiative, contact Aalpha Information Systems and explore a development approach grounded in engineering rigor and enterprise-grade delivery standards.