Artificial intelligence is no longer limited to modern cloud-native platforms or newly built enterprise applications. Organizations across industries are increasingly integrating AI into legacy systems to improve operational efficiency, automate repetitive processes, strengthen decision-making, and remain competitive in rapidly changing markets. Many enterprises still rely on decades-old software systems that continue to handle mission-critical operations such as financial transactions, inventory management, manufacturing workflows, patient records, and logistics coordination. Replacing these systems entirely is often expensive, risky, and operationally disruptive. As a result, businesses are looking for practical ways to introduce AI capabilities without rebuilding their entire technology infrastructure from scratch.

The demand for AI-powered modernization has grown significantly as enterprises face increasing pressure to reduce operational costs, improve customer experiences, and respond faster to market changes. AI technologies such as machine learning, natural language processing, predictive analytics, and generative AI can now be layered on top of existing systems through APIs, middleware, integration platforms, and hybrid architectures. This approach allows companies to modernize gradually while protecting previous technology investments.

From banks using AI-driven fraud detection on top of legacy core banking platforms to manufacturers deploying predictive maintenance models connected to older industrial systems, AI integration is becoming a strategic modernization path rather than a complete replacement initiative. Businesses that successfully integrate AI into legacy environments can extend the lifespan of their infrastructure, unlock valuable data insights, and create more scalable digital operations without causing major disruptions to ongoing business activities.

What Are Legacy Systems?

Legacy systems are older software applications, hardware infrastructures, or enterprise platforms that organizations continue using despite newer technologies being available. These systems often support core business functions and contain years or even decades of operational data, workflows, and integrations that businesses rely on daily. Legacy software may include mainframe applications, monolithic enterprise systems, outdated ERP platforms, desktop-based operational tools, or on-premise databases developed using older programming languages and architectures.

Many industries still depend heavily on legacy infrastructure. In banking, core transaction systems built decades ago continue processing millions of financial operations every day. Healthcare providers often rely on older electronic medical record systems. Manufacturing companies use aging industrial control systems and production software, while logistics companies maintain legacy supply chain and warehouse management platforms. Retail businesses may still operate older inventory and point-of-sale systems connected to multiple store locations.

Despite their limitations, enterprises continue using these systems because they are stable, deeply integrated into business operations, and expensive to replace. Full modernization projects can take years, involve major operational risks, and require significant financial investment. For many organizations, enhancing existing systems with AI capabilities becomes a more practical and lower-risk strategy than complete replacement.

Why Businesses Want AI in Existing Systems

Businesses are integrating AI into existing systems because they want to improve operational performance without disrupting core business infrastructure. Many legacy environments still handle essential business operations effectively, but they lack the intelligence, automation, and real-time analytical capabilities modern organizations now require. AI helps bridge this gap by adding advanced decision-making and automation layers on top of existing enterprise software.

One major driver is process automation. Enterprises use AI to automate repetitive tasks such as invoice processing, customer support ticket routing, document classification, claims processing, and data entry. This reduces manual workloads and allows employees to focus on higher-value activities. Predictive analytics is another important motivation. Organizations want AI systems that can forecast demand, detect fraud, predict equipment failures, optimize inventory, and identify operational risks using historical enterprise data already stored inside legacy systems.

Customer experience improvement is also accelerating AI adoption. Companies are integrating AI-powered chatbots, recommendation systems, voice assistants, and personalized customer interactions into older CRM and support platforms. At the same time, businesses seek operational efficiency improvements through faster workflows, better data visibility, and intelligent process optimization.

Cost reduction remains a critical factor as well. Replacing an entire enterprise infrastructure can cost millions of dollars and create major downtime risks. AI integration offers a more cost-effective modernization path by allowing businesses to enhance current systems incrementally instead of rebuilding everything from the ground up.

The Growing Enterprise AI Adoption Trend

Enterprise AI adoption has accelerated rapidly as organizations recognize AI as a core business transformation technology rather than an experimental innovation. Companies across finance, healthcare, manufacturing, retail, logistics, and enterprise software are investing heavily in AI-driven automation, analytics, and intelligent operations to improve competitiveness and operational efficiency.

The rise of AI-first companies has also increased pressure on traditional enterprises. Newer businesses are launching with cloud-native AI architectures, automated workflows, and data-driven decision systems that allow them to operate faster and more efficiently than organizations dependent entirely on older infrastructure. This competitive shift is forcing established enterprises to modernize their technology stacks while maintaining business continuity.

At the same time, organizations are realizing that full system replacement is not always necessary to adopt AI successfully. Instead of abandoning decades of infrastructure investment, many enterprises are implementing hybrid modernization strategies that combine legacy systems with AI-powered services, APIs, cloud platforms, and automation tools. This gradual modernization approach reduces operational risk while still enabling businesses to benefit from modern AI capabilities.

The rapid advancement of generative AI, machine learning platforms, and enterprise AI integration tools has made AI adoption more accessible than ever before. Businesses can now deploy AI models alongside existing systems more quickly, at lower cost, and with less disruption than traditional large-scale digital transformation projects allow.

Can AI Work with Old Software Systems?

Yes, AI can work effectively with old software systems, and many enterprises are already doing this successfully. Modern AI integration does not always require organizations to replace their existing infrastructure. Instead, AI capabilities can often be connected to legacy systems through APIs, middleware platforms, robotic process automation (RPA), connectors, microservices, and hybrid cloud architectures.

For example, a bank using an older core banking platform can integrate AI-based fraud detection systems that analyze transaction data in real time without modifying the original banking software. A manufacturing company can connect machine learning models to older industrial systems to predict equipment failures and optimize maintenance schedules. Healthcare providers can layer AI-driven diagnostic support tools on top of legacy patient record systems without replacing their entire healthcare infrastructure.

Middleware and integration layers play a critical role in this process by acting as bridges between older enterprise systems and newer AI services. APIs allow data exchange between systems, while cloud-based AI platforms can process and analyze enterprise data externally before sending results back into the legacy environment.
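As a minimal sketch of the bridging role described above, assume the legacy system exports transactions as fixed-width text records and the AI side exposes a scoring call. The record layout, the field names, and the `score_transaction` function are hypothetical stand-ins chosen for illustration; a real deployment would call an actual model or service in place of the rule-based placeholder.

```python
from dataclasses import dataclass
from typing import Callable


# Hypothetical fixed-width layout of one legacy export record:
# columns 0-9 account id, 10-19 amount in cents, 20-22 country code.
@dataclass
class Transaction:
    account_id: str
    amount_cents: int
    country: str


def parse_legacy_record(line: str) -> Transaction:
    """Translate one fixed-width legacy record into a structured object."""
    return Transaction(
        account_id=line[0:10].strip(),
        amount_cents=int(line[10:20]),
        country=line[20:23].strip(),
    )


def score_transaction(tx: Transaction) -> float:
    """Placeholder for an external AI scoring service (an assumption, not a real API)."""
    risk = 0.1
    if tx.amount_cents > 500_000:
        risk += 0.5
    if tx.country not in {"US", "GB"}:
        risk += 0.3
    return min(risk, 1.0)


def bridge(lines: list[str], scorer: Callable[[Transaction], float]) -> list[str]:
    """Middleware step: parse each record, score it, and emit a delimited
    result line that the legacy side could import."""
    results = []
    for line in lines:
        tx = parse_legacy_record(line)
        results.append(f"{tx.account_id}|{scorer(tx):.2f}")
    return results


export = ["ACC00000010000750000BR ", "ACC00000020000001200US "]
print(bridge(export, score_transaction))  # ['ACC0000001|0.90', 'ACC0000002|0.10']
```

The legacy application is untouched in this arrangement: it only produces an export and consumes a result file, while parsing and scoring live entirely in the integration layer.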

In many cases, complete system replacement is unnecessary because the core business logic inside legacy systems still functions reliably. Instead of rebuilding stable operational platforms, businesses can modernize selectively by adding AI-driven intelligence, automation, and analytics where they deliver the highest business value.

Understanding Legacy System Architecture Before AI Integration

Before integrating artificial intelligence into existing enterprise environments, organizations must first understand the structure, limitations, and operational dependencies of their legacy systems. Many AI initiatives fail not because the AI models themselves are ineffective, but because the underlying infrastructure lacks the flexibility, data accessibility, or scalability required to support modern AI workloads. Legacy environments are often deeply interconnected with business operations, meaning even small architectural changes can impact critical workflows, customer-facing services, or internal processes.

A successful AI integration strategy begins with a comprehensive assessment of the current technology ecosystem. This includes understanding the types of legacy systems in use, identifying infrastructure bottlenecks, evaluating data quality, assessing integration readiness, and analyzing technical debt accumulated over years of system modifications and maintenance. Enterprises also need to determine whether their infrastructure can support real-time data processing, cloud integration, API communication, and AI-driven automation without disrupting ongoing operations.

Understanding the architecture before deployment helps organizations prioritize the right AI use cases, reduce modernization risks, estimate implementation costs more accurately, and choose integration approaches that align with long-term digital transformation goals. Rather than approaching AI as a standalone technology upgrade, businesses must evaluate how AI will interact with existing applications, databases, workflows, security frameworks, and operational dependencies already embedded within the enterprise ecosystem.

Common Types of Legacy Systems

Legacy systems exist in many forms depending on the industry, organizational size, and historical technology investments made by the business. One of the most common types is the monolithic application, in which all business logic, user interfaces, and database functions are tightly integrated into a single large codebase. Such systems can be difficult to modify because changing one component may affect the entire platform.

Mainframe systems remain heavily used in industries such as banking, insurance, government, and aviation due to their reliability and ability to process massive transaction volumes. Many enterprises also continue operating on-premise enterprise software that was deployed years before cloud computing became mainstream. These systems are often customized extensively to support unique operational requirements.

Desktop-based business systems are another form of legacy infrastructure, especially in manufacturing, logistics, and healthcare environments where standalone operational tools still support day-to-day activities. Older ERP and CRM platforms also qualify as legacy systems when they lack modern APIs, cloud compatibility, or scalable integration capabilities. Despite their age, these systems often continue serving as the operational backbone of enterprise organizations.

Technical Characteristics of Legacy Infrastructure

Legacy infrastructure typically has several technical limitations that make AI integration more complex than it is in modern cloud-native systems. One major challenge is the use of older languages and frameworks such as COBOL, Visual Basic, Pascal, or early Java frameworks, which are difficult to maintain and integrate poorly with modern AI tooling and development practices.

Another common characteristic is tight coupling between components. In many older systems, business logic, databases, and user interfaces are interconnected so closely that modifying one area can unintentionally affect other critical functions. This architectural rigidity limits flexibility when introducing AI-powered workflows or automation layers.

Legacy systems also frequently suffer from limited API availability. Many older applications were not designed for external integrations, making it difficult for AI platforms to access enterprise data in real time. Poor documentation further complicates modernization efforts, especially when original developers are no longer available or system knowledge exists only within small internal teams.

Siloed databases are another major issue. Enterprise data may be scattered across disconnected systems, spreadsheets, local servers, and departmental applications, making centralized AI processing and analytics significantly harder to implement effectively.

Identifying AI Readiness in Existing Systems

Before deploying AI, organizations must assess whether their current systems are technically and operationally ready to support AI-driven workloads. This process usually begins with a detailed infrastructure audit to identify hardware limitations, application dependencies, network capabilities, and system performance bottlenecks that could impact AI integration.

Data accessibility is one of the most critical evaluation factors. AI systems require access to reliable, consistent, and well-structured enterprise data. Businesses need to determine whether existing systems expose data through APIs, databases, middleware, or export mechanisms that AI platforms can consume efficiently. If data extraction is difficult or fragmented, additional integration layers may be necessary before AI deployment becomes feasible.

Compute capabilities also play a major role in AI readiness. Older servers and on-premise systems may lack the processing power required for machine learning workloads, real-time analytics, or large-scale automation tasks. In such cases, organizations often adopt hybrid cloud architectures where AI processing occurs in external cloud environments while the core legacy system remains operational internally.

Integration feasibility must also be evaluated carefully. Businesses need to understand how AI tools will interact with existing applications, whether real-time synchronization is possible, and how AI outputs will be incorporated into operational workflows without causing downtime or instability.

Evaluating Existing Data Quality and Availability

Data quality is one of the most important factors determining whether AI integration will succeed or fail. Even advanced AI models cannot produce reliable outputs if the underlying enterprise data is incomplete, inconsistent, outdated, or fragmented across disconnected systems. Before implementing AI, organizations must evaluate both the availability and quality of their existing data assets.

Legacy environments often contain a combination of structured and unstructured data. Structured data may exist inside relational databases, ERP systems, spreadsheets, or transactional systems, while unstructured data may include emails, PDFs, scanned documents, customer support conversations, images, or handwritten records. Businesses must determine which data sources are valuable for AI use cases and whether those sources can be accessed efficiently.

Data silos create another major challenge. Different departments may store information independently using incompatible systems, preventing unified AI analysis across the organization. In many enterprises, duplicate records, inconsistent naming conventions, missing fields, and outdated datasets further reduce data reliability.

Data cleanliness also directly affects AI accuracy. Organizations often need to perform extensive data cleaning, normalization, labeling, and validation before AI models can operate effectively. Without strong data preparation processes, businesses risk deploying AI systems that generate inaccurate insights, biased predictions, or unreliable automation outcomes.
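A first pass at the evaluation described above can be as simple as counting gaps. The sketch below assumes records have already been extracted into dictionaries; the field names and sample rows are invented for illustration, and a real audit would cover many more checks (formats, ranges, staleness).

```python
from collections import Counter


def profile_records(records, required_fields):
    """Count missing values per required field and the share of complete records."""
    missing = Counter()
    complete = 0
    for rec in records:
        # A field counts as missing when it is absent, None, or blank.
        gaps = [
            field for field in required_fields
            if rec.get(field) is None or str(rec.get(field)).strip() == ""
        ]
        missing.update(gaps)
        if not gaps:
            complete += 1
    total = len(records)
    return {
        "total": total,
        "missing_by_field": dict(missing),
        "complete_ratio": complete / total if total else 0.0,
    }


# Hypothetical sample rows standing in for a legacy customer table.
sample = [
    {"id": "1", "email": "a@example.com", "country": "US"},
    {"id": "2", "email": "", "country": "US"},
    {"id": "3", "email": None, "country": None},
    {"id": "4", "email": "d@example.com", "country": "DE"},
]
print(profile_records(sample, ["id", "email", "country"]))
```

A low `complete_ratio` on the fields a model depends on is an early signal that cleaning and normalization work should be scheduled before any training or deployment.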

Business Process Mapping Before AI Deployment

Before integrating AI into legacy systems, businesses must clearly understand which operational processes are best suited for automation or AI enhancement. This requires detailed business process mapping to identify repetitive workflows, decision-heavy tasks, and high-volume operations that consume significant time and human effort.

Many organizations initially attempt broad AI deployment without clearly defining operational objectives, leading to poor adoption and limited business impact. Instead, enterprises should focus on identifying areas where AI can deliver measurable efficiency improvements or cost reductions. Examples include invoice processing, customer service ticket routing, fraud detection, claims processing, inventory forecasting, and document verification workflows.

Decision-heavy operations are often strong candidates for AI integration because machine learning systems can analyze large volumes of historical data faster than human teams. High-volume manual tasks are also ideal for automation because even small efficiency improvements can create significant operational savings at scale.
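The point about scale can be made concrete with a rough estimate: hours saved per year equal annual volume times minutes per item times the share of work that can be automated, divided by 60. The sketch below ranks candidate processes on that figure. The process names and every number in it are hypothetical placeholders, not benchmarks.

```python
from dataclasses import dataclass


@dataclass
class Process:
    name: str
    items_per_year: int
    minutes_per_item: float
    automatable_share: float  # estimated fraction between 0.0 and 1.0

    def hours_saved(self) -> float:
        """Estimated annual hours saved if the automatable share is automated."""
        return self.items_per_year * self.minutes_per_item * self.automatable_share / 60


def rank(processes):
    """Order candidate processes by estimated hours saved, largest first."""
    return sorted(processes, key=lambda p: p.hours_saved(), reverse=True)


# Illustrative figures only.
candidates = [
    Process("contract review", 2_000, 90.0, 0.2),
    Process("invoice processing", 120_000, 6.0, 0.5),
    Process("ticket routing", 300_000, 1.0, 0.8),
]
for p in rank(candidates):
    print(f"{p.name}: {p.hours_saved():.0f} hours/year")
```

In this made-up example, a one-minute task handled 300,000 times a year outranks a 90-minute task handled 2,000 times, which is the kind of result that process mapping is meant to surface.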

Process mapping also helps businesses identify workflow dependencies, approval structures, integration points, and compliance requirements before AI systems are introduced into production environments.

Technical Debt and Its Impact on AI Adoption

Technical debt refers to the long-term operational and architectural issues that accumulate when businesses prioritize short-term fixes over sustainable system design. Most legacy environments contain years of patches, workarounds, outdated integrations, and unsupported components that increase the complexity of AI adoption.

One major impact of technical debt is integration complexity. Older systems often contain undocumented dependencies and custom modifications that make AI integration unpredictable and time-consuming. Even simple API connections or automation workflows may require extensive engineering effort due to outdated architectures.

Scalability limitations are another common issue. Legacy infrastructure may not support real-time AI processing, cloud connectivity, or large-scale data analytics workloads efficiently. As AI adoption expands, older systems can become performance bottlenecks that limit business growth.

Security concerns also increase with technical debt. Unsupported software, outdated authentication systems, and unpatched vulnerabilities create risks when exposing legacy platforms to modern AI services or external integrations. Additionally, maintaining aging infrastructure typically requires higher operational costs and specialized expertise that becomes increasingly difficult to source over time.

Understanding and managing technical debt is therefore essential before organizations attempt large-scale AI modernization initiatives.

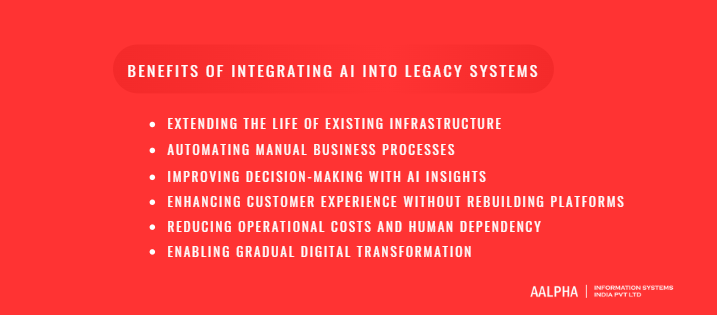

Benefits of Integrating AI into Legacy Systems

Many enterprises assume that adopting artificial intelligence requires replacing their existing infrastructure entirely. In reality, some of the most successful AI modernization initiatives happen within organizations that continue operating older enterprise systems while gradually layering AI capabilities on top of them. This approach allows businesses to modernize strategically without disrupting critical operations, rewriting stable applications, or investing heavily in large-scale infrastructure replacement projects.

Integrating AI into legacy systems offers several operational and financial advantages. Businesses can automate repetitive tasks, improve decision-making, optimize customer experiences, and unlock valuable insights from years of enterprise data already stored inside existing systems. AI also helps organizations improve efficiency while preserving operational continuity, which is especially important for industries where downtime can lead to financial losses, compliance violations, or customer dissatisfaction.

Another major advantage is that AI-driven modernization supports gradual digital transformation rather than forcing enterprises into risky all-or-nothing migration strategies. Companies can modernize selectively by targeting high-impact workflows first while maintaining core operational stability. This incremental approach reduces implementation risks and allows organizations to achieve measurable business value faster.

As AI technologies continue evolving, enterprises that successfully integrate AI into existing infrastructure gain stronger scalability, better operational intelligence, and improved competitiveness without abandoning the systems that already support their day-to-day business activities.

Extending the Life of Existing Infrastructure

One of the biggest benefits of integrating AI into legacy systems is the ability to extend the operational lifespan of existing enterprise infrastructure. Many organizations have invested millions of dollars over decades building stable operational systems that continue supporting essential business functions reliably. Completely replacing these systems is often expensive, time-consuming, and operationally risky.

AI integration allows businesses to modernize functionality without rebuilding the entire technology stack from scratch. Instead of replacing core banking systems, manufacturing platforms, healthcare applications, or supply chain software, enterprises can enhance them with AI-powered analytics, automation, and intelligent decision-making capabilities. This reduces capital expenditure while preserving the reliability of systems employees already understand and depend on daily.

Operational continuity is another critical advantage. Full-scale system migrations can interrupt workflows, introduce compatibility issues, and create downtime risks that affect customers and internal operations. AI augmentation enables businesses to improve performance gradually while maintaining ongoing service delivery. This approach is particularly valuable in industries such as finance, healthcare, logistics, and government operations where uninterrupted system availability is essential for daily business continuity.

Automating Manual Business Processes

AI integration helps enterprises automate repetitive and labor-intensive business processes that traditionally require significant manual effort. Many legacy systems still depend heavily on human-driven workflows because they were designed before intelligent automation technologies became widely available. By introducing AI capabilities into these environments, businesses can improve efficiency, reduce delays, and eliminate operational bottlenecks.

Invoice processing is one common example. AI-powered document recognition systems can extract invoice data, validate records, identify discrepancies, and automate approvals without requiring manual data entry. Customer support operations also benefit significantly from AI integration through intelligent ticket routing, AI chatbots, automated responses, and sentiment analysis systems connected to existing CRM platforms.
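To illustrate the validation and discrepancy steps of invoice processing mentioned above, the sketch below assumes a document recognition stage has already produced a structured invoice. The dictionary shape and field names (`lines`, `total`, `po_number`) are hypothetical; only the checking logic is shown, not the extraction itself.

```python
from decimal import Decimal


def find_discrepancies(invoice: dict) -> list[str]:
    """Return human-readable issues found in an extracted invoice."""
    issues = []
    # Decimal avoids binary floating-point rounding on currency amounts.
    line_total = sum(
        (Decimal(item["quantity"]) * Decimal(item["unit_price"])
         for item in invoice["lines"]),
        Decimal("0"),
    )
    if line_total != Decimal(invoice["total"]):
        issues.append(
            f"total mismatch: lines sum to {line_total}, invoice states {invoice['total']}"
        )
    if not invoice.get("po_number"):
        issues.append("missing purchase order number")
    return issues


# Hypothetical output of an upstream extraction step.
extracted = {
    "po_number": "",
    "total": "45.98",
    "lines": [
        {"quantity": "2", "unit_price": "19.99"},
        {"quantity": "1", "unit_price": "5.00"},
    ],
}
for issue in find_discrepancies(extracted):
    print(issue)
```

Invoices that return an empty list could proceed to automated approval, while anything else is routed to a person, which keeps human review focused on the exceptions.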

Document classification is another high-value use case. Enterprises managing large volumes of contracts, claims, medical records, compliance documents, or legal paperwork can use AI to categorize, organize, and retrieve information more efficiently. Internal workflows such as employee onboarding, procurement approvals, compliance verification, and operational reporting can also be automated using AI-driven process orchestration.

By reducing repetitive workloads, businesses improve productivity while allowing employees to focus on strategic tasks that require human judgment, creativity, and decision-making capabilities rather than manual administrative work.

Improving Decision-Making with AI Insights

Legacy systems often contain years of operational and transactional data, but many organizations struggle to extract actionable insights from that information using traditional reporting tools alone. AI integration helps businesses transform historical enterprise data into intelligent decision-making systems capable of generating predictions, recommendations, and real-time operational insights.

Predictive analytics is one of the most valuable benefits. AI models can analyze historical trends to forecast customer demand, identify potential equipment failures, estimate financial risks, or predict supply chain disruptions before they occur. This allows businesses to make proactive decisions instead of reacting after problems emerge.
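As a deliberately simple stand-in for the equipment-failure case, the sketch below flags sensor readings that exceed a limit derived from historical data. It is a statistical threshold, not a trained machine learning model, and the readings, units, and the `k` multiplier are assumptions made for illustration.

```python
from statistics import mean, stdev


def flag_anomalies(baseline, readings, k=3.0):
    """Return indexes of readings above mean + k * standard deviation of the baseline."""
    limit = mean(baseline) + k * stdev(baseline)
    return [i for i, value in enumerate(readings) if value > limit]


# Hypothetical vibration readings exported from a legacy monitoring system.
history = [50.0, 51.0, 49.0, 50.5, 49.5]
latest = [50.2, 52.0, 55.1, 49.8, 53.0]
print(flag_anomalies(history, latest))  # [2, 4]
```

A production system would usually replace the fixed threshold with a model trained on labeled failure history, but the data flow is the same: historical records from the legacy system set the expectation, and new readings are compared against it.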

Forecasting capabilities also improve significantly with AI-driven analysis. Retail companies can optimize inventory planning, logistics firms can anticipate delivery demand fluctuations, and manufacturers can forecast maintenance requirements more accurately using machine learning algorithms connected to legacy operational systems.

Risk scoring is another major application area. Banks, insurance providers, and healthcare organizations use AI to evaluate transaction risks, insurance claims, fraud patterns, and patient outcomes more effectively. Real-time recommendation engines can also support operational teams by suggesting optimal actions, pricing adjustments, workflow priorities, or resource allocation decisions based on continuously analyzed enterprise data.

These AI-driven insights help organizations improve accuracy, reduce uncertainty, and accelerate strategic decision-making across business operations.

Enhancing Customer Experience Without Rebuilding Platforms

Modern customers expect fast, personalized, and intelligent digital experiences regardless of whether the underlying business infrastructure is modern or decades old. AI integration allows enterprises to improve customer engagement and service quality without replacing existing customer-facing systems entirely.

AI-powered chatbots are among the most common enhancements added to legacy customer support environments. Businesses can deploy conversational AI assistants connected to older CRM systems, support databases, and operational platforms to provide faster responses, automate common inquiries, and reduce customer wait times. These chatbots can operate across websites, mobile apps, messaging platforms, and voice support channels while still interacting with legacy backend systems.

Recommendation systems also help improve customer experiences significantly. Retailers, streaming platforms, financial institutions, and eCommerce businesses can use AI models to deliver personalized product suggestions, service recommendations, and targeted offers using customer behavior data already stored within existing enterprise systems.

AI-driven personalization further enhances customer engagement by analyzing preferences, purchase history, and interaction patterns to create more relevant experiences. Importantly, businesses can implement these capabilities incrementally without rebuilding the underlying operational platforms customers already use, reducing modernization costs while still improving digital competitiveness.

Reducing Operational Costs and Human Dependency

AI integration helps organizations reduce operational costs by improving efficiency, minimizing manual work, and optimizing resource utilization across business functions. Many legacy systems require extensive human involvement because they lack intelligent automation capabilities, resulting in higher labor costs and slower operational execution.

Labor optimization becomes possible when AI automates repetitive administrative tasks such as data entry, reporting, document handling, customer support routing, and workflow approvals. This reduces dependency on large operational teams while allowing businesses to scale more efficiently without proportional increases in staffing costs.

AI can also reduce human error rates. Manual processes often lead to inconsistencies, data inaccuracies, delayed approvals, and compliance risks. Well-validated machine learning systems can process large volumes of information more consistently than manual handling, improving operational reliability while reducing costly mistakes.

Faster execution is another major advantage. AI-powered automation accelerates workflows that previously required hours or days of manual processing. Real-time analytics, automated approvals, predictive maintenance systems, and intelligent scheduling tools help organizations respond faster to operational changes and customer demands.

Over time, these efficiency improvements contribute to lower operating expenses, higher productivity, and stronger business scalability without requiring complete infrastructure replacement.

Enabling Gradual Digital Transformation

One of the most strategic benefits of AI integration is that it enables gradual digital transformation instead of forcing businesses into disruptive large-scale modernization projects. Many enterprises hesitate to replace legacy systems because of the operational risks, implementation complexity, and financial investment involved in full infrastructure migration.

AI allows organizations to modernize incrementally by targeting specific workflows, departments, or operational challenges first. Businesses can begin with smaller AI initiatives such as customer support automation, predictive analytics, or intelligent reporting before expanding AI capabilities across broader enterprise functions. This phased approach reduces implementation risk while generating measurable business value early in the modernization journey.

Hybrid architecture adoption also becomes easier through AI integration. Organizations can maintain core legacy systems internally while connecting them to cloud-based AI services, external analytics platforms, automation engines, and modern APIs. This creates a more flexible technology ecosystem that supports gradual modernization without disrupting critical business operations.

Over time, businesses can continue evolving their infrastructure strategically while preserving operational stability, protecting previous technology investments, and adapting to changing market demands more effectively.

Challenges of AI Integration with Legacy Systems

While integrating AI into legacy systems offers significant operational and strategic benefits, the process is rarely straightforward. Many enterprises operate on infrastructure that was designed long before cloud computing, modern APIs, machine learning platforms, or real-time analytics became standard technology requirements. As a result, organizations often encounter technical, operational, financial, and organizational barriers when attempting to introduce AI into older environments.

Legacy systems are usually deeply embedded into daily business operations, meaning even minor modifications can affect mission-critical workflows. Enterprises must therefore balance innovation with operational stability while ensuring compliance, security, and system reliability throughout the modernization process. In many cases, the biggest challenge is not the AI technology itself but the complexity of connecting modern AI tools with aging enterprise architectures that were never designed for intelligent automation or large-scale data-driven processing.

Data fragmentation, scalability limitations, integration bottlenecks, and technical debt further increase implementation complexity. Businesses also face organizational resistance as employees adapt to AI-assisted workflows and new operational models. Additionally, modernization costs can escalate quickly when infrastructure upgrades, data transformation, security controls, and integration engineering are required simultaneously.

Understanding these challenges early allows organizations to develop realistic implementation strategies, allocate resources more effectively, and reduce the risks associated with enterprise AI modernization initiatives.

-

Lack of APIs and Modern Integration Capabilities

One of the biggest barriers to AI integration in legacy environments is the absence of modern integration capabilities. Many older enterprise systems were developed before API-driven architectures became standard, making it difficult for AI platforms to access operational data or interact with existing applications efficiently.

Some legacy systems rely on older SOAP-based communication protocols instead of modern REST APIs. While SOAP can still support integrations, it is often more rigid, slower to implement, and less compatible with modern cloud-native AI services. In many cases, organizations must build additional middleware layers to translate data formats and enable communication between older systems and newer AI platforms.
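
The translation step described above can be sketched in a few lines. This is a minimal illustration, not a production adapter: the SOAP payload, the `urn:example:inventory` namespace, and the field names `Sku` and `Quantity` are all hypothetical stand-ins for whatever a real legacy service returns.

```python
import json
import xml.etree.ElementTree as ET

# Hypothetical SOAP response from a legacy inventory service (assumed shape).
SOAP_RESPONSE = """<soap:Envelope xmlns:soap="http://schemas.xmlsoap.org/soap/envelope/">
  <soap:Body>
    <GetItemResponse xmlns="urn:example:inventory">
      <Sku>AB-100</Sku>
      <Quantity>42</Quantity>
    </GetItemResponse>
  </soap:Body>
</soap:Envelope>"""

# Prefixes used only inside this script to address the XML namespaces.
NS = {
    "soap": "http://schemas.xmlsoap.org/soap/envelope/",
    "inv": "urn:example:inventory",
}


def soap_to_json(xml_text: str) -> str:
    """Translate the SOAP body into the flat JSON a REST-style AI service expects."""
    root = ET.fromstring(xml_text)
    item = root.find("soap:Body/inv:GetItemResponse", NS)
    if item is None:
        raise ValueError("unexpected SOAP payload")
    payload = {
        "sku": item.findtext("inv:Sku", namespaces=NS),
        "quantity": int(item.findtext("inv:Quantity", namespaces=NS)),
    }
    return json.dumps(payload)


print(soap_to_json(SOAP_RESPONSE))
```

In practice this logic usually lives inside the middleware layer, so neither the legacy application nor the AI service has to know about the other's format.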

Middleware dependency itself introduces added complexity because businesses may need enterprise service buses, integration hubs, or custom orchestration platforms simply to connect AI services to existing infrastructure. When no direct integration method exists, companies often build custom connectors tailored specifically to their legacy applications.

These custom integrations can become difficult to maintain over time, especially when documentation is limited or the original software architecture is poorly understood. As a result, lack of integration readiness significantly increases both implementation effort and long-term operational complexity during AI modernization projects.

-

Poor Data Quality and Fragmented Databases

AI systems depend heavily on accurate, consistent, and accessible data. Unfortunately, many legacy environments contain fragmented, incomplete, or inconsistent datasets that reduce the effectiveness of AI-driven analytics and automation.

Incomplete data is a common issue in older enterprise systems where historical records may contain missing values, outdated information, or manually entered data with varying levels of accuracy. Duplicate records further complicate AI training and analysis because machine learning models may generate misleading insights when the same entity appears multiple times under different formats or identifiers.

Non-standard data formats also create integration challenges. Different departments often use separate databases, spreadsheets, file structures, and operational systems that store information inconsistently. One business unit may use structured relational databases while another relies on scanned PDFs, emails, or manually maintained records.

Data fragmentation across disconnected systems creates silos that prevent AI platforms from obtaining a unified enterprise-wide view. Before AI implementation, organizations often need extensive data cleaning, normalization, mapping, and consolidation processes. Without proper data preparation, AI models may produce inaccurate forecasts, unreliable recommendations, or biased automation outcomes that reduce business trust in AI systems altogether.
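
A quick profiling pass can make these problems visible before any model work begins. The sketch below counts missing required fields and duplicate keys in a handful of sample rows. The field names and records are invented for illustration, and treating keys as equal after trimming whitespace and ignoring case is an assumption that may not suit every dataset.

```python
from collections import Counter

# Invented sample rows, as they might look after export from two legacy systems.
records = [
    {"customer_id": "C001", "name": "Acme Corp", "email": "ops@acme.example"},
    {"customer_id": "c001 ", "name": "ACME Corp.", "email": "ops@acme.example"},
    {"customer_id": "C002", "name": "Globex", "email": ""},
]


def profile(rows, key_field, required_fields):
    """Report row count, missing required values, and keys that appear more than once."""
    missing = Counter()
    for row in rows:
        for field in required_fields:
            if not (row.get(field) or "").strip():
                missing[field] += 1
    # Assumption: keys match after trimming whitespace and upper-casing.
    keys = Counter((row.get(key_field) or "").strip().upper() for row in rows)
    duplicates = {key: count for key, count in keys.items() if key and count > 1}
    return {"rows": len(rows), "missing": dict(missing), "duplicate_keys": duplicates}


print(profile(records, "customer_id", ["name", "email"]))
```

A report like this gives teams a rough estimate of the cleaning effort required for each source system.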

-

Security and Compliance Risks

Integrating AI into legacy systems introduces significant security and regulatory challenges, especially in industries handling sensitive customer, financial, or healthcare data. Many older systems were not designed for external integrations or cloud-based AI processing, increasing the risk of data exposure during modernization efforts.

Regulations and standards such as the General Data Protection Regulation (GDPR), the Health Insurance Portability and Accountability Act (HIPAA), and the Payment Card Industry Data Security Standard (PCI DSS) impose strict requirements on how businesses collect, process, store, and transmit sensitive data. AI systems accessing enterprise records must comply with these requirements while maintaining strong data governance and auditability.

Legacy infrastructure often contains outdated authentication mechanisms, unsupported software components, and unpatched vulnerabilities that increase cybersecurity risks when exposing systems to modern AI services or external APIs. Data exposure concerns become even more serious when organizations integrate cloud-based AI platforms with internally hosted enterprise systems.

Businesses must also address issues related to access control, encryption, AI model security, and third-party vendor compliance. Without strong governance frameworks and cybersecurity measures, AI integration can unintentionally expand the attack surface of already vulnerable legacy environments.
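
One common control is to strip or mask sensitive values before a record leaves the internal network. The sketch below shows the idea under simplifying assumptions: the field names in `SENSITIVE_FIELDS` are hypothetical, and the email pattern is deliberately rough. Masking of this kind is one layer of defense and does not by itself satisfy any regulation.

```python
import re

# Hypothetical field names that must never be sent to an external AI service.
SENSITIVE_FIELDS = {"ssn", "card_number"}

# Rough pattern for email addresses inside free text (illustrative, not exhaustive).
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def redact(record):
    """Return a copy of the record that is safer to send outside the network."""
    safe = {}
    for key, value in record.items():
        if key in SENSITIVE_FIELDS:
            safe[key] = "[REDACTED]"
        elif isinstance(value, str):
            safe[key] = EMAIL_RE.sub("[EMAIL]", value)
        else:
            safe[key] = value
    return safe


print(redact({"ssn": "123-45-6789", "note": "Contact jane@corp.example today", "age": 40}))
```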

-

Infrastructure Scalability Limitations

Many legacy systems were designed for predictable workloads and stable operational environments rather than the dynamic processing requirements associated with modern AI technologies. As a result, infrastructure scalability becomes a major limitation during AI integration projects.

Older servers often lack the processing power, memory capacity, and storage performance required for machine learning workloads, real-time analytics, or large-scale automation systems. AI applications frequently require GPU acceleration, distributed computing, or cloud-scale processing capabilities that traditional on-premise infrastructure cannot support efficiently.

Limited compute power can lead to slower AI inference times, delayed analytics, and reduced operational responsiveness. This becomes particularly problematic for businesses requiring real-time fraud detection, predictive maintenance, intelligent routing, or customer support automation.

Incompatibility with modern AI workloads is another challenge. Legacy operating systems, outdated databases, and older enterprise applications may not support containerization, orchestration tools, or cloud-native deployment methods commonly used in AI development environments.

To overcome these limitations, many organizations adopt hybrid architectures where AI processing occurs in external cloud environments while legacy systems continue handling core operational tasks internally. However, managing hybrid infrastructure introduces additional integration and operational complexity.

-

Resistance to Change Inside Organizations

Technical challenges are only part of the AI modernization process. Organizational resistance often becomes a major barrier to successful AI adoption within enterprises operating on legacy systems.

Employees may worry that AI-driven automation could replace existing roles or significantly change established workflows. This creates hesitation and skepticism toward modernization initiatives, particularly among teams accustomed to long-standing operational processes and manual decision-making systems.

Adoption friction also occurs when employees are required to learn new tools, AI-assisted workflows, or data-driven operational methods without sufficient training or organizational support. In some cases, staff members may distrust AI-generated recommendations or resist relying on automated systems for business-critical decisions.

Another challenge is the lack of internal AI expertise. Many organizations operating legacy systems do not have dedicated AI engineers, machine learning specialists, or data scientists capable of managing enterprise AI deployments effectively. Existing IT teams may have extensive experience maintaining older infrastructure but limited exposure to modern AI architectures, cloud platforms, or automation frameworks.

Without strong leadership alignment, employee education, and change management strategies, businesses may struggle to achieve organization-wide adoption even if the technical implementation itself succeeds.

-

Integration Complexity and Downtime Risks

Integrating AI into legacy environments is often technically complex because older enterprise systems contain deeply interconnected dependencies that evolved over many years. Even small infrastructure changes can impact multiple operational processes simultaneously.

Business continuity concerns become especially important in industries where downtime directly affects revenue, customer trust, or regulatory compliance. Financial systems, healthcare applications, manufacturing platforms, and logistics networks often operate continuously, leaving little room for service interruptions during AI deployment.

System dependencies further increase integration difficulty. Legacy applications may rely on undocumented workflows, tightly coupled databases, or outdated third-party integrations that make AI connectivity unpredictable. Businesses frequently discover hidden dependencies only after modernization work has already begun.

Migration risks also arise when transferring operational data into AI-enabled workflows or external processing environments. Inaccurate synchronization, failed integrations, or incompatible system behaviors can disrupt daily operations if not managed carefully.

Because of these risks, many organizations adopt phased rollout strategies, sandbox testing environments, and parallel deployment models to minimize operational disruption during AI integration initiatives.

-

High Initial Modernization Costs

Although AI integration can reduce long-term operational costs, the initial modernization investment can still be substantial. Many organizations underestimate the financial resources required to prepare legacy systems for AI deployment successfully.

Infrastructure upgrades are often necessary because older hardware environments may not support modern AI workloads efficiently. Businesses may need to invest in cloud migration, server modernization, network improvements, storage optimization, or hybrid computing architectures before AI systems can operate reliably.

AI engineering costs also contribute significantly to implementation expenses. Enterprises often require specialized expertise in machine learning, data engineering, enterprise integration, cloud infrastructure, and cybersecurity to design scalable AI-enabled architectures. Hiring internal specialists or partnering with experienced AI integration providers increases upfront investment requirements.

Data transformation costs can become particularly expensive. Cleaning, normalizing, labeling, migrating, and consolidating enterprise data across disconnected systems requires extensive engineering effort and ongoing maintenance.

Without proper planning, modernization projects can exceed budgets quickly, especially when hidden technical debt or undocumented infrastructure complexities emerge during implementation.

-

Vendor Lock-In and Technology Compatibility Issues

Vendor lock-in is another major concern when integrating AI into legacy environments. Many older enterprise systems rely on proprietary software, closed architectures, or vendor-specific technologies that limit flexibility and interoperability with modern AI platforms.

Proprietary systems may restrict direct database access, API development, or third-party integrations, forcing organizations to rely heavily on the original software vendor for modernization support. This can increase costs, slow implementation timelines, and reduce architectural flexibility during AI deployment.

Closed architectures also create compatibility issues when businesses attempt to integrate cloud-based AI services, automation tools, or external analytics platforms with older infrastructure. Some legacy applications may not support modern integration standards, containerized deployments, or scalable microservices architectures.

Long-term maintainability becomes another concern. Custom connectors and vendor-specific integration solutions may solve short-term problems but create future operational complexity if businesses later decide to migrate platforms or expand AI capabilities further.

To reduce dependency risks, organizations increasingly prioritize modular architectures, open integration standards, and scalable middleware frameworks when modernizing legacy systems with AI technologies.

Step-by-Step Process to Integrate AI into Legacy Systems

Integrating AI into legacy systems requires far more than simply connecting a machine learning model to an existing application. Enterprise AI modernization involves infrastructure analysis, data preparation, integration planning, security assessment, workflow redesign, and continuous optimization. Organizations that approach AI integration strategically are far more likely to achieve measurable operational improvements while minimizing disruption to existing business processes.

A phased and structured implementation approach is critical because legacy environments often contain tightly connected dependencies, operational risks, and technical limitations that cannot be ignored. Rather than attempting enterprise-wide transformation immediately, businesses should focus on carefully selected use cases, incremental deployment, and scalable integration architectures that align with long-term modernization goals.

Successful AI integration projects typically begin with business alignment and infrastructure assessment before moving into data preparation, technology selection, integration development, testing, deployment, and ongoing monitoring. In many cases, collaboration between internal technical teams and an AI development company helps organizations manage integration complexity while maintaining compatibility with existing enterprise environments. Each phase plays an essential role in ensuring that AI systems deliver reliable outputs and consistent operational performance.

-

Define Business Objectives and AI Use Cases

The first step in integrating AI into legacy systems is defining clear business objectives and identifying practical AI use cases that align with measurable organizational goals. Many AI initiatives fail because companies adopt AI technologies without clearly understanding the operational problems they are trying to solve.

Businesses should begin by identifying pain points inside existing workflows where AI can deliver measurable improvements. These may include slow customer support response times, manual invoice processing, fraud detection inefficiencies, inventory forecasting inaccuracies, equipment downtime, or operational bottlenecks in supply chain management. Instead of pursuing broad AI transformation immediately, organizations should focus on high-impact areas where automation or predictive intelligence can generate visible business value quickly.

AI initiatives must also align with key business performance indicators such as operational efficiency, cost reduction, customer satisfaction, response time improvements, error reduction, or revenue growth. Defining measurable outcomes early helps organizations evaluate implementation success objectively rather than relying on vague innovation goals.

Prioritizing high-impact workflows is equally important because legacy environments often contain hundreds of interconnected operational processes. Businesses should initially target repetitive, data-heavy, and rule-based tasks where AI adoption can be implemented with lower operational risk and faster ROI potential.

Strong objective definition ensures AI modernization efforts remain business-driven rather than purely technology-driven, improving both adoption rates and long-term operational success.

-

Audit Existing Infrastructure and Applications

Before deploying AI, organizations must conduct a comprehensive audit of their existing infrastructure, enterprise applications, databases, and integration dependencies. This assessment helps businesses understand whether their current environment can support AI workloads effectively and identifies the modifications required before implementation begins.

The infrastructure audit typically includes server performance analysis, storage capacity evaluation, network architecture assessment, operating system compatibility reviews, and application dependency mapping. Legacy systems often contain undocumented integrations, outdated software components, and tightly coupled architectures that can complicate AI deployment significantly.

Dependency mapping is particularly important because many enterprise systems interact with multiple applications simultaneously. Businesses need to understand how customer data, transactional workflows, reporting systems, authentication services, and operational processes are interconnected before introducing AI-enabled automation or analytics layers.
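
Once dependencies are recorded, even a simple graph traversal can answer the question "what could break if we touch this system?". The example below is a sketch over a hypothetical dependency map; the system names are invented, and a real map would come from the audit itself.

```python
from collections import deque

# Hypothetical map: each system lists the systems that consume its data.
DEPENDENTS = {
    "core_banking": ["reporting", "crm"],
    "crm": ["support_portal"],
    "reporting": [],
    "support_portal": [],
    "auth_service": ["crm", "support_portal"],
}


def impacted_by(system, dependents):
    """Breadth-first search for every system downstream of the given one."""
    seen, queue = set(), deque([system])
    while queue:
        current = queue.popleft()
        for downstream in dependents.get(current, []):
            if downstream not in seen:
                seen.add(downstream)
                queue.append(downstream)
    return sorted(seen)


print(impacted_by("core_banking", DEPENDENTS))
```

Running the same query for each candidate integration point helps rank them by how many downstream systems a change would put at risk.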

Organizations must also identify integration bottlenecks that may limit AI performance. Common challenges include slow database access, limited API support, disconnected systems, batch-processing constraints, and real-time synchronization limitations. In some cases, older applications may require middleware platforms, API gateways, or data extraction layers before AI services can interact with them effectively.

This audit phase allows enterprises to identify technical risks early, estimate infrastructure upgrade requirements more accurately, and select AI integration strategies that align with existing architectural realities rather than theoretical modernization assumptions.

-

Assess and Prepare Enterprise Data

Data preparation is one of the most critical stages of AI integration because AI systems depend heavily on the quality, accessibility, and consistency of enterprise data. Even highly advanced AI models cannot deliver reliable outcomes if the underlying data is fragmented or inaccurate.

The process begins with data extraction from legacy systems, databases, spreadsheets, document repositories, ERP platforms, CRM systems, and operational applications. In many organizations, enterprise data is spread across multiple disconnected systems, requiring specialized extraction pipelines and transformation workflows before AI processing becomes possible.

Once extracted, businesses must clean and normalize the data to remove duplicates, correct inconsistencies, standardize formats, and eliminate corrupted or incomplete records. Data quality problems are extremely common in legacy environments because older systems often evolved over decades without centralized governance standards.
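
The cleaning step might look like the following sketch, which standardizes identifiers and dates, drops duplicates, and sets aside rows it cannot repair. The field names and the list of accepted date formats are assumptions made for the example. In particular, slash-separated dates are read as day/month/year, which would be wrong for a system that writes month first.

```python
from datetime import datetime

# Assumed date formats found in the legacy exports; order matters for ambiguous values.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y")


def normalize_date(value):
    """Return an ISO 8601 date string, or None if no known format matches."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(value.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    return None


def clean(rows):
    """Split rows into cleaned records and rejected ones that need manual review."""
    seen, cleaned, rejected = set(), [], []
    for row in rows:
        invoice_id = row.get("invoice_id", "").strip().upper()
        date = normalize_date(row.get("date", ""))
        if not invoice_id or date is None:
            rejected.append(row)  # incomplete or unparseable
            continue
        if invoice_id in seen:
            continue  # duplicate of an earlier record
        seen.add(invoice_id)
        cleaned.append({"invoice_id": invoice_id, "date": date})
    return cleaned, rejected


rows = [
    {"invoice_id": "inv-1", "date": "2021-03-04"},
    {"invoice_id": "INV-1 ", "date": "04/03/2021"},
    {"invoice_id": "inv-2", "date": "31/12/2020"},
    {"invoice_id": "inv-3", "date": "unknown"},
]
cleaned, rejected = clean(rows)
print(cleaned)
print(rejected)
```

Keeping rejected rows rather than silently discarding them lets data owners correct the source records later.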

Data labeling may also be necessary depending on the AI use case. Machine learning models used for fraud detection, document classification, predictive maintenance, or customer behavior analysis often require labeled training datasets to improve accuracy.

Centralization is another key objective during preparation. Many enterprises establish data lakes, cloud storage platforms, or centralized analytics environments to consolidate operational data from multiple systems into a unified structure accessible by AI services. Without strong data preparation and governance processes, businesses risk deploying AI systems that produce unreliable predictions and inconsistent automation outcomes.

-

Choose the Right AI Integration Approach

Selecting the right integration architecture is essential because different legacy environments require different modernization strategies depending on infrastructure complexity, operational requirements, scalability goals, and system compatibility limitations.

API-based AI integration is one of the most common approaches for enterprises with partially modernized systems. APIs allow legacy applications to exchange data with cloud-based AI services, machine learning platforms, and external analytics engines. This approach works well when systems already support REST APIs or can be extended through API gateways.

Middleware integration is often necessary for older environments lacking modern communication capabilities. Middleware platforms act as intermediaries between legacy applications and AI services, enabling data translation, orchestration, workflow automation, and system interoperability across disconnected infrastructures.

Robotic Process Automation (RPA) combined with AI is particularly effective when direct system integration is difficult or impossible. RPA bots can interact with legacy user interfaces the same way employees do while AI models provide intelligent decision-making capabilities such as document interpretation, natural language understanding, or predictive analysis.

AI microservices architecture is another increasingly popular approach. Instead of embedding AI directly into legacy systems, businesses deploy independent AI modules that communicate externally with operational platforms. This improves scalability and allows organizations to modernize gradually without rewriting entire applications.

Some enterprises also adopt AI overlay architecture, where AI systems operate as intelligent layers above existing infrastructure without deeply modifying core operational systems. This approach minimizes disruption while still enabling advanced automation, analytics, and recommendation capabilities.

The ideal integration strategy depends on the organization’s infrastructure maturity, operational risk tolerance, compliance requirements, and long-term modernization roadmap.

-

Select AI Models, Frameworks, and Platforms

After defining integration architecture, businesses must select the AI technologies, frameworks, and platforms best suited for their operational requirements and technical environments. The choice depends heavily on the intended AI use cases, infrastructure capabilities, scalability needs, and regulatory constraints.

Many enterprises now integrate large language models and generative AI services from companies such as OpenAI and Anthropic for conversational AI, document processing, intelligent search, and enterprise copilots. These platforms provide API-based access that simplifies integration with existing enterprise systems.

For custom machine learning development, frameworks such as TensorFlow and PyTorch are widely used because they support scalable AI model training and deployment across various enterprise workloads.

Cloud providers also offer enterprise AI platforms designed specifically for business integration. Microsoft Azure AI services provide tools for language processing, computer vision, predictive analytics, and enterprise automation. Similarly, the AI services from Amazon Web Services (AWS) support scalable machine learning infrastructure and real-time analytics capabilities.

In some industries, organizations may choose custom AI models tailored specifically to operational workflows, compliance requirements, or proprietary datasets. While custom development offers greater flexibility and control, it also increases engineering complexity and long-term maintenance responsibilities.

Selecting the right technology stack requires balancing scalability, integration compatibility, cost, security, governance, and long-term maintainability across the enterprise ecosystem.

-

Build Integration Layers and APIs

Once the AI architecture and platforms are selected, businesses must develop the technical integration layers that enable communication between legacy systems and AI services. This phase involves building data pipelines, APIs, middleware orchestration layers, and synchronization mechanisms capable of supporting reliable enterprise-scale operations.

Data pipelines are essential because AI systems require continuous access to operational data from existing enterprise platforms. These pipelines extract, transform, and route information between databases, business applications, cloud environments, and AI processing engines. In many cases, businesses implement extract, transform, load (ETL) processes to standardize and centralize data before AI analysis occurs.
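
A very small extract-transform-load pass is sketched below, using in-memory SQLite databases to stand in for the legacy source and the analytics target. The table names, columns, and the decision to default a missing currency to USD are assumptions made for illustration only.

```python
import sqlite3


def run_etl(source, target):
    """Copy orders from the legacy table into a cleaned table; return the row count."""
    # Extract: read raw rows from the (hypothetical) legacy table.
    rows = source.execute(
        "SELECT id, amount_cents, currency FROM legacy_orders"
    ).fetchall()
    # Transform: convert cents to currency units and standardize currency codes.
    # Assumption: a missing currency means USD.
    transformed = [
        (order_id, cents / 100, (currency or "USD").strip().upper())
        for order_id, cents, currency in rows
    ]
    # Load: write into the table the AI service will read from.
    target.execute(
        "CREATE TABLE IF NOT EXISTS orders_clean "
        "(id INTEGER PRIMARY KEY, amount REAL, currency TEXT)"
    )
    target.executemany("INSERT OR REPLACE INTO orders_clean VALUES (?, ?, ?)", transformed)
    target.commit()
    return len(transformed)


# In-memory databases stand in for the real source and target systems.
source = sqlite3.connect(":memory:")
source.execute("CREATE TABLE legacy_orders (id INTEGER, amount_cents INTEGER, currency TEXT)")
source.executemany(
    "INSERT INTO legacy_orders VALUES (?, ?, ?)", [(1, 1999, " usd"), (2, 500, None)]
)
target = sqlite3.connect(":memory:")

loaded = run_etl(source, target)
print(loaded, target.execute("SELECT * FROM orders_clean ORDER BY id").fetchall())
```

Because the load step uses `INSERT OR REPLACE`, rerunning the job updates existing rows instead of duplicating them, which matters when pipelines are retried after a failure.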

Middleware platforms often play a central role in orchestration. Middleware enables communication between systems using different protocols, data formats, and architectures while managing workflow coordination and message routing across the enterprise environment.

Event-driven architecture is increasingly used in AI modernization because it allows systems to respond to operational events in real time. For example, an AI fraud detection engine may analyze financial transactions immediately after they occur, or predictive maintenance systems may process sensor alerts the moment equipment abnormalities are detected.
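
The pattern can be illustrated with a tiny in-process event bus. This is a sketch only: a production system would typically rely on a message broker, and the `score_transaction` function is a placeholder threshold rule standing in for a real fraud model. The event name and payload fields are invented.

```python
from collections import defaultdict


class EventBus:
    """Minimal publish/subscribe dispatcher (in-process, synchronous)."""

    def __init__(self):
        self._handlers = defaultdict(list)

    def subscribe(self, event_type, handler):
        self._handlers[event_type].append(handler)

    def publish(self, event_type, payload):
        # Call every handler registered for this event type and collect results.
        return [handler(payload) for handler in self._handlers[event_type]]


def score_transaction(txn):
    # Placeholder rule standing in for a real fraud model.
    risk = 0.9 if txn["amount"] > 10_000 else 0.1
    return {"txn_id": txn["id"], "risk": risk, "flagged": risk > 0.5}


bus = EventBus()
bus.subscribe("transaction.created", score_transaction)

# The legacy system (or an adapter around it) publishes an event per transaction.
print(bus.publish("transaction.created", {"id": "T1", "amount": 25_000}))
```

The legacy application only needs to emit events. It does not need to know which AI services, if any, are listening.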

Real-time synchronization is especially important when AI outputs directly affect operational decisions. Businesses must ensure AI-generated recommendations, predictions, or automation actions remain synchronized with live enterprise data to avoid inconsistencies or operational errors.

Strong integration design ensures scalability, stability, and long-term interoperability between legacy infrastructure and AI-driven systems.

-

Test AI Integration in Sandbox Environments

Before deploying AI systems into production environments, organizations must conduct extensive testing inside controlled sandbox environments to minimize operational risks and validate system behavior under realistic conditions.

Functional testing ensures that AI integrations operate correctly across enterprise workflows. Businesses verify whether APIs exchange data properly, automation rules execute accurately, and AI-generated outputs align with expected operational outcomes. Testing should cover both standard operational scenarios and edge cases where unusual system behavior may occur.

Security testing is equally important because AI integrations often expose legacy systems to external services, cloud platforms, and expanded data access pathways. Organizations must validate authentication controls, encryption mechanisms, API security, access permissions, and compliance protections before production deployment begins.

Performance validation focuses on scalability and operational reliability. AI systems must process workloads efficiently without degrading existing application performance or causing delays inside mission-critical workflows. Enterprises often simulate peak transaction volumes, high user activity, and large-scale data processing conditions to evaluate system stability.

Sandbox testing also allows businesses to identify hidden integration issues, synchronization delays, and workflow conflicts before AI systems impact real operational environments.

-

Deploy AI Gradually Across Business Operations

Large-scale enterprise AI deployment should occur gradually rather than through immediate organization-wide rollout. Phased implementation reduces operational disruption while allowing businesses to monitor performance, gather feedback, and optimize workflows incrementally.

Many organizations begin with pilot deployments targeting specific departments or operational processes where AI can generate measurable improvements quickly. Customer support automation, document processing, fraud detection, or predictive analytics often serve as initial deployment areas because they offer lower implementation risk and visible operational value.

Department-by-department deployment enables organizations to validate AI effectiveness in controlled operational environments before scaling further. This approach also improves employee adoption because teams can gradually adapt to AI-assisted workflows instead of facing sudden enterprise-wide process changes.

Risk mitigation remains a major focus during deployment. Businesses typically maintain fallback mechanisms, parallel processing workflows, and manual override capabilities while AI systems stabilize operationally. This ensures continuity if integration issues, inaccurate predictions, or automation failures occur during early deployment stages.
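
A fallback wrapper of this kind can be sketched as follows. The confidence threshold, the rule used as the fallback, and the model interface (a callable returning a label and a confidence score) are all assumptions made for the example.

```python
def rules_engine(invoice):
    """Existing rule-based logic, kept as the fallback path (illustrative rule)."""
    return "approve" if invoice["amount"] <= 1000 else "manual_review"


def failing_model(invoice):
    """Stand-in for an AI service that is unavailable."""
    raise RuntimeError("model endpoint unavailable")


def decide(invoice, model, rules=rules_engine, min_confidence=0.8):
    """Use the model when it is healthy and confident; otherwise fall back to rules."""
    try:
        label, confidence = model(invoice)
    except Exception:
        return {"decision": rules(invoice), "source": "rules_fallback"}
    if confidence < min_confidence:
        return {"decision": rules(invoice), "source": "rules_low_confidence"}
    return {"decision": label, "source": "model"}


print(decide({"amount": 500}, lambda inv: ("approve", 0.95)))
print(decide({"amount": 5000}, lambda inv: ("approve", 0.40)))
print(decide({"amount": 5000}, failing_model))
```

Recording the `source` of each decision also gives teams the data they need to judge when the model is stable enough to rely on without the parallel path.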

Gradual rollout strategies help enterprises modernize more safely while building internal confidence in AI-driven operations over time.

-

Monitor, Optimize, and Retrain AI Systems

AI integration is not a one-time implementation project. Continuous monitoring, optimization, and retraining are essential to maintaining long-term performance and operational reliability.

One major challenge is model drift, where AI accuracy declines over time because operational patterns, customer behavior, market conditions, or enterprise data structures change. Businesses must continuously evaluate AI outputs to ensure predictions and automation workflows remain accurate and relevant.
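
One widely used way to quantify a shift in input data is the Population Stability Index (PSI), which compares how values are distributed across bins in a baseline sample and in a recent sample. The sketch below is a simplified version: it uses equal-width bins derived from the baseline, and the bin count and the small floor for empty bins are assumptions. Rule-of-thumb thresholds (often quoted as 0.1 and 0.25) should be validated against your own data before being used for alerts.

```python
import math


def psi(expected, actual, bins=10):
    """Population Stability Index between a baseline sample and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width when all values are equal

    def shares(values):
        counts = [0] * bins
        for value in values:
            index = int((value - lo) / width)
            counts[min(max(index, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(count / len(values), 1e-6) for count in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = list(range(100))            # e.g. feature values seen at training time
shifted = [v + 50 for v in baseline]   # the same feature after behavior changed

print(psi(baseline, baseline))
print(psi(baseline, shifted))
```

A value near zero indicates that the recent data looks like the baseline, while larger values suggest the model is seeing inputs it was not trained on.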

Monitoring frameworks help organizations track AI performance metrics such as response accuracy, processing speed, prediction reliability, automation success rates, and system stability. These monitoring systems also identify anomalies, failures, or unusual behaviors that require investigation.

Continuous learning and retraining are often necessary as new enterprise data becomes available. Machine learning models may need periodic updates to improve fraud detection, recommendation quality, forecasting precision, or operational automation accuracy.

Organizations must also review governance policies, compliance controls, security protections, and workflow performance regularly as AI adoption expands across the enterprise. Long-term AI success depends on maintaining adaptable, scalable, and continuously optimized operational ecosystems rather than static deployments.

Best AI Integration Approaches for Legacy Systems

Choosing the right integration architecture is one of the most important decisions during AI modernization. Different legacy environments require different approaches depending on infrastructure age, integration flexibility, operational complexity, compliance requirements, and scalability goals.

Some businesses prioritize fast deployment using cloud-based APIs, while others require middleware orchestration, hybrid cloud infrastructure, or event-driven architectures capable of supporting real-time operational intelligence. The best approach depends on how tightly existing systems are coupled, how accessible enterprise data is, and how much architectural flexibility the organization can support.

Most successful AI modernization initiatives use modular integration strategies that allow businesses to add AI incrementally while minimizing disruption to existing operations.

-

API-Based AI Integration

API-based integration is one of the fastest and most practical methods for connecting AI capabilities to legacy systems. APIs allow enterprise applications to exchange data with external AI services without requiring complete system redesign or major infrastructure replacement.

This approach is especially effective when businesses already have partially modernized systems capable of supporting REST APIs or API gateways. Organizations can connect existing applications to cloud-based AI services for natural language processing, predictive analytics, recommendation engines, or intelligent automation.

Cloud AI providers offer scalable APIs that reduce implementation complexity significantly. Businesses can integrate AI-powered customer support, fraud detection, document analysis, or forecasting tools quickly while maintaining their existing operational infrastructure.

API-based integration also supports incremental modernization because organizations can add AI capabilities gradually across different workflows without rebuilding the entire enterprise architecture simultaneously.
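
A thin client wrapper is often all that is needed on the legacy side. The sketch below maps a legacy record to a JSON request and maps the response back. The endpoint URL, the legacy column names (`TKT_NO`, `DESCR`), and the response field `category` are hypothetical. The transport function is injectable so the mapping logic can be tested without a network call.

```python
import json
import urllib.request

AI_ENDPOINT = "https://ai.example.com/v1/classify"  # hypothetical URL


def http_post(url, body, timeout=5.0):
    """POST a JSON body and return the decoded JSON response."""
    request = urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


def classify_ticket(legacy_row, post=http_post):
    """Map a legacy ticket row to the AI request and back to a simple result."""
    body = {
        "ticket_id": str(legacy_row["TKT_NO"]),
        "text": legacy_row["DESCR"].strip(),
    }
    result = post(AI_ENDPOINT, body)
    return {"ticket_id": body["ticket_id"], "category": result.get("category", "unknown")}


def fake_post(url, body):
    """Test double standing in for the remote AI service."""
    return {"category": "billing"} if "invoice" in body["text"] else {}


print(classify_ticket({"TKT_NO": 1042, "DESCR": "  Wrong invoice total "}, post=fake_post))
```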

-

Middleware-Based Integration

Middleware-based integration is commonly used when legacy systems lack direct API support or modern communication protocols. Middleware platforms act as intermediaries between older applications and modern AI services, enabling interoperability across disconnected enterprise environments.

Enterprise service bus (ESB) platforms are widely used to manage communication, workflow orchestration, data transformation, and message routing between systems using different architectures or protocols. Integration hubs provide centralized coordination for enterprise-wide AI workflows while reducing direct dependencies between applications.

Middleware solutions are particularly valuable in highly fragmented environments where multiple legacy systems must interact with shared AI services simultaneously. Instead of modifying every application individually, businesses can centralize integration logic through middleware infrastructure.

Although middleware introduces additional architectural layers, it often provides greater flexibility, scalability, and long-term maintainability during complex enterprise AI modernization projects.
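
The transform-then-route idea behind an ESB or integration hub can be illustrated with a small in-process sketch. The `IntegrationHub` class, the pipe-delimited mainframe format, and the message fields below are all hypothetical; a production middleware platform adds persistence, retries, monitoring, and security on top of this basic shape.

```python
import json
from typing import Callable, Dict, List

Message = Dict[str, object]


class IntegrationHub:
    """Toy in-process stand-in for an ESB: transform a message, then route it."""

    def __init__(self) -> None:
        self._transformers: Dict[str, Callable[[str], Message]] = {}
        self._routes: Dict[str, List[Callable[[Message], None]]] = {}

    def register_source(self, source: str, transformer: Callable[[str], Message]) -> None:
        """Declare how raw messages from one system become canonical messages."""
        self._transformers[source] = transformer

    def subscribe(self, message_type: str, handler: Callable[[Message], None]) -> None:
        """Register a consumer (for example an AI service client) for a message type."""
        self._routes.setdefault(message_type, []).append(handler)

    def publish(self, source: str, raw: str) -> int:
        """Transform a raw message and deliver it; returns the number of handlers called."""
        message = self._transformers[source](raw)
        handlers = self._routes.get(str(message["type"]), [])
        for handler in handlers:
            handler(message)
        return len(handlers)


def from_pipe_delimited(raw: str) -> Message:
    """Hypothetical mainframe format: TYPE|ID|AMOUNT."""
    msg_type, record_id, amount = raw.split("|")
    return {"type": msg_type.lower(), "id": record_id, "amount": float(amount)}


def from_json(raw: str) -> Message:
    """Hypothetical newer system that already emits JSON."""
    return json.loads(raw)


hub = IntegrationHub()
hub.register_source("mainframe", from_pipe_delimited)
hub.register_source("crm", from_json)

received: List[Message] = []          # stands in for a shared AI service
hub.subscribe("payment", received.append)

hub.publish("mainframe", "PAYMENT|A-17|250.00")
hub.publish("crm", '{"type": "payment", "id": "C-9", "amount": 40.5}')
```

Both systems reach the same consumer without knowing about each other or about the AI service, which is the dependency reduction the section describes.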

-

AI Microservices Architecture

AI microservices architecture separates AI functionality into independent modular services that operate externally from the core legacy infrastructure. Instead of embedding AI directly into older enterprise applications, businesses deploy standalone AI modules responsible for specific functions such as recommendations, analytics, fraud detection, or automation.

This modular design improves scalability because individual AI services can scale independently based on workload requirements. Organizations can also update, retrain, or replace specific AI components without affecting the entire enterprise system.

Microservices architectures work particularly well in hybrid modernization strategies where businesses gradually transition toward cloud-native operational models while still maintaining legacy backend systems internally.

Another advantage is deployment flexibility. AI microservices can operate across cloud platforms, containers, or distributed environments while continuing to communicate with older enterprise systems through APIs or middleware layers.

This approach reduces modernization risk and allows enterprises to expand AI capabilities incrementally over time.
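
A standalone AI service of this kind can be sketched with Python's standard library alone. The `/score` path, the request shape, and the scoring formula are placeholders chosen for illustration; a real service would load a trained model and would normally run behind a production-grade web server.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer
from typing import Tuple


def score_transaction(amount: float) -> float:
    """Placeholder for a real model: larger amounts get higher risk scores."""
    return round(min(max(amount, 0.0) / 10_000.0, 1.0), 3)


def handle_score(body: bytes) -> Tuple[int, dict]:
    """Pure request logic, kept separate from HTTP plumbing so it is easy to test."""
    try:
        amount = float(json.loads(body)["amount"])
    except (ValueError, KeyError, TypeError):
        return 400, {"error": "expected JSON body with a numeric 'amount'"}
    return 200, {"risk_score": score_transaction(amount)}


class ScoreHandler(BaseHTTPRequestHandler):
    """Thin HTTP wrapper around handle_score."""

    def do_POST(self) -> None:
        if self.path != "/score":
            self.send_error(404)
            return
        length = int(self.headers.get("Content-Length", 0))
        status, payload = handle_score(self.rfile.read(length))
        data = json.dumps(payload).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)


def make_server(host: str = "127.0.0.1", port: int = 8080) -> HTTPServer:
    """Create the service; call .serve_forever() on the result to run it."""
    return HTTPServer((host, port), ScoreHandler)
```

Because the scoring logic lives in its own process behind a small HTTP contract, the placeholder model can be retrained or replaced without touching the legacy application that calls it.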

-

Robotic Process Automation (RPA) with AI

Robotic Process Automation combined with AI is highly effective for organizations operating legacy systems with limited integration capabilities. RPA bots interact directly with existing software interfaces the same way human employees do, eliminating the need for deep system modifications.

Traditional RPA automates repetitive rule-based tasks such as data entry, report generation, and workflow navigation. When combined with AI, these systems gain intelligent capabilities including document understanding, language processing, predictive analysis, and decision support.

AI-assisted workflows can process invoices, classify documents, validate forms, route customer requests, and automate operational decisions while interacting with older applications through the user interface itself.

This approach is particularly valuable when direct database access or API integration is impractical. Businesses can modernize operational workflows quickly while preserving legacy applications unchanged.
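
The division of labor between the AI step and the bot can be sketched as follows. Here a pair of regular expressions stands in for a document-understanding model, and the UI steps target a hypothetical legacy entry screen; real RPA tools have their own APIs for driving the interface, which are not shown.

```python
import re
from typing import Dict, List, Tuple

UiStep = Tuple[str, str]  # (action, argument), e.g. ("type", "INV-1042")


def extract_invoice_fields(text: str) -> Dict[str, str]:
    """Regex stand-in for an AI document-understanding model."""
    number = re.search(r"Invoice\s*(?:No\.?|#)\s*([A-Z0-9-]+)", text, re.IGNORECASE)
    total = re.search(r"Total\s*:?\s*\$?([0-9]+(?:\.[0-9]{2})?)", text, re.IGNORECASE)
    if not number or not total:
        raise ValueError("could not find invoice number and total")
    return {"invoice_number": number.group(1), "total": total.group(1)}


def plan_ui_steps(fields: Dict[str, str]) -> List[UiStep]:
    """Translate extracted fields into keystrokes for a hypothetical legacy entry screen."""
    return [
        ("focus", "invoice_number_field"),
        ("type", fields["invoice_number"]),
        ("press", "TAB"),
        ("type", fields["total"]),
        ("press", "ENTER"),
    ]


document = "ACME Corp\nInvoice No. INV-1042\nTotal: $1280.50\n"
steps = plan_ui_steps(extract_invoice_fields(document))
```

The extraction step decides what to enter, and the bot only replays the resulting steps through the existing screen, so the legacy application needs no changes.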

-

Hybrid Cloud AI Architecture

Hybrid cloud architecture combines on-premise legacy infrastructure with cloud-based AI processing environments. This model allows businesses to preserve core operational systems internally while leveraging the scalability and computational power of modern cloud AI platforms.

Sensitive enterprise data or mission-critical workflows can remain within internal infrastructure for compliance and security purposes, while AI processing, analytics, and automation workloads execute externally in scalable cloud environments.

This architecture provides a balance between security and scalability. Organizations gain access to advanced machine learning infrastructure, GPU acceleration, and cloud AI services without fully migrating existing systems to the cloud.

Hybrid models are especially common in finance, healthcare, government, and manufacturing industries where regulatory constraints and operational reliability remain major priorities.
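
One common way to keep sensitive values on-premise is to pseudonymize them before a record leaves the internal network. The sketch below uses a keyed hash (HMAC-SHA256) for that purpose. The field names and the choice of which fields are sensitive are assumptions, and pseudonymization alone may not satisfy a given regulation, so this should be read as an illustration rather than a compliance recipe.

```python
import hashlib
import hmac
from typing import Dict, Iterable

# Hypothetical field names; which fields count as sensitive is a policy decision.
SENSITIVE_FIELDS = ("patient_name", "national_id")


def pseudonymize(value: str, secret_key: bytes) -> str:
    """Replace a value with a truncated keyed hash; the key never leaves the premises."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]


def prepare_for_cloud(record: Dict[str, object], secret_key: bytes,
                      sensitive: Iterable[str] = SENSITIVE_FIELDS) -> Dict[str, object]:
    """Return a copy of the record with sensitive fields replaced by pseudonyms."""
    outbound = dict(record)
    for field in sensitive:
        if field in outbound:
            outbound[field] = pseudonymize(str(outbound[field]), secret_key)
    return outbound


key = b"demo-key-kept-on-premise"  # illustrative only; load real keys from a secret store
record = {"patient_name": "Jane Doe", "national_id": "123-45-6789",
          "age": 54, "lab_glucose_mg_dl": 131}
outbound = prepare_for_cloud(record, key)
```

Because the same input and key always produce the same pseudonym, the cloud side can still group records belonging to one person without seeing the original identifier.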

-

Event-Driven AI Systems

Event-driven architecture enables AI systems to respond to operational events in real time as business activities occur. Instead of relying solely on scheduled batch processing, event-driven systems process data streams immediately when transactions, alerts, or operational triggers are generated.

Message queues and streaming platforms such as Apache Kafka are commonly used to support high-volume, real-time AI processing. These systems allow AI services to consume and analyze events continuously as they are produced across enterprise operations.

For example, fraud detection engines can evaluate financial transactions instantly, predictive maintenance systems can respond to equipment sensor alerts immediately, and logistics platforms can optimize routing dynamically based on live operational conditions.

Event-driven architectures improve responsiveness, scalability, and operational intelligence, making them highly valuable for enterprises requiring real-time AI decision-making capabilities integrated with existing systems.
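
The consume-and-dispatch loop at the heart of an event-driven system can be sketched with Python's in-memory `queue.Queue` standing in for a message broker. The event fields, the 90 °C threshold, and the handler name are assumptions for illustration; a real deployment would read from a broker such as Kafka and call a trained model instead of a fixed rule.

```python
import queue
from typing import Callable, Dict, List

Event = Dict[str, object]


def run_consumer(events: "queue.Queue", handlers: Dict[str, Callable[[Event], None]]) -> int:
    """Drain the queue, dispatching each event by type; None is the stop signal."""
    processed = 0
    while True:
        event = events.get()
        if event is None:
            break
        handler = handlers.get(str(event["type"]))
        if handler is not None:
            handler(event)
            processed += 1
    return processed


alerts: List[str] = []


def on_sensor_reading(event: Event) -> None:
    # Hypothetical rule standing in for a model: flag overheating equipment.
    if float(event["temperature_c"]) > 90.0:
        alerts.append(f"overheat:{event['machine_id']}")


# In-memory queue standing in for a message broker topic.
bus: "queue.Queue" = queue.Queue()
bus.put({"type": "sensor_reading", "machine_id": "M1", "temperature_c": 72.5})
bus.put({"type": "sensor_reading", "machine_id": "M2", "temperature_c": 95.1})
bus.put({"type": "heartbeat", "machine_id": "M1"})  # no handler registered, so it is skipped
bus.put(None)

processed = run_consumer(bus, {"sensor_reading": on_sensor_reading})
```

Each event is handled as soon as it is read rather than waiting for a nightly batch, and new event types can be supported by registering another handler.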

Real-World AI Use Cases in Legacy System Modernization

AI integration is no longer limited to experimental innovation labs or newly built digital platforms. Enterprises across traditional industries are successfully modernizing legacy systems by embedding AI-driven automation, analytics, and intelligent decision-making capabilities into existing operational infrastructure. Instead of replacing mission-critical systems entirely, organizations are using AI to extend functionality, improve operational efficiency, and unlock value from historical enterprise data already stored within legacy environments.

The most successful AI modernization projects focus on solving practical operational challenges such as fraud prevention, predictive maintenance, workflow automation, customer service optimization, inventory management, and demand forecasting. By connecting AI services to older ERP systems, mainframes, databases, CRM platforms, and industrial applications, businesses can improve performance without disrupting core operational continuity.

Different industries apply AI modernization differently depending on their operational requirements, compliance obligations, and infrastructure maturity. Banking institutions focus heavily on fraud detection and risk analysis, healthcare providers use AI to automate clinical workflows, manufacturers improve equipment reliability through predictive maintenance, and logistics companies optimize delivery operations using AI-powered forecasting systems.

These real-world implementations demonstrate that legacy systems are not barriers to AI adoption. In many cases, older enterprise environments already contain the historical operational data necessary to train highly effective AI systems capable of driving measurable business improvements.

-

Banking and Financial Services

The banking and financial services industry remains one of the largest adopters of AI-driven legacy modernization because many financial institutions still operate on decades-old core banking systems that process millions of daily transactions. Modernizing this critical infrastructure while minimizing operational disruption has become a key focus area. Rather than replacing these highly stable systems entirely, banks are layering AI capabilities on top of existing platforms to improve operational intelligence, automation, and risk management.

Fraud detection is one of the most common AI use cases in banking modernization. Machine learning models analyze transaction behavior in real time to identify suspicious patterns, unusual spending activity, account takeover attempts, and payment anomalies. These AI systems operate alongside legacy transaction processing platforms without disrupting core banking operations.
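
As a deliberately simple illustration of flagging unusual spending, the sketch below compares a new transaction amount with a customer's own history using a z-score. This is a basic statistical check, not a machine learning model, and the threshold of three standard deviations is an assumption; production fraud systems combine many more signals.

```python
from statistics import mean, stdev
from typing import List


def is_suspicious(history: List[float], amount: float, threshold: float = 3.0) -> bool:
    """Flag an amount more than `threshold` standard deviations above the customer's mean."""
    if len(history) < 2:
        return False  # not enough history to estimate spread
    spread = stdev(history)
    if spread == 0:
        return amount > history[0]  # all past amounts identical
    return (amount - mean(history)) / spread > threshold


# Hypothetical past card payments for one customer.
past_amounts = [42.0, 38.5, 51.0, 47.25, 40.0, 44.75]
```

A check like this can run in a separate service that reads transactions from the core banking platform, so the legacy transaction path is not modified.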

Credit scoring and lending automation have also improved significantly through AI integration. Banks use AI models to analyze customer financial history, transaction behavior, repayment patterns, and alternative data sources to assess creditworthiness faster and, in many cases, more accurately than traditional manual underwriting methods.

Many financial institutions are also modernizing legacy core banking platforms with AI-powered customer support, intelligent search systems, predictive financial recommendations, and automated compliance monitoring. This allows banks to improve customer experiences and operational efficiency while preserving the reliability and regulatory stability of existing banking infrastructure.

-

Healthcare Systems

Healthcare organizations continue relying heavily on legacy infrastructure because medical systems often contain years of patient records, clinical workflows, and regulatory integrations that are difficult to replace safely. Modernizing these systems is becoming a priority as providers seek to improve operational efficiency, interoperability, and patient experience without disrupting day-to-day operations. AI integration allows healthcare organizations to modernize operations while maintaining continuity of care and compliance with strict healthcare regulations.

Medical record analysis is one of the most valuable AI applications in healthcare modernization. Natural language processing systems can analyze physician notes, patient histories, diagnostic reports, and clinical documentation stored inside older electronic medical record systems. This helps healthcare providers retrieve information faster and improve clinical decision-making efficiency.

Clinical workflow automation is another major use case. Hospitals and clinics use AI to automate appointment scheduling, patient triage, medical coding, claims processing, prescription management, and administrative documentation tasks connected to legacy healthcare applications.

AI-assisted diagnostics are also becoming increasingly common. Machine learning systems analyze medical images, laboratory results, and patient data to assist healthcare professionals in identifying potential abnormalities and evaluating treatment options. These AI systems typically operate as support layers connected to existing hospital infrastructure rather than replacing core clinical systems entirely.

By integrating AI gradually, healthcare organizations improve efficiency, reduce administrative burdens, and support better patient outcomes while maintaining operational stability and compliance requirements.

-

Manufacturing and Industrial Systems

Manufacturing companies often operate highly specialized legacy systems controlling production lines, industrial equipment, warehouse operations, and supply chain workflows. Many of these environments were not originally designed for real-time analytics or predictive intelligence, making AI integration highly valuable for operational modernization.

Predictive maintenance is one of the most widely adopted AI use cases in industrial environments. AI systems analyze sensor data, equipment performance metrics, vibration patterns, temperature readings, and historical maintenance records to predict machinery failures before breakdowns occur. This helps manufacturers reduce downtime, extend equipment lifespan, and optimize maintenance schedules.
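
A very small example of turning sensor readings into a maintenance signal is a rolling-mean check: an alert is raised only when the average of the last few readings stays above a limit, so a single spike is ignored. The window size, limit, and vibration values below are made up for illustration, and a real system would typically use a trained model rather than a fixed threshold.

```python
from collections import deque
from typing import Iterable, List


def rolling_mean_alerts(readings: Iterable[float], window: int, limit: float) -> List[int]:
    """Return indices where the mean of the last `window` readings exceeds `limit`."""
    recent: deque = deque(maxlen=window)
    alerts: List[int] = []
    for index, value in enumerate(readings):
        recent.append(value)
        if len(recent) == window and sum(recent) / window > limit:
            alerts.append(index)
    return alerts


# Hypothetical vibration readings: one isolated spike, then a sustained rise.
vibration_mm_s = [2.0, 2.1, 1.9, 8.0, 2.0, 2.2, 5.0, 6.0, 7.0]
alert_indices = rolling_mean_alerts(vibration_mm_s, window=3, limit=4.5)
```

With these values the isolated spike at index 3 does not trigger an alert, while the sustained rise at the end does.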

Quality inspection processes have also improved significantly through AI modernization. Computer vision systems integrated with production environments can detect manufacturing defects, assembly inconsistencies, packaging errors, and product quality issues, often faster and, in many settings, more accurately than manual inspection methods.

Supply chain forecasting is another important area where AI enhances older manufacturing infrastructure. Machine learning models analyze production data, supplier performance, inventory levels, transportation trends, and market demand to improve procurement planning and reduce supply chain disruptions.

These AI capabilities allow manufacturers to modernize operational intelligence while continuing to use existing industrial infrastructure and production management systems.

-

Retail and eCommerce Platforms

Retailers and eCommerce businesses frequently modernize legacy systems with AI to improve personalization, inventory management, customer analytics, and operational forecasting without rebuilding their entire commerce infrastructure.

Recommendation engines are among the most visible AI integrations in retail modernization. AI systems analyze customer browsing behavior, purchase history, product interactions, and shopping preferences to generate personalized product recommendations connected to existing eCommerce platforms and CRM systems.
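
The core idea of a purchase-history recommender can be sketched with a simple co-occurrence count: items bought by customers with overlapping baskets are ranked by how much those baskets overlap. The customer names and products are invented, and production recommenders use far richer models; this only illustrates how such a service can work from data exported out of an existing commerce system.

```python
from collections import Counter
from typing import Dict, List, Set


def recommend(purchases: Dict[str, Set[str]], customer: str, top_n: int = 2) -> List[str]:
    """Rank items bought by customers who share at least one item with `customer`."""
    owned = purchases[customer]
    scores: Counter = Counter()
    for other, items in purchases.items():
        if other == customer or not (owned & items):
            continue
        for item in items - owned:
            scores[item] += len(owned & items)  # weight by basket overlap
    # Sort by score descending, then by name so ties are deterministic.
    ranked = sorted(scores.items(), key=lambda pair: (-pair[1], pair[0]))
    return [item for item, _ in ranked[:top_n]]


# Hypothetical purchase history keyed by customer.
purchase_history = {
    "ana": {"kettle", "teapot"},
    "ben": {"kettle", "teapot", "mug"},
    "cy": {"kettle", "toaster"},
    "dee": {"lamp"},
}
suggestions = recommend(purchase_history, "ana")
```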

Inventory forecasting is another major use case. Retailers use machine learning models to predict product demand, seasonal trends, replenishment requirements, and stock movement patterns using historical sales data stored inside legacy ERP and inventory management systems. This helps reduce overstocking, minimize shortages, and improve operational planning.
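
A basic version of this forecast can be written with simple exponential smoothing, which weights recent sales more heavily than older ones. The smoothing factor, the sales figures, and the `reorder_quantity` helper are assumptions for illustration; real demand models usually also account for seasonality, promotions, and lead times.

```python
import math
from typing import List


def exponential_smoothing_forecast(sales: List[float], alpha: float = 0.5) -> float:
    """One-step-ahead forecast via simple exponential smoothing."""
    if not sales:
        raise ValueError("need at least one observation")
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    level = sales[0]
    for observed in sales[1:]:
        level = alpha * observed + (1.0 - alpha) * level
    return level


def reorder_quantity(forecast: float, on_hand: int, safety_stock: int) -> int:
    """Units to order so that stock covers the forecast plus a safety buffer."""
    return max(0, math.ceil(forecast) + safety_stock - on_hand)


# Hypothetical weekly unit sales pulled from a legacy ERP export.
weekly_units = [100.0, 120.0, 80.0, 100.0]
forecast = exponential_smoothing_forecast(weekly_units, alpha=0.5)
```

The forecast can then feed a replenishment rule such as `reorder_quantity(forecast, on_hand=60, safety_stock=20)` without any change to the system that stores the sales history.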

Customer analytics platforms powered by AI provide retailers with deeper insights into customer behavior, purchasing trends, retention patterns, and engagement performance. Businesses can segment audiences more effectively, optimize marketing campaigns, and improve pricing strategies using AI-driven analysis layered on top of older commerce systems.

Importantly, these AI capabilities often operate independently from the core retail platform itself, allowing businesses to modernize incrementally while preserving existing operational infrastructure and transactional stability.

-

Logistics and Transportation

Logistics and transportation companies generate massive amounts of operational data across routing systems, fleet management platforms, warehouse operations, and delivery networks. Many organizations continue operating legacy logistics infrastructure while integrating AI to improve efficiency, forecasting, and operational automation.

Route optimization is one of the most impactful AI use cases in logistics modernization. AI systems analyze traffic conditions, weather patterns, fuel consumption, delivery schedules, driver availability, and geographic constraints to adjust transportation routes as conditions change. This improves delivery efficiency while reducing operational costs.
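
To make the routing idea concrete, the sketch below orders delivery stops with the nearest-neighbor heuristic, using straight-line distance only. This is a classic greedy approximation rather than an AI model, and it ignores traffic, weather, and time windows; the coordinates and stop names are invented. It requires Python 3.8 or later for `math.dist`.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float]


def nearest_neighbor_route(depot: Point, stops: Dict[str, Point]) -> List[str]:
    """Greedy heuristic: always drive to the closest unvisited stop."""
    remaining = dict(stops)
    position = depot
    order: List[str] = []
    while remaining:
        name = min(remaining, key=lambda n: math.dist(position, remaining[n]))
        order.append(name)
        position = remaining.pop(name)
    return order


def route_length(depot: Point, stops: Dict[str, Point], order: List[str]) -> float:
    """Total straight-line distance of depot -> stops in order -> depot."""
    path = [depot] + [stops[name] for name in order] + [depot]
    return sum(math.dist(a, b) for a, b in zip(path, path[1:]))


# Hypothetical stop coordinates on a flat grid.
stops = {"A": (0.0, 3.0), "B": (4.0, 3.0), "C": (4.0, 0.0)}
order = nearest_neighbor_route((0.0, 0.0), stops)
total = route_length((0.0, 0.0), stops, order)
```

A production optimizer would replace the distance function with predicted travel times and re-run as new conditions arrive, while the legacy dispatch system continues to hold the list of stops.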

Demand forecasting is another major application area. Logistics companies use AI to predict shipping volumes, warehouse capacity requirements, seasonal demand fluctuations, and regional delivery trends based on historical operational data stored inside older enterprise systems.