The rise of Large Language Models (LLMs) has marked a turning point in software development, particularly for SaaS applications. LLMs such as OpenAI’s GPT-4, Anthropic’s Claude, Meta’s LLaMA, and Mistral are no longer experimental tools; they are rapidly becoming foundational infrastructure for modern digital services. Unlike traditional machine learning models, which require heavy feature engineering and domain-specific tailoring, LLMs are pre-trained on vast corpora of data and can generalize across tasks with minimal adaptation. This versatility makes them uniquely suited to SaaS environments, where agility, scalability, and user experience are the primary drivers of value.

What are LLMs and Why They Matter for SaaS

At their core, LLMs are deep learning models designed to understand and generate human-like language. They excel at natural language processing tasks such as text summarization, classification, translation, information retrieval, and reasoning. Unlike older rule-based NLP systems, LLMs are context-aware, capable of handling nuanced inputs, and can produce dynamic responses tailored to different scenarios.

For SaaS providers, this matters because language is the dominant interface between software and users. From customer support chats to internal workflows and documentation, most interactions rely on text. Embedding an LLM into SaaS transforms these touchpoints into intelligent, adaptive experiences. For example, an LLM can power self-service knowledge bases, generate personalized onboarding guides, or provide real-time insights from complex datasets. By doing so, SaaS companies can reduce customer support costs, increase retention, and differentiate themselves in highly competitive markets.

Current Market Trends: AI-First SaaS and LLM Adoption

The SaaS industry has already begun shifting toward an “AI-first” approach, where machine intelligence is not a feature but a core design principle. Several high-profile SaaS platforms have set the precedent:

- Notion AI integrates generative writing assistance directly into the productivity suite, reducing friction in documentation workflows.

- Jasper delivers copywriting-as-a-service, built almost entirely on GPT models, demonstrating how an LLM can form the backbone of a SaaS business model.

- Intercom leverages AI chatbots and copilots to reduce first-line support volumes while improving customer experience.

- GitHub Copilot has effectively redefined SaaS in the developer tools sector, embedding contextual AI assistance into daily workflows.

This trend is not limited to well-funded Silicon Valley companies. Mid-tier and niche SaaS providers are increasingly adopting open-source LLMs like LLaMA and Mistral to reduce costs, maintain data sovereignty, and enable fine-tuning for domain-specific tasks. According to reports from McKinsey and Gartner, AI-enhanced SaaS is projected to capture the majority of new enterprise software spending over the next five years. In other words, companies that fail to integrate LLMs risk obsolescence as customers demand smarter, more adaptive solutions.

The global large language models (LLMs) market was valued at USD 5.6 billion in 2024 and is expected to grow to USD 35.4 billion by 2030, expanding at a CAGR of 36.9% between 2025 and 2030.

Key Challenges Businesses Face in Integration

Despite the promise, integrating LLMs into SaaS platforms is far from straightforward. Businesses often encounter several recurring challenges:

- Cost and Infrastructure: Running state-of-the-art LLMs at scale requires significant computational resources. While APIs lower the entry barrier, usage fees can quickly balloon with high transaction volumes.

- Latency and User Experience: SaaS applications live or die by responsiveness. If an LLM takes several seconds to generate a response, it can erode user trust. Optimizing inference times while preserving accuracy is a major engineering hurdle.

- Hallucinations and Reliability: LLMs sometimes produce factually incorrect or misleading outputs, a phenomenon known as hallucination. For SaaS platforms in regulated sectors like healthcare or finance, this risk is unacceptable without robust guardrails.

- Compliance and Security: SaaS providers operating globally must comply with GDPR, HIPAA, SOC 2, and other regulations. Feeding sensitive customer data into third-party APIs creates compliance risks unless data handling pipelines are carefully architected.

- Integration Complexity: LLMs are not plug-and-play components. They require orchestration with existing SaaS workflows, databases, and APIs, which can increase development overhead and demand new skill sets within teams.

These challenges explain why many companies experiment with pilots or partial LLM integrations before scaling. Understanding these obstacles upfront is crucial for developing realistic roadmaps and budgets.

This article provides a complete guide for SaaS leaders, CTOs, and product managers who want to leverage LLMs effectively. We will start by unpacking what LLMs mean in the SaaS context, followed by a detailed analysis of high-value use cases across industries. Then we will examine the technical foundations required for LLM integration, from architecture and vector databases to compliance frameworks. The heart of the article will be a step-by-step methodology showing how to move from problem definition to production-grade deployment.

We will also explore the risks and limitations of LLMs, highlight best practices for responsible and cost-effective integration, and look at future trends such as multi-modal SaaS and agentic architectures. Finally, an FAQ section will address common business and technical questions, ensuring readers leave with both strategic insights and actionable knowledge.

By the end, you will have a clear understanding of how LLMs can transform your SaaS platform, what it takes to implement them successfully, and how to position your business for the AI-first future of software.

2. Understanding LLMs in the Context of SaaS

Large Language Models (LLMs) are not just a technological upgrade to existing NLP systems; they represent a paradigm shift in how software can interact with users, process information, and generate insights. For SaaS providers, where speed of deployment, user experience, and scalability define market competitiveness, understanding LLMs is fundamental to shaping both product strategy and architecture.

What is a Large Language Model?

A Large Language Model is a type of artificial intelligence built using deep learning architectures, primarily the transformer model introduced by Vaswani et al. in 2017. Unlike earlier generations of machine learning models that required manual feature engineering, transformers learn contextual relationships across massive datasets, enabling them to capture the nuances of human language. The defining characteristic of LLMs is their scale: billions or even trillions of parameters trained on diverse corpora spanning books, articles, code, and conversational data.

Evolution from NLP to LLMs

The history of language models can be divided into three phases:

- Rule-Based and Statistical NLP (Pre-2015): Early systems like ELIZA or statistical machine translation relied on handcrafted rules and n-gram probabilities. They were brittle, domain-specific, and unable to handle ambiguity in natural language.

- Deep Learning NLP (2015–2018): Building on word embeddings such as Word2Vec and GloVe, which captured semantic relationships between words, recurrent neural networks (RNNs) such as LSTMs, and later convolutional architectures, learned to model word sequences. However, these models struggled with long-range dependencies and contextual reasoning.

- The Transformer Era (2018–present): The launch of BERT, GPT, and their successors revolutionized NLP. Transformers use attention mechanisms to process entire sequences simultaneously, allowing for context-aware understanding and generation. This gave rise to modern LLMs such as GPT-4, Claude, and LLaMA, which can perform a wide range of tasks without task-specific training.

This evolution matters for SaaS because the capabilities of modern LLMs far surpass those of legacy NLP systems. SaaS providers are no longer limited to predefined workflows or keyword-based search; they can now deliver dynamic, adaptive, and conversational experiences.

Capabilities of LLMs

Modern LLMs bring a wide array of capabilities that make them particularly valuable in SaaS environments:

- Text Generation: The ability to generate coherent, human-like text enables applications such as automated content creation, email drafting, and knowledge base updates.

- Summarization: LLMs can distill lengthy documents or conversations into concise summaries, a feature essential for SaaS platforms in productivity, legal, or healthcare domains.

- Classification: By analyzing context, LLMs can categorize emails, support tickets, or documents into meaningful groups, improving workflow efficiency.

- Reasoning and Problem-Solving: Advanced models can perform multi-step reasoning, answer domain-specific questions, or suggest solutions, pushing SaaS from reactive to proactive service delivery.

- Code Understanding and Generation: For developer-focused SaaS, LLMs can assist with debugging, code completion, and automated documentation.

These capabilities transform SaaS from being purely functional software into intelligent systems capable of adapting to users in real time.

SaaS and AI Convergence

The Software-as-a-Service model is uniquely positioned to benefit from LLM integration. SaaS applications are inherently cloud-native, distributed, and scalable—characteristics that align seamlessly with the requirements of deploying large AI models.

Why SaaS is the Perfect Ecosystem for LLM Integration

- Cloud-Native Architecture: SaaS platforms already rely on scalable cloud infrastructure. This makes it easier to integrate LLM APIs or deploy self-hosted models within existing environments.

- Multi-Tenancy: SaaS providers serve multiple clients simultaneously. LLMs, with their ability to generalize across tasks, can be deployed once and benefit all tenants with minimal customization.

- Data Availability: SaaS systems collect vast amounts of structured and unstructured data (tickets, documents, chat logs). This creates fertile ground for training, fine-tuning, or enhancing LLMs.

- Subscription Economics: SaaS customers are used to paying for premium features. LLM-powered functionality—such as AI assistants or advanced analytics—can be packaged as higher-value subscription tiers, creating new revenue streams.

- Rapid Feature Deployment: The SaaS delivery model allows providers to roll out LLM-driven features quickly, gather feedback, and iterate without requiring users to manage updates.

In other words, SaaS platforms already embody the operational flexibility and business model required to extract value from LLM technology.

Examples of LLM Integration in SaaS

Several high-profile SaaS companies have demonstrated how embedding LLMs can fundamentally enhance user experience:

- Notion AI: Enhances productivity by providing writing suggestions, generating outlines, and summarizing documents directly within the workspace. This transforms Notion from a static note-taking app into an AI-powered knowledge partner.

- Jasper: A SaaS platform entirely built around LLMs, Jasper specializes in AI-powered copywriting. It demonstrates how an LLM can not only improve workflows but also serve as the entire value proposition of a SaaS company.

- Intercom: Uses LLMs to augment its customer support SaaS, automating responses, classifying tickets, and empowering human agents with suggested replies, significantly reducing response times.

- GitHub Copilot: Although often associated with developer tools, Copilot is effectively a SaaS offering powered by LLMs. By embedding code-generation capabilities into development environments, it shows how LLMs can seamlessly integrate into daily workflows.

These examples illustrate the spectrum of adoption: from SaaS platforms where LLMs are a supporting feature to those where LLMs are the product itself.

Key Benefits of LLM Integration for SaaS

While the technical and financial challenges of integration are real, the benefits of embedding LLMs into SaaS are compelling enough to make them a strategic imperative for many providers.

Improved User Experience (UX)

LLMs enable conversational interfaces, personalized guidance, and context-aware responses, significantly enhancing UX. Instead of rigid menus or forms, users interact naturally with SaaS applications, reducing friction. For instance, an LLM embedded in a project management SaaS can automatically draft task descriptions, suggest deadlines, or generate meeting summaries, streamlining collaboration.

Personalization at Scale

Personalization has long been a SaaS differentiator, but traditional approaches rely on rule-based recommendation engines. LLMs elevate personalization by analyzing user interactions in real time and adapting responses accordingly. In an EdTech SaaS, for example, an LLM can tailor learning materials to a student’s progress, while in a CRM SaaS, it can recommend personalized outreach strategies for sales representatives.

Automation of Repetitive Workflows

LLMs reduce the burden of repetitive tasks by automating processes like summarizing support tickets, generating compliance reports, or drafting internal communications. This frees up human talent for higher-value activities and accelerates the pace of operations.

Cost Efficiency vs. Traditional Development

Historically, achieving sophisticated natural language features required large in-house NLP teams, custom datasets, and years of model training. LLMs invert this equation: companies can now integrate advanced capabilities almost instantly through APIs or open-source models, cutting both time-to-market and upfront costs. While ongoing usage fees and infrastructure costs remain a concern, they are often outweighed by the reduction in development overhead and the new revenue opportunities created.

Competitive Differentiation

In crowded SaaS markets, differentiation is difficult. By embedding LLMs, providers can stand out with features that competitors may lack—whether it’s real-time document summarization, proactive customer support, or intelligent search. As customer expectations evolve toward AI-enhanced interactions, having LLM-driven features is rapidly shifting from optional to essential.

The convergence of LLMs and SaaS is not incidental; it is structural. SaaS platforms provide the perfect infrastructure, economic model, and user base to take advantage of LLM capabilities. From improving user experiences to enabling entirely new business models, LLM integration is reshaping what SaaS can deliver. Understanding the foundations of LLM technology, the nature of SaaS, and the benefits they unlock is the first step toward successful adoption.

3. Identifying Use Cases for LLMs in SaaS

The versatility of Large Language Models lies in their ability to generalize across multiple tasks with minimal reconfiguration. For SaaS providers, this flexibility translates into a wide array of practical applications that can enhance productivity, reduce costs, and create competitive differentiation. While the exact use cases vary by industry, several categories have emerged as the most impactful in real-world deployments.

Customer Support Automation

One of the most immediate and popular applications of LLMs in SaaS is customer support automation. Traditional chatbots often rely on predefined decision trees, limiting their ability to handle nuanced questions or unexpected user input. LLM-powered systems, by contrast, offer dynamic, context-aware conversations that mimic human support agents.

- AI Chatbots: LLMs can power conversational interfaces capable of handling many Tier 1 support inquiries, from password resets to product troubleshooting. By integrating into SaaS help desks, these bots reduce support team workload and allow human agents to focus on complex issues. For example, Intercom’s Fin AI shows how generative models can substantially improve customer response times.

- Ticket Triage: Beyond answering queries directly, LLMs can analyze incoming support requests, classify them by urgency or topic, and route them to the appropriate teams. This intelligent triage minimizes backlog and ensures that critical issues receive timely attention.

- Knowledge Base Augmentation: Instead of users needing to search through static FAQs, LLMs can surface the most relevant information instantly. A SaaS platform can combine an internal knowledge base with an LLM to provide conversational, context-aware answers, increasing customer self-service adoption rates.
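One common way to implement ticket triage is to constrain the model to a fixed label set and validate its reply before routing. The sketch below assumes a generic `call_llm(prompt) -> str` function (hypothetical, standing in for any hosted API or self-hosted model); the label names and queue names are illustrative placeholders, not a recommended taxonomy.

```python
from typing import Callable

# Illustrative label -> queue mapping; a real deployment would use its own taxonomy.
ROUTES = {
    "billing": "finance-queue",
    "bug": "engineering-queue",
    "account_access": "tier1-queue",
    "feature_request": "product-queue",
}
FALLBACK_QUEUE = "human-review-queue"


def build_triage_prompt(ticket_text: str) -> str:
    """Ask the model for exactly one label from the allowed set."""
    labels = ", ".join(ROUTES)
    return (
        f"Classify the support ticket into exactly one of: {labels}.\n"
        "Reply with the label only.\n\n"
        f"Ticket:\n{ticket_text}"
    )


def triage(ticket_text: str, call_llm: Callable[[str], str]) -> str:
    """Return the queue for a ticket; any unexpected model output goes to a human."""
    raw = call_llm(build_triage_prompt(ticket_text))
    label = raw.strip().lower().rstrip(".")
    return ROUTES.get(label, FALLBACK_QUEUE)


# Example with a stubbed model reply:
print(triage("I was charged twice this month", lambda prompt: "Billing."))  # finance-queue
```

Validating the reply against a closed set means a malformed or chatty answer falls back to human review instead of silently misrouting a ticket.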

For SaaS businesses, effective customer support is directly linked to retention. By reducing churn and support costs, LLM-powered automation directly impacts the bottom line.

Intelligent Search and Retrieval

Search is another area where SaaS applications often struggle. Traditional keyword-based search functions rely on exact matches and indexing, which fail to capture the semantic meaning of queries. This creates friction for users who expect intuitive, natural-language interactions.

- Semantic Search vs. Keyword Search: LLMs transform search by moving beyond literal keyword matching. Instead, they interpret user intent and context. For example, in a project management SaaS, a user searching for “budget summary for Q2 marketing” could receive a synthesized answer even if no document uses those exact terms.

- Retrieval-Augmented Generation (RAG): RAG architectures combine an LLM with a vector database. The database retrieves relevant context from the SaaS platform’s knowledge stores (tickets, contracts, meeting notes), which the LLM then uses to generate precise answers. Grounding answers in retrieved sources reduces hallucinations, although it does not eliminate them.

Inside SaaS platforms, RAG is particularly powerful. An HR SaaS could use it to answer “What is our parental leave policy in Germany?” by retrieving the exact policy documents. Similarly, a legal SaaS could summarize precedent cases based on stored documents.
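The RAG flow can be sketched in a few functions: embed the query, rank stored documents by similarity, and place the top matches into a prompt that tells the model to answer only from that context. To keep the example self-contained, `embed` is a toy word-count vector standing in for a real embedding model, the documents are hypothetical, and the final model call is omitted.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: dict[str, str], k: int = 2) -> list[str]:
    """Return the ids of the k documents most similar to the query."""
    q = embed(query)
    return sorted(docs, key=lambda doc_id: cosine(q, embed(docs[doc_id])), reverse=True)[:k]


def build_grounded_prompt(query: str, docs: dict[str, str], k: int = 2) -> str:
    """Put retrieved passages, tagged with their ids, ahead of the question."""
    context = "\n".join(f"[{doc_id}] {docs[doc_id]}" for doc_id in retrieve(query, docs, k))
    return (
        "Answer using only the context below and cite the ids you used. "
        "If the answer is not in the context, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


# Hypothetical HR knowledge base
docs = {
    "hr-de-leave": "Parental leave policy for employees based in Germany.",
    "hr-us-pto": "Paid time off policy for employees based in the United States.",
    "it-vpn": "How to install and configure the VPN client.",
}
print(retrieve("What is our parental leave policy in Germany?", docs, k=1))  # ['hr-de-leave']
```

Asking the model to cite document ids makes it easier to show users where an answer came from and to spot answers that are not supported by the retrieved context.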

Intelligent retrieval not only improves user satisfaction but also turns SaaS platforms into trusted single sources of truth, reducing time wasted in manual searches.

Workflow Automation

Workflows are at the heart of most SaaS platforms. From reporting to task management, many repetitive processes can be augmented—or fully automated—through LLM integration.

- Report Generation: SaaS products in analytics or business intelligence can leverage LLMs to generate narrative reports automatically from raw data. For instance, a marketing SaaS might produce weekly campaign performance summaries in plain language, saving managers hours of manual work.

- Document Drafting: SaaS applications that involve drafting proposals, contracts, or technical documents can embed LLMs to auto-generate initial drafts. Users can then refine these drafts, turning a multi-hour process into minutes.

- Scheduling Assistance: For SaaS platforms handling calendars or project planning, LLMs can propose optimal schedules based on team availability, task priorities, or external constraints. They can even interact with users conversationally to negotiate meeting times or project deadlines.

By embedding LLMs at key workflow touchpoints, SaaS companies can dramatically increase productivity for end users while offering premium automation features that justify higher subscription tiers.

Personalization and Recommendations

Modern SaaS users expect tailored experiences, and personalization has long been a critical differentiator. LLMs enhance personalization by interpreting user behavior in real time and adapting outputs accordingly.

- AI-Powered Onboarding: LLMs can guide new users through personalized onboarding journeys. Instead of generic tutorials, users receive contextual explanations and proactive suggestions. A SaaS in the HR space, for example, could use an LLM to tailor the onboarding experience for recruiters vs. payroll managers.

- Contextual Product Recommendations: In SaaS platforms with large feature sets, LLMs can analyze how a user interacts with the product and suggest underutilized features. For instance, in a CRM SaaS, an LLM could recommend pipeline automation to a sales team that repeatedly performs manual lead assignments.

- Adaptive Content and Communication: In EdTech SaaS, learning paths can be adjusted dynamically based on a student’s progress. In marketing SaaS, LLMs can generate personalized campaign recommendations for each client based on their industry and past performance.

This kind of personalization creates stickier SaaS products. Users feel as though the platform understands their unique needs, which strengthens adoption and reduces churn.

Domain-Specific SaaS Examples

While the above use cases apply broadly, the most compelling value often emerges in domain-specific SaaS applications. Different industries face unique challenges where LLMs can deliver outsized benefits.

- Healthcare SaaS

In healthcare, compliance and patient safety are paramount. Deployed within a HIPAA-compliant environment, LLMs can serve as virtual assistants that help clinicians summarize patient notes, draft discharge instructions, or retrieve relevant guidelines without exposing sensitive data to third-party APIs. For patient-facing SaaS, LLMs can power chatbots that explain conditions or treatment plans in plain language, improving health literacy and patient engagement.

- Fintech SaaS

In financial technology, precision and compliance are critical. LLMs can automate compliance reporting, generating structured reports from raw transactional data. They can also assist in fraud detection support, where they analyze user conversations or transaction notes to identify suspicious patterns. SaaS companies in fintech must implement strong guardrails, but when done properly, LLMs can improve both efficiency and regulatory adherence.

- EdTech SaaS

In education, personalization is the holy grail. LLMs can function as AI tutors, providing one-on-one support at scale. They can answer student questions, adapt teaching material to learning pace, and generate practice quizzes on demand. Additionally, LLMs can help instructors by automating assessment feedback, turning raw test scores into personalized recommendations for students. This significantly enhances the value of EdTech SaaS while reducing teacher workload.

The use cases outlined above demonstrate that LLM integration is not theoretical—it is already happening across SaaS categories and industries. From customer support and intelligent search to personalized recommendations and industry-specific applications, LLMs are reshaping how SaaS platforms deliver value. For providers, the challenge is not whether to adopt LLMs, but how to prioritize the use cases that align most closely with customer pain points and business strategy.

4. Technical Foundations of LLM Integration

Integrating Large Language Models (LLMs) into SaaS applications is not only a matter of identifying the right use case—it requires thoughtful technical planning. Decisions around model selection, deployment, architecture, and data handling can significantly influence performance, cost, and compliance outcomes. For SaaS companies, which operate at scale and serve diverse customer bases, these foundations are critical for sustainable AI adoption.

Choosing the Right LLM

The first step is selecting which model or family of models to use. The choice between proprietary and open-source models has long-term implications for cost, flexibility, and compliance. For SaaS providers, working with an experienced AI development company can help evaluate these trade-offs and align model selection with business goals.

Proprietary LLMs

Proprietary models, such as OpenAI’s GPT-4, Anthropic’s Claude, and Google’s Gemini, offer high accuracy, advanced reasoning capabilities, and robust support ecosystems. They are delivered via APIs, which allow SaaS providers to integrate cutting-edge AI quickly without managing infrastructure.

- Advantages:

- Best-in-class performance on benchmarks such as MMLU, BIG-bench, and HumanEval.

- Regular updates and improvements handled by the provider.

- Easy integration via REST or gRPC APIs.

- Drawbacks:

- Ongoing costs scale with usage (e.g., per-token billing), which can become unpredictable.

- Limited transparency into training data and fine-tuning options.

- Data compliance concerns, since sensitive inputs are transmitted to third-party servers.

Open-Source LLMs

Open-source alternatives such as Meta’s LLaMA 2, Mistral, Falcon, and RedPajama have gained significant traction. They can be self-hosted on cloud infrastructure or fine-tuned for domain-specific tasks.

- Advantages:

- Greater control over data pipelines and compliance, since everything can run in-house.

- Ability to fine-tune models on proprietary datasets for improved domain performance.

- Potentially lower long-term costs for high-volume applications.

- Drawbacks:

- Require engineering expertise to deploy and scale.

- Higher upfront infrastructure costs.

- Performance may lag behind proprietary models without significant fine-tuning.

Key Considerations

When choosing an LLM, SaaS providers should weigh:

- Latency: API-based proprietary models may introduce higher round-trip delays compared to locally hosted instances.

- Cost: API billing works well for low- to medium-volume usage, while self-hosting may be cheaper for high-volume workloads.

- Compliance: Self-hosted models give greater control over sensitive data, which is vital for SaaS in regulated industries.

- Customization: Open-source models enable fine-tuning for niche use cases, whereas proprietary models typically restrict custom training.
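The cost trade-off can be reasoned about with back-of-the-envelope arithmetic. The sketch below compares usage-based API billing against a flat monthly self-hosting budget. Every price and volume in it is a hypothetical placeholder, not a quote from any provider, and the self-hosting figure is assumed to already include GPUs, operations, and staff time.

```python
def monthly_api_cost(requests_per_month: int, tokens_per_request: int,
                     usd_per_million_tokens: float) -> float:
    """Usage-based bill, assuming one blended per-token rate for input and output."""
    total_tokens = requests_per_month * tokens_per_request
    return total_tokens / 1_000_000 * usd_per_million_tokens


def break_even_requests(self_host_usd_per_month: float, tokens_per_request: int,
                        usd_per_million_tokens: float) -> float:
    """Monthly request volume above which a flat-cost self-hosted deployment is cheaper."""
    usd_per_request = tokens_per_request / 1_000_000 * usd_per_million_tokens
    return self_host_usd_per_month / usd_per_request


# Hypothetical inputs: 1,500 tokens per request, $5 per million tokens,
# and a $6,000/month all-in budget for a self-hosted deployment.
print(monthly_api_cost(200_000, 1_500, 5.0))          # 1500.0
print(round(break_even_requests(6_000, 1_500, 5.0)))  # 800000
```

With these placeholder numbers, 200,000 requests a month cost about $1,500 through the API, and self-hosting only pays off beyond roughly 800,000 requests a month. A real comparison should also account for separate input and output token prices, GPU utilization, and latency targets.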

LLM Deployment Options

Once the model is chosen, SaaS providers must decide how to deploy it. Two primary approaches dominate: API-based integration and self-hosted deployment.

API-Based Integration

This is the simplest option. Providers like OpenAI, Anthropic, and Cohere expose LLMs through APIs that can be integrated directly into SaaS applications.

- Strengths:

- Minimal infrastructure overhead.

- Rapid time-to-market.

- Continuous improvements handled by the vendor.

- Weaknesses:

- Dependency on external uptime and rate limits.

- Data privacy concerns if sensitive information must be shared.

- Pricing risk as usage scales.

For SaaS companies looking to experiment or roll out AI features quickly, API-based integration is often the best starting point.
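Rate limits and transient outages are the most common operational issue with API-based integration, and a standard mitigation is retrying with capped exponential backoff and jitter. The sketch below is provider-agnostic: `RateLimitError` is a hypothetical stand-in for whatever exception your vendor's SDK raises on HTTP 429, and `fn` is any zero-argument callable that performs the request.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimitError(Exception):
    """Hypothetical stand-in for the rate-limit (HTTP 429) error your provider's SDK raises."""


def call_with_backoff(
    fn: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 0.5,
    max_delay: float = 8.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying on rate-limit errors with capped exponential backoff and jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return fn()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of retries: let the caller degrade gracefully or report an error
            delay = min(max_delay, base_delay * 2 ** attempt)
            # Random jitter keeps many clients from retrying in lockstep.
            sleep(delay * random.uniform(0.5, 1.0))
    raise AssertionError("unreachable")
```

Injecting `sleep` keeps the helper testable. When retries are exhausted, the caller can fall back to a second provider or a cached answer instead of failing the user request outright.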

Self-Hosted and Fine-Tuned LLMs

For companies prioritizing compliance, cost control, or customization, self-hosting is an attractive alternative. This approach involves deploying open-source models on cloud providers (AWS, Azure, GCP) or even on dedicated hardware.

- Strengths:

- Full control over data residency and security.

- Ability to fine-tune models on proprietary datasets.

- Cost savings at scale, especially when inference can be optimized with quantization or distillation.

- Weaknesses:

- Requires significant machine learning and DevOps expertise.

- Slower time-to-market compared to API solutions.

- Ongoing maintenance responsibility for scaling, updating, and securing models.

Increasingly, hybrid approaches are emerging where SaaS platforms use proprietary APIs for general-purpose tasks and open-source self-hosted models for compliance-sensitive workflows.
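A hybrid setup usually comes down to a routing decision in front of the models. The sketch below assumes a hypothetical upstream flag (`contains_regulated_data`, for example set by a PII/PHI detector) and a per-tenant list of regulated customers. `vendor_api` and `in_house_model` are placeholder functions, not any vendor's client.

```python
from dataclasses import dataclass
from typing import Callable

ModelFn = Callable[[str], str]  # hypothetical interface: prompt in, completion out


@dataclass
class LLMRequest:
    tenant_id: str
    prompt: str
    contains_regulated_data: bool  # assumed to be set upstream, e.g. by a PII/PHI detector


def route(request: LLMRequest, hosted_api: ModelFn, self_hosted: ModelFn,
          regulated_tenants: frozenset = frozenset()) -> str:
    """Send compliance-sensitive traffic to the in-house model, everything else to the vendor API."""
    if request.contains_regulated_data or request.tenant_id in regulated_tenants:
        return self_hosted(request.prompt)
    return hosted_api(request.prompt)


# Stub backends for illustration only
def vendor_api(prompt: str) -> str:
    return f"[vendor] {prompt}"


def in_house_model(prompt: str) -> str:
    return f"[in-house] {prompt}"


print(route(LLMRequest("acme", "Summarize this memo", False), vendor_api, in_house_model))
print(route(LLMRequest("clinic-42", "Summarize this chart", False), vendor_api, in_house_model,
            regulated_tenants=frozenset({"clinic-42"})))
```

Keeping the decision in one small function makes the compliance rule easy to audit and test, independently of which models sit behind it.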

Architecting the SaaS + LLM Stack

A well-architected stack is essential for integrating LLMs efficiently into SaaS. Unlike traditional SaaS features, LLM-powered workflows often require additional layers of orchestration, retrieval, and monitoring.

Backend Pipelines, APIs, and Microservices

Modern SaaS platforms typically use a microservices architecture. LLMs can be deployed as independent services within this ecosystem, exposing APIs that other services can call. For example, a “text generation service” could handle summarization requests, while a “classification service” handles ticket triage.

Key design considerations include:

- Load balancing: Ensuring LLM services remain responsive under varying workloads.

- Caching: Storing frequent responses or embeddings to reduce redundant calls.

- Rate limiting: Preventing abuse or unexpected cost surges from excessive API calls.
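Caching and rate limiting are typically implemented as a thin layer in front of the LLM service. The sketch below shows one possible shape: a token-bucket limiter (bursts up to `capacity`, refilled at `rate` requests per second) and a small LRU response cache keyed by tenant, model, and prompt. Class and parameter names are illustrative. In production these usually live in a shared store such as Redis rather than in process memory, and exact-match caching mainly pays off for deterministic settings or frequently repeated prompts.

```python
import hashlib
import time
from collections import OrderedDict
from typing import Callable


class TokenBucket:
    """Rate limiter: allows bursts up to `capacity`, refilling at `rate` tokens per second."""

    def __init__(self, capacity: float, rate: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.tokens = capacity
        self.last = clock()

    def allow(self, cost: float = 1.0) -> bool:
        """Consume `cost` tokens if available; otherwise reject the request."""
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


class ResponseCache:
    """Small LRU cache of LLM responses keyed by tenant, model, and exact prompt."""

    def __init__(self, max_entries: int = 1024) -> None:
        self.max_entries = max_entries
        self._data = OrderedDict()  # key -> cached response, least recently used first

    @staticmethod
    def key(tenant_id: str, model: str, prompt: str) -> str:
        # Including tenant_id keeps one tenant's cached answers from being served to another.
        return hashlib.sha256(f"{tenant_id}\x00{model}\x00{prompt}".encode()).hexdigest()

    def get_or_call(self, tenant_id: str, model: str, prompt: str,
                    call: Callable[[str], str]) -> str:
        k = self.key(tenant_id, model, prompt)
        if k in self._data:
            self._data.move_to_end(k)  # mark as most recently used
            return self._data[k]
        result = call(prompt)
        self._data[k] = result
        if len(self._data) > self.max_entries:
            self._data.popitem(last=False)  # evict the least recently used entry
        return result
```

A per-tenant limiter can be kept in a dictionary, for example `buckets.setdefault(tenant_id, TokenBucket(20, 2.0))`, so that one heavy customer cannot exhaust the shared API quota or budget.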

The Role of Vector Databases

LLMs work best when paired with vector databases that store embeddings of documents, conversations, or knowledge bases. Vector databases such as Pinecone and Weaviate, and similarity-search libraries such as FAISS, allow SaaS platforms to:

- Enable semantic search by retrieving the most relevant documents based on vector similarity.

- Power Retrieval-Augmented Generation (RAG), grounding model outputs in factual, up-to-date information.

- Maintain long-term memory for conversational applications.

Without a retrieval layer such as a vector database, LLMs can draw only on what they learned during training, which stops at a fixed cut-off date; this makes outdated or hallucinated responses more likely.
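The core contract of a vector store (upsert embeddings with metadata, then query by similarity with optional filters) can be illustrated with a brute-force in-memory class. This is a hypothetical sketch, not the API of Pinecone, Weaviate, or FAISS, which use approximate nearest-neighbor indexes to scale. The `tenant` filter mirrors the metadata filtering many vector databases offer, which matters for keeping multi-tenant data separated.

```python
import math
from dataclasses import dataclass, field


@dataclass
class Record:
    doc_id: str
    vector: list[float]
    metadata: dict[str, str] = field(default_factory=dict)


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class InMemoryVectorStore:
    """Brute-force illustration of a vector store: upsert embeddings, query by similarity."""

    def __init__(self) -> None:
        self._records: dict[str, Record] = {}

    def upsert(self, record: Record) -> None:
        self._records[record.doc_id] = record  # insert, or overwrite an existing id

    def query(self, vector: list[float], top_k: int = 3,
              **filters: str) -> list[tuple[str, float]]:
        """Return (doc_id, score) for the top_k records whose metadata matches all filters."""
        candidates = [
            r for r in self._records.values()
            if all(r.metadata.get(key) == value for key, value in filters.items())
        ]
        scored = [(r.doc_id, cosine(vector, r.vector)) for r in candidates]
        scored.sort(key=lambda pair: pair[1], reverse=True)
        return scored[:top_k]


# Hypothetical 2-dimensional embeddings; real embeddings have hundreds or thousands of dimensions.
store = InMemoryVectorStore()
store.upsert(Record("doc-a", [1.0, 0.0], {"tenant": "acme"}))
store.upsert(Record("doc-b", [0.9, 0.1], {"tenant": "globex"}))
store.upsert(Record("doc-c", [0.0, 1.0], {"tenant": "acme"}))
print(store.query([1.0, 0.0], top_k=2, tenant="acme"))  # [('doc-a', 1.0), ('doc-c', 0.0)]
```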

Middleware and Orchestration

To streamline integration, middleware frameworks such as LangChain and LlamaIndex are increasingly popular. These frameworks provide abstractions for:

- Chaining LLM calls together for multi-step reasoning.

- Integrating external tools (databases, APIs) into LLM workflows.

- Managing prompts, memory, and context windows effectively.

By using orchestration frameworks, SaaS providers can build more complex, reliable AI features without reinventing core infrastructure.
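Frameworks such as LangChain and LlamaIndex wrap this pattern in their own abstractions. The dependency-free sketch below is not their API; it only illustrates the underlying idea of chaining, where each step's output is stored in a shared state and fed into later prompt templates. `llm` is a hypothetical prompt-in, text-out callable, and the three-step ticket chain is an example.

```python
from dataclasses import dataclass
from typing import Callable

LLM = Callable[[str], str]  # hypothetical interface: prompt in, completion out


@dataclass
class Step:
    name: str      # key under which this step's output is stored
    template: str  # str.format template that may reference inputs or earlier outputs


def run_chain(steps: list[Step], llm: LLM, **inputs: str) -> dict[str, str]:
    """Run steps in order, feeding each step's output into later prompt templates."""
    state = dict(inputs)
    for step in steps:
        prompt = step.template.format(**state)
        state[step.name] = llm(prompt).strip()
    return state


# Example three-step chain for support tickets
TICKET_CHAIN = [
    Step("summary", "Summarize this support ticket in one sentence:\n{ticket}"),
    Step("team", "Which team should handle this issue? Reply with the team name only.\n{summary}"),
    Step("reply", "Draft a short customer reply from the {team} team based on:\n{summary}"),
]
```

Calling `run_chain(TICKET_CHAIN, llm, ticket=ticket_text)` returns every intermediate output, which is useful for logging and debugging. Production frameworks layer retries, memory, tool calls, and tracing on top of this basic loop.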

Data Management

Data is the lifeblood of any SaaS application, and integrating LLMs raises new considerations for how data is collected, processed, and secured.

Structured vs. Unstructured Data Ingestion

SaaS applications handle both structured data (CRM records, transactions, logs) and unstructured data (emails, chat transcripts, documents). LLMs are particularly powerful with unstructured data, but to maximize their utility, data pipelines must unify both types.

- Structured Data: Can be fed into LLM prompts for contextual grounding or transformed into natural language outputs.

- Unstructured Data: Requires preprocessing such as tokenization, cleaning, and embedding generation before being used effectively by LLMs.
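Feeding structured data into a prompt usually means serializing a whitelisted subset of fields into compact text. The sketch below uses a hypothetical CRM record and field list; passing only selected fields also limits how much sensitive data reaches the model.

```python
import json


def record_to_context(record: dict, fields: list[str]) -> str:
    """Render selected structured fields as 'name: value' lines for prompt grounding."""
    lines = []
    for name in fields:
        value = record.get(name)
        if value is None or value == "" or value == []:
            continue  # skip empty or missing fields to save context-window space
        if isinstance(value, (dict, list)):
            value = json.dumps(value, sort_keys=True)
        lines.append(f"{name}: {value}")
    return "\n".join(lines)


def build_prompt(task: str, record: dict, fields: list[str]) -> str:
    """Combine an instruction with the serialized record."""
    return f"{task}\nUse only the account data below.\n\nAccount data:\n{record_to_context(record, fields)}"


# Hypothetical CRM record
crm_record = {"account": "Acme Corp", "plan": "Pro", "seats": 25, "notes": "", "open_tickets": [101, 102]}
print(record_to_context(crm_record, ["account", "plan", "seats", "notes", "open_tickets"]))
```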

Data Pipelines and Preprocessing

A robust data pipeline ensures that inputs fed into LLMs are accurate and secure. This includes:

- Deduplication and cleaning to prevent model confusion.

- Embedding generation for semantic search.

- Context window optimization (deciding which data to include in prompts).
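The three pipeline steps above can be sketched with the standard library. Assumptions: deduplication here only catches exact duplicates after whitespace and case normalization (near-duplicate detection is out of scope), and word counts approximate token counts, whereas a real pipeline would count tokens with the target model's tokenizer. Chunk sizes and the budget are illustrative.

```python
import hashlib
import re


def normalize(text: str) -> str:
    """Collapse whitespace and lowercase so trivially different copies hash the same."""
    return re.sub(r"\s+", " ", text).strip().lower()


def deduplicate(docs: list[str]) -> list[str]:
    """Drop exact duplicates after normalization, keeping the first occurrence."""
    seen, unique = set(), []
    for doc in docs:
        digest = hashlib.sha256(normalize(doc).encode()).hexdigest()
        if digest not in seen:
            seen.add(digest)
            unique.append(doc)
    return unique


def chunk(text: str, size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into overlapping windows of `size` words (words approximate tokens)."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be in [0, size)")
    words = text.split()
    if not words:
        return []
    step = size - overlap
    return [" ".join(words[i:i + size]) for i in range(0, max(len(words) - overlap, 1), step)]


def pack_context(ranked_chunks: list[str], budget: int) -> list[str]:
    """Greedily keep the highest-ranked chunks that still fit within the word budget."""
    packed, used = [], 0
    for c in ranked_chunks:
        n = len(c.split())
        if used + n <= budget:
            packed.append(c)
            used += n
    return packed
```

Overlapping chunks reduce the chance that an answer is split across a chunk boundary, at the cost of some duplicated text in the index.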

Security, Privacy, and Regulatory Handling

SaaS providers must prioritize compliance frameworks when integrating LLMs:

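- GDPR: Requires a lawful basis (such as consent) for processing personal data and gives users rights to access, correct, and erase it. Personal data passed to third-party APIs, particularly outside the EU/EEA, must rely on an approved transfer mechanism such as Standard Contractual Clauses.

- HIPAA: For healthcare SaaS, Protected Health Information (PHI) may only be shared with vendors that sign a Business Associate Agreement (BAA), and it must be protected with safeguards such as encryption. When an API provider will not sign a BAA, self-hosting often becomes the practical option.

- SOC 2: An audit framework under which an independent auditor evaluates a SaaS company’s controls over security, availability, processing integrity, confidentiality, and privacy.

- Data Residency Laws: Some jurisdictions require certain data to stay within their borders, and the EU restricts transfers of personal data outside the EU/EEA. These rules influence whether, and in which regions, proprietary APIs can be used.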

Mitigation strategies include anonymization, encryption, and adopting a zero-trust architecture where LLM services never persist sensitive inputs.
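Anonymization can start as a pseudonymization step that runs before any text leaves your infrastructure: sensitive values are swapped for placeholders, the mapping stays in-house, and the originals are restored in the model's reply. The regular expressions below are deliberately simple assumptions; they will miss many formats and over-match others (some dates, for example), so production systems generally rely on dedicated PII-detection tooling.

```python
import re

# Simplistic, illustrative patterns; not a complete PII detector.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def pseudonymize(text: str) -> tuple[str, dict[str, str]]:
    """Replace matches with placeholders like [EMAIL_1]; return the text and an in-house mapping."""
    mapping: dict[str, str] = {}
    for label, pattern in PATTERNS.items():
        counter = 0

        def substitute(match: re.Match) -> str:
            nonlocal counter
            counter += 1
            placeholder = f"[{label}_{counter}]"
            mapping[placeholder] = match.group(0)
            return placeholder

        text = pattern.sub(substitute, text)
    return text, mapping


def restore(text: str, mapping: dict[str, str]) -> str:
    """Re-insert the original values into the model's output before showing it to the user."""
    for placeholder, original in mapping.items():
        text = text.replace(placeholder, original)
    return text


redacted, mapping = pseudonymize("Contact jane.doe@example.com or +1 (555) 010-2030.")
print(redacted)  # Contact [EMAIL_1] or [PHONE_1].
```

Only `redacted` is sent to the third-party API; `mapping` never leaves your systems and is used with `restore` on the response.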

Choosing the right LLM, deploying it appropriately, and designing robust architectures and data pipelines are the cornerstones of successful integration. SaaS providers that prioritize compliance, optimize for latency and cost, and leverage supporting tools like vector databases and orchestration frameworks will not only reduce risks but also unlock transformative product features.

By establishing these technical foundations, SaaS companies position themselves to scale AI responsibly and effectively, ensuring that LLM-powered features become long-term assets rather than short-lived experiments.

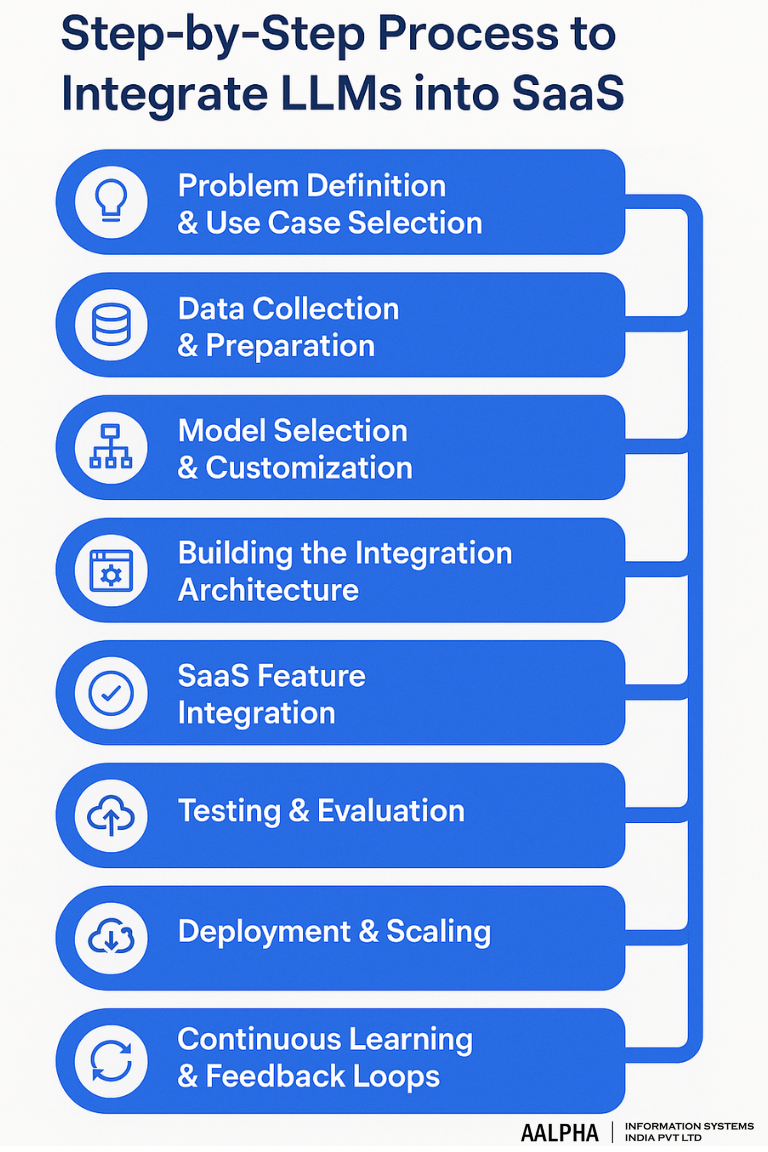

5. Step-by-Step Process to Integrate LLMs into SaaS

Integrating Large Language Models (LLMs) into SaaS applications requires more than plugging in an API. It is a structured, multi-stage process that begins with understanding business needs and ends with continuous refinement in production. Each step—from selecting the right use case to architecting workflows and monitoring performance—determines whether AI will deliver tangible value or become an expensive experiment.

Problem Definition & Use Case Selection

Every successful integration begins with clarity of purpose. Many SaaS companies rush into embedding LLMs because of market hype, but without a clearly defined use case, projects risk misalignment and cost overruns.

- Identify Pain Points: Start by mapping customer journeys and identifying repetitive, high-volume tasks where language is the bottleneck. For example, if a SaaS CRM platform finds that 40% of support tickets relate to repetitive inquiries, an LLM-powered chatbot may be a strong use case.

- Validate Business Value: Ask: Will this integration reduce churn, increase engagement, or create new monetizable features? For instance, GitHub Copilot succeeded not just because of technical novelty but because it directly improved developer productivity—a measurable KPI.

- Scope Early Experiments: Instead of attempting full-scale automation, design pilot projects. For example, a SaaS HR platform might start with an AI assistant that answers benefits-related FAQs before scaling to broader HR policy automation.

Choosing the right use case is about balancing impact vs. feasibility—start with narrow applications that show immediate ROI and scale progressively.

Data Collection & Preparation

LLMs are only as effective as the data they access. For SaaS providers, this means carefully curating and preparing both structured and unstructured data sources.

- Data Mapping: Identify what data is available within your SaaS platform. This may include documents, chat logs, CRM entries, analytics dashboards, or workflow histories.

- Structured vs. Unstructured Data: Structured data (like database records) can be transformed into prompt context, while unstructured data (like contracts or emails) benefits from embedding generation for semantic retrieval.

- Cleaning and Preprocessing: Remove duplicates, anonymize sensitive details, and normalize formats. Poor-quality data leads directly to poor model outputs.

- Data Security: From the outset, compliance must be prioritized. If integrating with third-party APIs, ensure personally identifiable information (PII) or protected health information (PHI) is masked.

Practical example: An EdTech SaaS building an AI tutor should preprocess student assessments and anonymize identifiers before feeding data into LLM pipelines.
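A minimal sketch of masking identifiers before text leaves your platform, assuming simple regular expressions are acceptable as a first pass. The patterns, including the `STU-` student ID format, are hypothetical examples, and `mask_pii`/`unmask` are illustrative names. Regex masking misses many kinds of PII (names, addresses) and can over-match, so treat it as a starting point rather than a compliance control.

```python
from __future__ import annotations

import re

# Illustrative patterns only. They are deliberately broad (the PHONE pattern can
# also catch long digit runs such as dates) and they do not detect names or addresses.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
    "STUDENT_ID": re.compile(r"\bSTU-\d{6}\b"),  # hypothetical internal ID format
}


def mask_pii(text: str) -> tuple[str, dict[str, str]]:
    """Replace matches with numbered placeholders.

    Returns the masked text plus a placeholder -> original mapping that stays
    inside your platform, so values can be restored in the model's response.
    """
    mapping: dict[str, str] = {}
    counter = 0
    for label, pattern in PII_PATTERNS.items():

        def replace(match: re.Match[str]) -> str:
            nonlocal counter
            counter += 1
            placeholder = f"[{label}_{counter}]"
            mapping[placeholder] = match.group(0)
            return placeholder

        text = pattern.sub(replace, text)
    return text, mapping


def unmask(text: str, mapping: dict[str, str]) -> str:
    """Restore original values in text returned by the model."""
    for placeholder, original in mapping.items():
        text = text.replace(placeholder, original)
    return text
```

Because the placeholder mapping never leaves your side, the LLM provider only ever sees tokens like `[EMAIL_1]`.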

Model Selection & Customization

The right LLM must be selected based on requirements such as latency, compliance, and feature complexity. But raw model choice is only the first step—customization is where value is unlocked.

Prompt Engineering

Prompt design dictates the quality of outputs. SaaS developers must craft system prompts (instructions that guide model behavior consistently) and dynamic prompts (contextual instructions fed per request).

- Example: In a FinTech SaaS generating compliance summaries, a system prompt might read: “You are a compliance officer. Always respond with concise, regulation-focused summaries and cite relevant legal references.”

Iterative testing of prompts ensures consistent tone, accuracy, and safety.
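The split between a fixed system prompt and a per-request dynamic prompt can be sketched as follows. This assumes a chat-style API that accepts a list of role/content messages, a convention shared by several providers; `build_messages` is a hypothetical helper, and the policy text in the usage example is made-up sample data. The final instruction line applies the "don't guess" guardrail discussed later in this article.

```python
from __future__ import annotations

# Persistent instructions applied to every request (from the FinTech example above).
SYSTEM_PROMPT = (
    "You are a compliance officer. Always respond with concise, "
    "regulation-focused summaries and cite relevant legal references."
)


def build_messages(question: str, context_snippets: list[str]) -> list[dict[str, str]]:
    """Combine the fixed system prompt with a per-request (dynamic) prompt.

    The role/content message shape follows the convention used by common chat
    APIs; check your provider's SDK for its exact request format.
    """
    numbered = "\n\n".join(f"[{i}] {s}" for i, s in enumerate(context_snippets, start=1))
    dynamic_prompt = (
        f"Source documents:\n{numbered}\n\n"
        f"Question: {question}\n"
        "Cite sources by their [number]. If the documents do not answer the "
        "question, say so rather than guessing."
    )
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": dynamic_prompt},
    ]


if __name__ == "__main__":
    for message in build_messages(
        "Does this account need a KYC review?",
        ["Sample policy: accounts above the risk threshold require KYC review."],
    ):
        print(message["role"], ":", message["content"])
```

Keeping the system prompt in one constant makes it easy to version and to re-test whenever it changes.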

Fine-Tuning vs. Embeddings vs. RAG

- Fine-Tuning: Training the base LLM with proprietary datasets to align it with domain-specific needs. Best for SaaS where responses must follow consistent templates (e.g., legal contract drafting).

- Embeddings: Represent documents and conversations as numerical vectors stored in vector databases. Enables semantic search, ideal for SaaS knowledge bases.

- Retrieval-Augmented Generation (RAG): A hybrid method where the LLM retrieves relevant context from a database before generating a response. This mitigates hallucinations and ensures outputs are grounded in real SaaS data.

For most SaaS providers, RAG strikes the best balance of accuracy and efficiency. Fine-tuning may be considered only after the SaaS has stable workflows and significant proprietary datasets.
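The retrieve-then-generate flow behind RAG can be sketched in a few lines. To stay self-contained, this example substitutes a bag-of-words count vector for a real embedding model and a Python list for a vector database; both are stand-ins, and `embed`, `retrieve`, and `grounded_prompt` are hypothetical names. The ranking step (cosine similarity between query and document vectors) is the same idea a vector database performs at scale.

```python
from __future__ import annotations

import math
from collections import Counter


def embed(text: str) -> Counter[str]:
    # Stand-in for a real embedding model: a bag-of-words count vector.
    # Production systems store dense model embeddings in a vector database.
    return Counter(w.strip(".,?!").lower() for w in text.split())


def cosine(a: Counter[str], b: Counter[str]) -> float:
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    return sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


def grounded_prompt(query: str, docs: list[str], k: int = 2) -> str:
    """Augment the prompt with retrieved context before calling the LLM."""
    context = "\n".join(f"- {d}" for d in retrieve(query, docs, k))
    return (
        "Answer using only the context below. If the answer is not there, say you don't know.\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )
```

The resulting prompt is what gets sent to the LLM; grounding it in retrieved SaaS data is what reduces hallucinations compared with asking the model from memory.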

Building the Integration Architecture

With the use case, data, and model defined, the next step is designing a scalable architecture that connects the LLM to SaaS workflows.

- APIs

If using proprietary models (OpenAI, Anthropic, Cohere), SaaS platforms connect via REST APIs or SDKs. Developers should implement retry logic, error handling, and token usage monitoring to avoid cost overruns.

- Middleware

Frameworks like LangChain or LlamaIndex provide essential abstractions:

- Managing long prompts and context windows.

- Integrating LLMs with external databases or APIs.

- Chaining multi-step reasoning tasks.

- Orchestration Agents

For complex workflows, orchestration agents coordinate multiple LLM calls. For instance, a SaaS legal platform might chain together three LLM steps: retrieve precedents → summarize → draft response.
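The API guidance (retry logic, error handling) and the three-step legal chain above can be sketched together. This is a minimal sketch under stated assumptions: `call_llm` stands in for whatever provider SDK call you use, and `TransientError` is a hypothetical exception type onto which you would map your SDK's timeout and rate-limit errors. In practice the "retrieve precedents" step would usually query a search index or vector database rather than ask the model from memory.

```python
from __future__ import annotations

import random
import time
from typing import Callable


class TransientError(Exception):
    """Stand-in for retryable failures such as timeouts or rate-limit responses."""


def with_retries(
    fn: Callable[[str], str], prompt: str, max_attempts: int = 4, base_delay: float = 0.5
) -> str:
    """Call fn(prompt), retrying transient failures with exponential backoff plus jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return fn(prompt)
        except TransientError:
            if attempt == max_attempts:
                raise  # give up and surface the error to the caller
            delay = base_delay * 2 ** (attempt - 1)  # 0.5s, 1s, 2s, ...
            time.sleep(delay + random.uniform(0, delay / 2))  # jitter spreads out retries
    raise RuntimeError("unreachable")


def legal_pipeline(call_llm: Callable[[str], str], question: str) -> str:
    """Chain three LLM steps: retrieve precedents -> summarize -> draft a response."""
    precedents = with_retries(call_llm, f"List precedents relevant to: {question}")
    summary = with_retries(call_llm, f"Summarize these precedents:\n{precedents}")
    return with_retries(call_llm, f"Draft a response to '{question}' using:\n{summary}")
```

Frameworks like LangChain or LlamaIndex provide their own abstractions for this kind of chaining; the sketch only shows the underlying control flow.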

Microservices Approach

LLM-powered features should be deployed as independent, containerized microservices. This lets SaaS providers scale each feature (e.g., ticket triage vs. summarization) on its own, optimizing both cost and reliability.

SaaS Feature Integration

Embedding LLMs into SaaS must enhance—not disrupt—the user experience. Features should feel natural within existing workflows.

- Chat Interfaces: Customer-facing LLM assistants can be embedded into dashboards as chat widgets. Example: An HR SaaS chatbot that answers “What is our vacation policy?”

- Dashboards: LLMs can generate natural-language summaries directly within analytics dashboards. Example: A marketing SaaS dashboard that auto-summarizes campaign ROI trends.

- Forms and Input Fields: LLMs can assist in real time by suggesting responses, auto-filling data, or reformatting entries. Example: A CRM SaaS that helps sales reps draft personalized follow-up emails.

A principle to follow: augment existing workflows before inventing new ones. Users adopt LLM-powered features more readily when they enhance familiar experiences.

Testing & Evaluation

Rigorous evaluation is critical before rollout. Unlike traditional SaaS features, LLMs require multi-dimensional testing.

- Accuracy: Validate responses against ground truth. For customer support SaaS, this means comparing LLM answers with verified support documentation.

- Latency: Measure response times under load. Users expect SaaS applications to respond within 1–2 seconds; anything slower may cause frustration.

- Hallucinations: Track how often the LLM generates factually incorrect responses. Mitigation strategies include grounding via RAG, tighter prompts, or human-in-the-loop oversight.

- Bias and Safety: Evaluate whether outputs are biased, offensive, or misleading, especially in regulated sectors.

Benchmarking against human responses and gathering structured feedback from pilot users ensures reliability before scaling.
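A small evaluation harness can cover the accuracy and latency checks above. This minimal sketch assumes you have a list of (question, expected answer) pairs drawn from verified documentation, and it treats an answer as correct when it contains the expected text, a crude proxy that will not catch hallucinated extras. `evaluate` is a hypothetical helper name, and the model is any callable you wrap around your LLM.

```python
from __future__ import annotations

import statistics
import time
from typing import Callable


def evaluate(model: Callable[[str], str], cases: list[tuple[str, str]]) -> dict[str, float]:
    """Run (question, expected answer) pairs and report accuracy and latency.

    "Correct" means the expected answer appears in the output (case-insensitive);
    pair this with human review or rubric-based grading for real decisions.
    """
    if not cases:
        raise ValueError("need at least one test case")
    hits = 0
    latencies: list[float] = []
    for question, expected in cases:
        start = time.perf_counter()
        answer = model(question)
        latencies.append(time.perf_counter() - start)
        if expected.lower() in answer.lower():
            hits += 1
    # 95th percentile: last of the 19 cut points that split the data into 20 groups.
    if len(latencies) > 1:
        p95 = statistics.quantiles(latencies, n=20, method="inclusive")[-1]
    else:
        p95 = latencies[0]
    return {
        "accuracy": hits / len(cases),
        "p50_latency_s": statistics.median(latencies),
        "p95_latency_s": p95,
    }
```

Tracking a high percentile rather than the average matters because a few slow responses are what users tend to notice.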

Deployment & Scaling

Moving from pilot to production requires robust deployment strategies.

- Cloud Deployment: Most SaaS companies deploy LLM integrations on major cloud providers like AWS, Azure, or GCP; self-hosted models typically run on GPU-optimized instances (e.g., NVIDIA A100).

- Hybrid Deployment: Compliance-sensitive tasks may require self-hosted models (on private cloud or on-premises) alongside API-based deployments for general-purpose tasks.

- Monitoring: Continuous monitoring of token usage, latency, error rates, and accuracy is critical. MLOps platforms or custom dashboards can provide visibility.

- Cost Management: Token-based pricing can escalate quickly. Tactics include:

- Caching frequent responses.

- Truncating unnecessary context from prompts.

- Using smaller, cheaper models for non-critical tasks.

Scaling is not just about throughput but about maintaining predictable costs and consistent quality as usage grows.
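The caching tactic above can start as an in-memory store keyed on the model name and a normalized prompt. This is a minimal sketch: `ResponseCache` is a hypothetical class, the one-hour TTL is an arbitrary default, and a multi-instance deployment would more likely use a shared store so every app server benefits from the same cache.

```python
from __future__ import annotations

import hashlib
import time
from typing import Callable


class ResponseCache:
    """In-process cache for LLM responses with a time-to-live (no size-based eviction)."""

    def __init__(self, ttl_seconds: float = 3600.0) -> None:
        self.ttl = ttl_seconds
        self._store: dict[str, tuple[float, str]] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        # Collapse case and whitespace so trivially different prompts share an entry.
        # Skip the lowercasing if case changes the meaning of your prompts.
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(f"{model}\x00{normalized}".encode()).hexdigest()

    def get_or_call(self, model: str, prompt: str, call: Callable[[str], str]) -> str:
        key = self._key(model, prompt)
        now = time.monotonic()
        entry = self._store.get(key)
        if entry is not None and now - entry[0] < self.ttl:
            self.hits += 1
            return entry[1]
        self.misses += 1
        response = call(prompt)  # only pay for tokens on a miss
        self._store[key] = (now, response)
        return response
```

Including the model name in the key keeps responses from a small and a large model from being mixed up, and the TTL bounds how stale a cached answer can get.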

Continuous Learning & Feedback Loops

The integration journey doesn’t end at deployment. LLM-powered SaaS features require ongoing refinement.

- User Feedback: Collect structured feedback directly in the UI (e.g., thumbs up/down on chatbot responses). Feed this back into retraining or prompt optimization.

- Human-in-the-Loop (HITL): For critical outputs (e.g., legal or healthcare), involve human reviewers to validate model suggestions before final delivery.

- Model Updates: Proprietary providers frequently update models, which may alter performance. SaaS companies must re-test features after each update.

- Drift Monitoring: Over time, user data and contexts evolve. Monitoring drift ensures that the LLM continues to perform accurately under changing conditions.

The most successful SaaS companies treat LLM integration as a living system—constantly monitored, improved, and aligned with business outcomes.
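Thumbs up/down feedback becomes actionable once it is aggregated per prompt or model version. A minimal sketch, assuming each feedback event records a version label; `Feedback`, `approval_rates`, and `needs_attention` are hypothetical names, and the 80% threshold and 20-vote minimum are placeholder values to tune.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Feedback:
    prompt_version: str  # hypothetical label, e.g. "support-v3"
    thumbs_up: bool


def approval_rates(events: list[Feedback]) -> dict[str, tuple[float, int]]:
    """Map each prompt version to (share of thumbs-up votes, number of votes)."""
    totals = Counter(e.prompt_version for e in events)
    ups = Counter(e.prompt_version for e in events if e.thumbs_up)
    return {v: (ups[v] / n, n) for v, n in totals.items()}


def needs_attention(events: list[Feedback], threshold: float = 0.8, min_votes: int = 20) -> list[str]:
    """Versions with enough votes whose approval rate is below the threshold."""
    return sorted(
        v for v, (rate, n) in approval_rates(events).items() if n >= min_votes and rate < threshold
    )
```

The minimum vote count keeps a handful of early ratings from triggering a prompt rollback.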

The step-by-step process demonstrates that LLM integration is not a one-time engineering task but a strategic, ongoing initiative. By carefully defining problems, preparing data, selecting models, and architecting scalable systems, SaaS companies can embed intelligence into their products responsibly and profitably. From there, continuous testing, monitoring, and feedback ensure that these features remain reliable, cost-effective, and aligned with customer needs.

The result is not just an AI feature bolted onto SaaS, but a SaaS platform that evolves into an AI-native ecosystem—capable of adapting to user needs in real time.

6. Challenges and Risks in LLM Integration

While Large Language Models (LLMs) bring transformative potential to SaaS, their integration is not without significant challenges. Many of these risks stem from the very nature of LLMs: probabilistic systems trained on massive, imperfect datasets and dependent on costly infrastructure. For SaaS providers, which operate in competitive and highly regulated markets, overlooking these issues can result in degraded user experience, spiraling costs, or even compliance failures. Understanding these risks—and planning for mitigation—is essential to building reliable AI-driven SaaS applications.

Data Quality & Bias in Outputs

LLMs are only as good as the data they are trained on. Since most modern LLMs are trained on large-scale internet data, they inherit biases, inaccuracies, and gaps in representation. This can manifest in SaaS platforms in troubling ways:

- Bias in Customer-Facing Outputs: An HR SaaS that uses LLMs to review résumés may unintentionally produce biased assessments, disadvantaging certain demographics.

- Inconsistent Quality: Poorly curated prompts or noisy input data can lead to contradictory outputs across different sessions, undermining user trust.

Mitigation Strategies:

- Use domain-specific fine-tuning to align outputs with industry standards.

- Employ content moderation pipelines that detect and filter harmful outputs before they reach end users.

- Continuously monitor for systemic bias using benchmark datasets.

Bias cannot be eliminated entirely, but SaaS providers can minimize its impact through vigilance and corrective feedback loops.
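For the résumé-screening example, one simple way to monitor for systemic bias with a benchmark dataset is to run a labeled set of candidate profiles through the model and compare selection rates across groups. A minimal sketch, assuming you already have model decisions tagged with a group label; the adverse impact ratio it computes is only one of many fairness metrics, and the function names are hypothetical.

```python
from __future__ import annotations

from collections import Counter


def selection_rates(results: list[tuple[str, bool]]) -> dict[str, float]:
    """results: (group label, whether the model recommended advancing the candidate)."""
    totals = Counter(group for group, _ in results)
    advanced = Counter(group for group, passed in results if passed)
    return {g: advanced[g] / n for g, n in totals.items()}


def adverse_impact(results: list[tuple[str, bool]], threshold: float = 0.8) -> dict[str, float]:
    """Groups whose selection rate falls below `threshold` times the highest group's rate.

    The 0.8 default mirrors the "four-fifths rule" screening heuristic; a flag is
    a prompt for investigation, not proof of bias (nor is its absence proof of fairness).
    """
    rates = selection_rates(results)
    best = max(rates.values(), default=0.0)
    if best == 0:
        return {}
    return {g: r / best for g, r in rates.items() if r / best < threshold}
```

Running this on the same benchmark after every prompt or model change turns bias monitoring into a repeatable regression test.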

Hallucination Risks and Mitigation Strategies

A persistent issue with LLMs is hallucination—the generation of plausible but factually incorrect content. In consumer applications this may be tolerated, but in SaaS, where outputs often drive decisions, hallucinations can be damaging or even dangerous.

- Examples in SaaS:

- A healthcare SaaS chatbot providing inaccurate dosage information.

- A legal SaaS summarizing a nonexistent case precedent.

Mitigation Strategies:

- Adopt Retrieval-Augmented Generation (RAG) to ground outputs in verified SaaS data.

- Implement guardrails in prompts (e.g., instructing models not to guess when uncertain).

- Use confidence scoring to flag outputs that require human review before delivery.

Hallucinations are an inherent limitation of generative AI, making it critical for SaaS providers to combine LLMs with deterministic safeguards.
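Confidence scoring can be sketched as a gate in front of delivery. Some providers can return per-token log probabilities alongside a completion; where they do, their average gives a rough confidence score. This is a minimal sketch: `ModelOutput`, `confidence`, and `route` are hypothetical names, and the 0.7 threshold is a placeholder to calibrate against your own evaluation data.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass
class ModelOutput:
    text: str
    token_logprobs: list[float]  # natural-log probability of each generated token


def confidence(output: ModelOutput) -> float:
    """Geometric-mean token probability, exp(mean log-prob), in [0, 1].

    A heuristic only: a model can be fluent and confident while still wrong,
    so this complements grounding (e.g. RAG) rather than replacing it.
    """
    if not output.token_logprobs:
        return 0.0
    return math.exp(sum(output.token_logprobs) / len(output.token_logprobs))


def route(output: ModelOutput, threshold: float = 0.7) -> str:
    """Deliver confident answers; hold the rest for human review."""
    return "deliver" if confidence(output) >= threshold else "human_review"
```

Treating a missing score as zero confidence means outputs without log probabilities fail safe into the review queue.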

Latency and Scalability Bottlenecks

SaaS users expect fast, responsive interactions. However, large models often struggle with inference speed, especially under heavy workloads. Latency can erode user satisfaction and adoption rates.

Challenges:

- Proprietary APIs introduce round-trip delays, especially if hosted outside the user’s region.

- Self-hosted models may require expensive GPU clusters to achieve low latency at scale.

- Peak demand periods can overwhelm infrastructure, creating service outages.

Mitigation Strategies:

- Implement caching for repeated queries and embedding lookups.

- Use smaller distilled models for less critical tasks while reserving larger LLMs for complex queries.

- Employ autoscaling on cloud platforms (AWS, Azure, GCP) to dynamically adjust resources.

The trade-off is unavoidable: higher accuracy often means slower inference. SaaS providers must balance speed and reliability with intelligent architecture.

Cost Unpredictability (API vs. Self-Hosted)

One of the most cited risks in SaaS-LLM integration is the unpredictability of costs. Proprietary LLM APIs typically bill per token (input + output), which can make expenses volatile as usage scales.

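- Example: A SaaS support chatbot that averages 500 tokens per conversation costs little per exchange, but spend grows in direct proportion to volume and prompt length; once scaled to enterprise customers, and with retrieved context inflating each prompt, monthly bills can grow quickly and become hard to forecast.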

Self-hosting avoids token-based billing but introduces significant upfront infrastructure costs: GPUs, engineering expertise, and ongoing maintenance.
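Because per-token billing is linear in both traffic and prompt size, it helps to model spend before launch. A minimal sketch: `monthly_cost` is a hypothetical helper, and the rates in the usage block (3.00 per million input tokens, 15.00 per million output tokens) are placeholder numbers, not any provider's actual pricing.

```python
from __future__ import annotations


def monthly_cost(
    conversations: int,
    input_tokens: int,
    output_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Estimated monthly API spend for one feature, in the prices' currency.

    Input (prompt) and output (completion) tokens are usually priced separately.
    """
    per_conversation = (
        input_tokens * input_price_per_million + output_tokens * output_price_per_million
    ) / 1_000_000
    return conversations * per_conversation


if __name__ == "__main__":
    # Hypothetical rates: 3.00 per million input tokens, 15.00 per million output tokens.
    for label, prompt_tokens in [("bare prompt", 300), ("with retrieved context", 4_000)]:
        for volume in (10_000, 1_000_000, 10_000_000):
            cost = monthly_cost(volume, prompt_tokens, 200, 3.0, 15.0)
            print(f"{label:>24} | {volume:>10,} conversations/month | {cost:>12,.2f}")
```

Plugging in your provider's real rates and your own traffic forecasts shows how much prompt length, not just conversation count, drives the bill.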

Mitigation Strategies:

- Track usage metrics early with detailed monitoring dashboards.

- Apply prompt optimization to minimize unnecessary tokens.

- Cache responses to reduce redundant API calls.

- Consider hybrid strategies, using APIs for general-purpose tasks and self-hosted models for high-volume or sensitive workloads.

Without cost management, LLM integration can quickly shift from value-add to financial burden.

Compliance and Ethical Concerns

SaaS providers often operate in industries bound by strict regulations. Integrating LLMs introduces complex compliance challenges:

- Data Privacy: Passing personal or sensitive data through third-party APIs may violate GDPR or HIPAA.

- Data Residency: Some jurisdictions require data to remain within geographic boundaries, conflicting with global LLM APIs.

- Explainability: Regulators may demand that AI decisions be interpretable, a weakness of black-box LLMs.

Beyond compliance, ethical concerns—such as transparency in AI usage and the impact on human jobs—can affect brand trust.

Mitigation Strategies:

- Adopt privacy-first design: anonymize or encrypt sensitive data before processing.

- Publish AI usage disclosures in SaaS terms of service.

- Favor self-hosted LLMs for highly regulated industries.

- Establish an AI ethics board or governance framework within the organization.

Compliance is not just a legal obligation; it is a competitive differentiator for SaaS providers looking to win enterprise clients.

Human Oversight in Mission-Critical SaaS

Finally, SaaS companies must recognize that LLMs cannot yet operate autonomously in mission-critical contexts. Over-reliance on AI can expose users to unacceptable risks.

Examples:

- A FinTech SaaS allowing an LLM to auto-approve loan applications without human review.

- A healthcare SaaS using an LLM to recommend treatments without clinician oversight.

While automation is appealing, trust in SaaS platforms depends on accountability. Human-in-the-loop (HITL) mechanisms remain essential in high-stakes workflows.

Mitigation Strategies:

- Implement review checkpoints where human agents validate AI-generated outputs.

- Allow user overrides so customers can correct or reject model outputs.

- Train staff to interpret LLM responses critically rather than blindly relying on them.

This hybrid approach—AI assistance with human validation—strikes the right balance between efficiency and safety.

LLM integration into SaaS is not a frictionless process. Data quality, hallucinations, latency, cost unpredictability, compliance, and the necessity of human oversight represent substantial hurdles. Yet, these challenges are not insurmountable. By recognizing them early and applying structured mitigation strategies—such as retrieval grounding, caching, hybrid deployment, and governance frameworks—SaaS providers can turn potential liabilities into long-term strengths.

The companies that succeed will be those that treat LLMs not as magic solutions but as powerful tools requiring discipline, guardrails, and continuous oversight.

7. Best Practices for Successful LLM Integration

For SaaS providers, adopting Large Language Models (LLMs) is not just a technical upgrade—it is a strategic shift. Poorly designed integrations can frustrate users, create compliance liabilities, or generate unsustainable costs. By contrast, well-planned deployments can enhance user experience, reduce operational burdens, and establish competitive differentiation. The following best practices provide a framework for embedding LLMs into SaaS applications in a way that is user-friendly, cost-effective, and future-proof.

Aligning AI Roadmap with SaaS Strategy

The first principle of successful integration is alignment. LLM adoption should not be a reactive response to market hype but a deliberate extension of the SaaS company’s strategic vision.

- Tie AI to Core Value Propositions: If a SaaS platform differentiates on customer experience, LLMs should enhance personalization or support. If it competes on analytics, LLMs should enrich reporting and insights.

- Define KPIs Early: Establish clear metrics—such as reduced ticket resolution time, higher user retention, or increased upsell rates—that demonstrate the value of LLM features.

- Iterative Roadmapping: Start with narrow use cases that address visible pain points, then expand gradually as data, infrastructure, and user trust mature.

Without this alignment, companies risk building “AI features” that impress in demos but fail to deliver long-term business impact.

User-Centric Design: Embedding LLMs into Workflows

An LLM is valuable only if it integrates seamlessly into how users already interact with the SaaS product. The goal should be to augment familiar workflows, not introduce entirely new paradigms that confuse users.

- Contextual Integration: Place LLM features within the natural flow of work. For example, in a project management SaaS, an LLM should suggest task descriptions directly in the task creation form, not in a separate interface.

- Progressive Disclosure: Begin with subtle assistance—such as suggested replies or summaries—and expand to more proactive automation as users build trust.

- User Feedback Loops: Allow users to rate or correct outputs directly. This not only improves engagement but also creates valuable training data for refining prompts or fine-tuning.

SaaS success depends on usability. Treating the LLM as a “copilot” that enhances user workflows ensures adoption without resistance.

Guardrails: System Prompts, Fine-Tuning, and Feedback

LLMs are probabilistic and prone to errors if left unconstrained. Effective guardrails are essential for ensuring safe, consistent, and reliable outputs.

- System Prompts: Use persistent instructions to define tone, style, and boundaries. Example: “Always answer in a professional, concise manner and cite relevant internal documentation when possible.”

- Fine-Tuning: For domain-specific SaaS, fine-tuning the model on proprietary datasets aligns it more closely with industry terminology and reduces variance.

- Feedback Integration: Create mechanisms for continuous improvement. If a customer flags an inaccurate chatbot response, that feedback should flow into prompt refinement or retraining pipelines.

Guardrails transform LLMs from “black boxes” into predictable, trusted components of SaaS platforms.

Hybrid Models: When to Mix Rules-Based Logic with LLMs

Not every task requires a generative model. In many cases, rules-based logic or deterministic workflows remain more efficient, reliable, and explainable.

- Simple Tasks: Password resets, status updates, and data validation should use rule-based logic. LLMs are overkill here.

- Complex, Contextual Tasks: Summarizing documents, answering nuanced questions, or generating drafts are better suited to LLMs.

- Fallback Systems: When an LLM is uncertain (low confidence score), the system should default to deterministic responses or escalate to human review.

This hybrid approach ensures SaaS platforms balance innovation with reliability, avoiding both unnecessary complexity and user frustration.
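The hybrid pattern above (rules for simple intents, an LLM for open-ended ones, and a fallback when the model is unsure) can be sketched as a small router. The regex rules and reply texts are illustrative placeholders, and `llm` is a hypothetical callable assumed to return a (text, confidence) pair; how you obtain that confidence depends on your provider and evaluation setup.

```python
from __future__ import annotations

import re
from typing import Callable

# Deterministic replies for simple, well-defined intents. Patterns and reply
# text are illustrative; real products would route to actual workflows.
RULES: list[tuple[re.Pattern[str], str]] = [
    (re.compile(r"\b(reset|forgot)\b.*\bpassword\b", re.IGNORECASE),
     "You can reset your password from your account's security settings."),
    (re.compile(r"\b(status|outage|down)\b", re.IGNORECASE),
     "Live service status is available on our status page."),
]

FALLBACK = "I'm not certain about this one, so I've passed it to our support team."


def answer(query: str, llm: Callable[[str], tuple[str, float]], min_confidence: float = 0.7) -> str:
    """Rules first, the LLM for open-ended queries, and a fallback when the LLM is unsure."""
    for pattern, reply in RULES:
        if pattern.search(query):
            return reply  # no tokens spent on simple, predictable requests
    text, confidence = llm(query)
    return text if confidence >= min_confidence else FALLBACK
```

Checking rules first keeps simple requests fast, cheap, and explainable, while the fallback turns low-confidence answers into escalations instead of guesses.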

Cost Optimization: Batching, Caching, and Quantization

One of the most common pitfalls in SaaS-LLM integration is runaway costs. Token-based billing for proprietary APIs or GPU requirements for self-hosted models can quickly erode margins. Proactive cost management is non-negotiable.

- Batching: Combine multiple small requests into a single API call to reduce token overhead.

- Caching: Store frequent queries and embeddings in memory or vector databases to prevent repetitive computation.

- Prompt Optimization: Eliminate unnecessary context in prompts to reduce token counts.

- Model Quantization: For self-hosted LLMs, compressing models into lower-precision formats (e.g., INT8) reduces compute costs while maintaining acceptable accuracy.

- Tiered Models: Use smaller models for low-stakes tasks and reserve large LLMs for high-value workloads.
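To show what compressing a model into INT8 means numerically, here is a minimal sketch of symmetric per-tensor quantization: each FP32 weight (4 bytes) becomes one signed byte plus a scale factor shared by the tensor. Real toolkits typically quantize per channel, calibrate activations, and run dedicated INT8 kernels; this sketch only illustrates the arithmetic, and the function names are hypothetical.

```python
from __future__ import annotations


def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor INT8 quantization: each w is approximated by scale * q."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the symmetric INT8 range.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    return [v * scale for v in q]


if __name__ == "__main__":
    w = [0.5, -1.27, 0.0, 1.0]
    q, s = quantize_int8(w)
    restored = dequantize(q, s)
    print(q, f"scale={s:.4f}")
    print("max abs error:", max(abs(a - b) for a, b in zip(w, restored)))
```

The rounding error per weight is at most half a quantization step, which is why accuracy usually stays acceptable while memory per weight drops fourfold relative to FP32.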

By treating cost optimization as a design principle, SaaS providers ensure scalability does not come at the expense of profitability.

MLOps for SaaS: Monitoring Drift, Scaling Responsibly

Deploying an LLM is not the finish line—it is the beginning of a continuous lifecycle. SaaS companies must adopt MLOps practices to keep LLM-powered features reliable and relevant.

- Model Drift Monitoring: Over time, data distributions and user behavior shift. Monitoring drift ensures that the LLM continues to produce accurate results.

- Performance Dashboards: Track latency, token usage, error rates, and user satisfaction through centralized dashboards.

- Automated Retraining Pipelines: For SaaS with dynamic data (e.g., support tickets, contracts), periodic retraining ensures the LLM remains aligned with evolving contexts.

- Controlled Scaling: Scale infrastructure gradually to manage costs and avoid overwhelming cloud resources. Autoscaling clusters on AWS, Azure, or GCP can adjust GPU resources dynamically.

- Compliance Auditing: Regular audits ensure outputs and data handling remain compliant with frameworks like GDPR, HIPAA, and SOC 2.

Adopting MLOps ensures that LLM integration matures from a pilot experiment into a sustainable, enterprise-grade feature.
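One common way to quantify drift is the Population Stability Index (PSI), which compares how a categorical feature was distributed in a baseline window against a recent one. A minimal sketch, assuming user queries have already been tagged with a topic (for example by a classifier); the topic labels are hypothetical, and the 0.1 and 0.25 cut-offs are widely used rules of thumb rather than universal standards.

```python
from __future__ import annotations

import math
from collections import Counter


def psi(baseline: list[str], current: list[str], eps: float = 1e-4) -> float:
    """Population Stability Index between two categorical samples.

    PSI = sum over categories of (cur_share - base_share) * ln(cur_share / base_share).
    `eps` floors shares so categories missing from one sample do not divide by zero.
    """
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")
    base, cur = Counter(baseline), Counter(current)
    total = 0.0
    for category in base.keys() | cur.keys():
        b = max(base[category] / len(baseline), eps)
        c = max(cur[category] / len(current), eps)
        total += (c - b) * math.log(c / b)
    return total


def drift_level(score: float) -> str:
    # Rules of thumb; tune per feature and traffic volume.
    if score < 0.1:
        return "stable"
    if score < 0.25:
        return "moderate drift"
    return "significant drift"
```

A rising PSI on query topics is a signal to re-run evaluations and revisit prompts or retrieval sources before accuracy visibly degrades.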

Best practices for LLM integration extend beyond technical decisions—they require aligning strategy, prioritizing user experience, and building safeguards against risks. By embedding AI features thoughtfully into workflows, setting clear guardrails, balancing LLMs with rules-based systems, optimizing costs, and maintaining MLOps discipline, SaaS providers can build AI-driven platforms that are both innovative and resilient.

In an environment where customer expectations are rising and competitors are racing to add AI features, these practices are not optional—they are essential to ensuring that LLM integration becomes a long-term competitive advantage rather than a short-lived novelty.

8. Future of LLMs in SaaS

The integration of Large Language Models (LLMs) into SaaS is only the beginning of a broader transformation in how cloud software is conceived, built, and delivered. Over the next decade, LLMs will evolve from being embedded features to becoming the foundation of new SaaS paradigms. Companies that anticipate these shifts will not only stay competitive but also redefine their industries.

The Rise of Agentic SaaS Platforms

The next wave of SaaS will likely move from AI-enhanced workflows to AI-driven agents that act autonomously within software environments. Instead of a static chatbot or summarizer, SaaS applications will include multi-step “agents” that can reason, plan, and execute tasks across interconnected services. This shift highlights the importance of integrating AI agents into a SaaS platform, enabling businesses to deliver proactive, intelligent automation at scale.

For example:

- In project management SaaS, an AI agent could proactively assign tasks, monitor deadlines, and escalate risks without user prompts.

- In HR SaaS, agents could conduct onboarding by scheduling meetings, setting up accounts, and generating compliance documents automatically.

- In customer support SaaS, autonomous agents could not only resolve tickets but also identify emerging issues, update documentation, and alert product teams.

This agentic approach will transform SaaS from being reactive (waiting for user input) to proactive (anticipating user needs). It also opens opportunities for SaaS companies to monetize AI-powered automation layers as premium subscriptions.

Open-Source LLM Adoption vs. Closed APIs

The tension between open-source and proprietary models will define SaaS adoption strategies in the coming years.

- Closed APIs (e.g., OpenAI, Anthropic, Google): These will continue to dominate early adoption due to ease of integration, state-of-the-art performance, and enterprise support. SaaS companies that prioritize rapid feature rollouts will likely prefer this path.

- Open-Source Models (e.g., LLaMA, Mistral, Falcon): These will gain traction among SaaS providers focused on compliance, customization, and cost control. Open-source LLMs allow for fine-tuning, on-premises hosting, and greater transparency in sensitive industries like healthcare, finance, or defense.

- Hybrid Future: Many SaaS providers will adopt hybrid strategies, using proprietary APIs for general-purpose tasks while relying on open-source models for domain-specific or compliance-sensitive functions.

As infrastructure and tooling for open-source models mature (through platforms like Hugging Face or specialized LLMOps services), their share of SaaS adoption will expand significantly.

The Rise of Multi-Modal SaaS

Today’s SaaS applications largely focus on text and structured data. But the future lies in multi-modal integration, where LLMs process and generate across text, images, audio, and video.

- Text + Images: Design SaaS platforms may embed LLMs that can generate marketing copy alongside AI-driven visuals, unifying creative workflows.

- Text + Voice: Customer service SaaS could use LLMs to power intelligent voice assistants that handle phone calls, reducing call center dependency.

- Text + Video: EdTech SaaS may deliver interactive lessons where LLMs generate personalized transcripts, questions, and even real-time video explanations.

- Enterprise Applications: Multi-modal SaaS platforms will allow users to query across data formats seamlessly—asking “Summarize the key points from this sales video and generate an email draft for follow-up.”

Multi-modal capabilities will move SaaS beyond productivity into immersive, adaptive digital ecosystems.

Predictions for the Next 5–10 Years

Looking forward, several trends are likely to define the trajectory of LLMs in SaaS:

- LLMs as Core Infrastructure: Just as cloud computing became a standard, LLMs will evolve into a core layer of SaaS architecture, powering search, personalization, and automation by default.

- Domain-Specific Small Models: Rather than relying only on giant general-purpose models, SaaS companies will increasingly deploy smaller, fine-tuned models tailored to specific domains. These “specialist” models will be cheaper, faster, and more reliable for focused tasks.

- AI-Native SaaS Companies: Entire SaaS platforms will be built around LLM capabilities rather than bolting them on. Jasper is an early example, but expect SaaS startups in healthcare, logistics, and education that exist solely because LLMs enable their business model.

- Standardized AI Compliance Frameworks: Just as SOC 2 became a baseline for SaaS, new compliance certifications will emerge specifically for AI-powered applications, ensuring responsible data use, transparency, and bias monitoring.

- Agent Ecosystems: Instead of single SaaS applications, we may see ecosystems of interoperable AI agents spanning multiple SaaS platforms. For instance, a finance agent in an ERP SaaS might coordinate directly with a supply chain agent in a logistics SaaS to optimize business operations.

- User Expectation Shift: Over time, AI features will no longer be considered “premium.” They will become baseline expectations, just as mobile access or cloud syncing did in earlier SaaS generations. Providers that fail to evolve will struggle to compete.

The future of LLMs in SaaS is not about incremental improvements but about structural transformation. Agentic SaaS platforms will redefine workflows, open-source adoption will reshape deployment strategies, and multi-modal capabilities will broaden what SaaS can deliver. Over the next decade, the distinction between “SaaS” and “AI-driven SaaS” will disappear—because every SaaS will, in some form, become an AI-native platform.

For SaaS providers, the question is not whether to integrate LLMs, but how quickly they can adapt to ensure relevance in this accelerating AI-first landscape.

Conclusion

Large Language Models are no longer experimental technologies—they are becoming embedded infrastructure for the next generation of SaaS. What makes them transformative is not their ability to generate text in isolation, but their capacity to reshape the way software is designed, delivered, and experienced. SaaS is fundamentally about scalability, accessibility, and adaptability, and LLMs amplify each of these qualities by making products smarter, more responsive, and more aligned with human interaction.

The companies that will thrive in the coming decade are those that view LLMs not as bolt-on features but as a design philosophy. Integrating them into SaaS requires careful attention to architecture, compliance, and cost management, yet the rewards are equally substantial: SaaS products that anticipate user needs, automate repetitive work, and deliver adaptive, personalized outcomes at scale. The challenge is not about whether to implement LLMs but about how to do so responsibly, sustainably, and in ways that align with real user problems.

At Aalpha Information Systems, we help SaaS businesses translate this potential into working reality. With decades of experience in building scalable software platforms and deep expertise in AI, we design solutions that move beyond surface-level integrations. Whether it is orchestrating Retrieval-Augmented Generation pipelines, fine-tuning domain-specific models, or embedding AI features seamlessly into SaaS workflows, our focus is on building systems that are technically sound, compliant with global regulations, and optimized for business outcomes.

What sets Aalpha apart is our ability to balance technical precision with strategic guidance. We work with SaaS providers to identify the use cases that matter most, architect infrastructures that scale predictably, and deploy AI features that enhance rather than disrupt the user experience. Our teams understand that AI integration is as much about governance, cost control, and security as it is about algorithms. This is why SaaS companies across industries trust us to build platforms that are both innovative and resilient.

As the SaaS market evolves toward an AI-first future, the question is how your platform will differentiate itself. With Aalpha as your technology partner, you gain not only cutting-edge engineering capabilities but also a strategic framework to ensure your AI investments deliver measurable value. The next generation of SaaS will be defined by intelligence, adaptability, and trust, and we are here to help you build it.

FAQs

What are the costs of integrating LLMs into SaaS?

Costs depend on the deployment model. API-based models (from providers such as OpenAI and Anthropic) are quick to adopt but bill per token, so expenses scale directly with usage. Self-hosted models (such as LLaMA or Mistral) require GPU infrastructure and ML expertise, but their costs are more predictable at scale. SaaS companies should also budget for integration, monitoring, and compliance.
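A rough break-even calculation makes the trade-off concrete. The sketch below compares linear per-token billing with a mostly fixed self-hosting cost. Every number in it (a $5-per-million-token API price, 1,500 tokens per request, one GPU at $2/hour for a 720-hour month, $3,000 of monthly operations cost) is an illustrative assumption, not a quote from any provider.

```python
# Break-even sketch for API vs self-hosted LLM costs.
# All prices and volumes are hypothetical placeholders.


def monthly_api_cost(requests_per_month: int, tokens_per_request: int,
                     price_per_million_tokens: float) -> float:
    """Per-token billing: cost grows linearly with usage."""
    total_tokens = requests_per_month * tokens_per_request
    return total_tokens / 1_000_000 * price_per_million_tokens


def monthly_self_hosted_cost(gpu_hours: float, price_per_gpu_hour: float,
                             fixed_ops_cost: float) -> float:
    """Self-hosting: mostly fixed GPU and operations cost, independent of request count."""
    return gpu_hours * price_per_gpu_hour + fixed_ops_cost


def break_even_requests(self_hosted_cost: float, tokens_per_request: int,
                        price_per_million_tokens: float) -> float:
    """Monthly request volume at which both options cost the same."""
    cost_per_request = tokens_per_request / 1_000_000 * price_per_million_tokens
    return self_hosted_cost / cost_per_request


if __name__ == "__main__":
    self_hosted = monthly_self_hosted_cost(gpu_hours=720, price_per_gpu_hour=2.0,
                                           fixed_ops_cost=3000.0)
    for requests in (10_000, 100_000, 1_000_000):
        api = monthly_api_cost(requests, tokens_per_request=1_500,
                               price_per_million_tokens=5.0)
        cheaper = "API" if api < self_hosted else "self-hosted"
        print(f"{requests:>9,} requests: API ${api:,.2f} vs "
              f"self-hosted ${self_hosted:,.2f} -> {cheaper}")
    print(f"break-even: ~{break_even_requests(self_hosted, 1_500, 5.0):,.0f} requests/month")
```

With these assumed figures the API is cheaper below roughly 592,000 requests per month and self-hosting wins above it. A real comparison should also account for engineering time, redundancy, and how fully the GPUs are actually utilized.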

Can small SaaS startups adopt LLMs affordably?

Yes. Startups can begin with API-first integrations to validate demand, limit token usage through caching and narrow features, and later migrate to hybrid or self-hosted setups. This approach reduces upfront investment while allowing quick market entry.
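Caching is one of the cheapest ways to cut token spend. The following is a minimal in-memory sketch, assuming a hypothetical `call_model(model, prompt)` function that stands in for whichever provider SDK is used. It keys responses on a hash of the model name and a whitespace-normalized prompt, so it only helps when users send effectively identical prompts; production setups would usually add expiry and a shared store.

```python
import hashlib
from typing import Callable, Dict


class PromptCache:
    """Exact-match response cache for LLM calls (illustrative sketch).

    `call_model` is a hypothetical stand-in for a real provider SDK call.
    """

    def __init__(self, call_model: Callable[[str, str], str]):
        self._call_model = call_model
        self._store: Dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        # Collapse whitespace so trivially different prompts share one entry.
        normalized = " ".join(prompt.split())
        return hashlib.sha256(f"{model}\n{normalized}".encode("utf-8")).hexdigest()

    def complete(self, model: str, prompt: str) -> str:
        key = self._key(model, prompt)
        if key in self._store:
            self.hits += 1  # served from cache: no tokens billed
            return self._store[key]
        self.misses += 1
        response = self._call_model(model, prompt)
        self._store[key] = response
        return response
```

For example, two requests for "How do I reset my password?" that differ only in spacing trigger a single model call; the second is answered from the cache.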

How are LLMs different from traditional NLP or chatbots?

Traditional chatbots rely on rules or intent classifiers, which makes them rigid and brittle. LLMs, by contrast, are context-aware and adaptive, handling nuanced queries without extensive reprogramming. For SaaS, this means moving from static FAQ bots to dynamic assistants that infer user intent and reason over context.

What industries benefit most from LLM-powered SaaS?

LLMs deliver value wherever text-heavy, compliance-driven, or customer-facing processes exist:

- Healthcare: Patient education, note summarization.

- Finance: Fraud detection, compliance reports.

- Education: AI tutors, personalized feedback.

- Legal: Contract review, case summarization.

- CX Platforms: Chatbots, ticket triage, knowledge bases.

How do you ensure data security and compliance with LLMs?

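Best practices include anonymizing or redacting sensitive data before it reaches the model, encrypting data pipelines, enforcing access controls, and aligning with regulations such as GDPR and HIPAA and audit frameworks such as SOC 2. For sensitive industries, self-hosted open-source LLMs are often safer than third-party APIs because data never leaves infrastructure the provider controls.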
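Redaction before a prompt leaves your infrastructure can be as simple as pattern substitution. The sketch below is illustrative only: the regular expressions are assumptions that catch common email, card-number, and phone formats, and they will both miss some PII (names, addresses, national IDs) and occasionally over-redact (for example, long digit sequences). It is not a compliance guarantee; dedicated PII-detection tooling is usually needed in regulated settings.

```python
import re

# Illustrative patterns only. Order matters: card numbers are replaced
# before the looser phone pattern can claim them.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),  # 13 to 16 digits
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact(text: str) -> str:
    """Replace matched PII with typed placeholders such as [EMAIL]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


if __name__ == "__main__":
    ticket = "Contact jane.doe@example.com or +1 (555) 123-4567, card 4111 1111 1111 1111."
    print(redact(ticket))
```

Typed placeholders keep the prompt readable for the model (it still knows an email was present) without exposing the value itself.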

Should SaaS apps use API-based LLMs or self-hosted models?

- APIs: Best for quick adoption with low engineering overhead, but costs rise with usage and compliance options may be limited.

- Self-hosted: Better for large-scale or regulated SaaS, with full control and fine-tuning options, though requiring more resources.

- Hybrid: Many SaaS platforms combine both—APIs for general tasks and self-hosted models for sensitive workflows.
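The hybrid option usually comes down to a routing policy in front of the two backends. The following is a minimal sketch under assumed rules: the task names, the `SELF_HOSTED_TENANTS` set, and the `contains_regulated_data` flag are all hypothetical, and a real policy would come from product and compliance requirements.

```python
from dataclasses import dataclass
from enum import Enum


class Backend(Enum):
    EXTERNAL_API = "external_api"  # third-party hosted model
    SELF_HOSTED = "self_hosted"    # open-weight model on your own infrastructure


@dataclass(frozen=True)
class LLMRequest:
    tenant_id: str
    task: str
    contains_regulated_data: bool


# Hypothetical policy tables, for illustration only.
SENSITIVE_TASKS = {"clinical_summary", "contract_review"}
SELF_HOSTED_TENANTS = {"acme-health"}  # e.g. tenants with data-residency requirements


def choose_backend(req: LLMRequest) -> Backend:
    """Send regulated data, sensitive tasks, or opted-in tenants to the self-hosted model."""
    if (req.contains_regulated_data
            or req.task in SENSITIVE_TASKS
            or req.tenant_id in SELF_HOSTED_TENANTS):
        return Backend.SELF_HOSTED
    return Backend.EXTERNAL_API
```

Keeping the policy in one small, testable function makes it easy to audit which workloads can ever reach a third-party API.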

Can LLMs handle real-time SaaS workloads?

Yes, but optimization is key. Large models can be slow under heavy load. Solutions include smaller distilled models for quick tasks, caching, batching, and autoscaling GPU clusters. With the right architecture, LLMs can support real-time experiences like chat, analytics, and coding assistance.
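Batching trades a few milliseconds of waiting for much better GPU throughput. Here is a minimal asyncio sketch of micro-batching, assuming a hypothetical async `batch_fn` that accepts a list of prompts and returns one completion per prompt (many self-hosted inference servers expose some form of batched call, but the signature here is an assumption). After the first request arrives, the worker waits a fixed window, drains whatever else has queued up (up to `max_batch`), and issues a single batched call.

```python
import asyncio
from typing import Awaitable, Callable, List, Optional, Tuple

BatchFn = Callable[[List[str]], Awaitable[List[str]]]


class MicroBatcher:
    """Group concurrent requests into one batched model call (illustrative sketch)."""

    def __init__(self, batch_fn: BatchFn, max_batch: int = 8, max_wait: float = 0.01):
        self._batch_fn = batch_fn      # hypothetical batched inference call
        self._max_batch = max_batch
        self._max_wait = max_wait      # seconds to wait for more requests
        self._queue: Optional[asyncio.Queue] = None
        self._worker: Optional[asyncio.Task] = None

    async def submit(self, prompt: str) -> str:
        if self._queue is None:
            # Create loop-bound objects lazily, inside the running event loop.
            self._queue = asyncio.Queue()
            self._worker = asyncio.create_task(self._run())
        future = asyncio.get_running_loop().create_future()
        await self._queue.put((prompt, future))
        return await future

    async def _run(self) -> None:
        assert self._queue is not None
        while True:
            batch: List[Tuple[str, asyncio.Future]] = [await self._queue.get()]
            await asyncio.sleep(self._max_wait)  # let concurrent requests accumulate
            while len(batch) < self._max_batch and not self._queue.empty():
                batch.append(self._queue.get_nowait())
            try:
                results = await self._batch_fn([prompt for prompt, _ in batch])
            except Exception as exc:
                for _, fut in batch:
                    if not fut.done():
                        fut.set_exception(exc)
                continue
            for (_, fut), result in zip(batch, results):
                if not fut.done():  # the caller may have been cancelled meanwhile
                    fut.set_result(result)
```

Callers simply `await batcher.submit(prompt)`; five concurrent submissions within the window become one model call. The fixed wait window is a deliberate simplification: it adds a small, bounded latency to every request in exchange for fewer, larger inference calls.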

LLMs bring clear advantages to SaaS, but costs, compliance, deployment choices, and workload performance must be carefully managed. The right path depends on company size, industry, and user needs. With thoughtful planning, even small SaaS startups can build powerful AI-driven features affordably.

Transform your SaaS into an AI-native platform with Aalpha’s expertise in LLM integration. Partner with us to design secure, scalable, and cost-effective AI solutions that deliver real business impact.